The defender side of AI in 2026 has moved from optional to mandatory. The World Economic Forum's Global Cybersecurity Outlook 2026 found 94 percent of leaders agree AI is the single most significant driver of cybersecurity change in 2026, 87 percent flagged AI-related vulnerabilities as the fastest-growing cyber risk through 2025, and the share of organizations assessing the security of their AI tools pre-deployment nearly doubled from 37 percent to 64 percent in a single year. IBM's 2025 Cost of a Data Breach Report, based on more than 600 organizations, recorded the harder edge of the same trend: 13 percent of organizations reported breaches of AI models or applications outright, 97 percent of those breached organizations lacked proper AI access controls, and shadow AI added US$670,000 to the average breach cost. AI cybersecurity is no longer a research topic. It is a board-reported risk, a regulatory obligation in the EU, and the first new spending line in Gartner's 2026 information-security forecast.

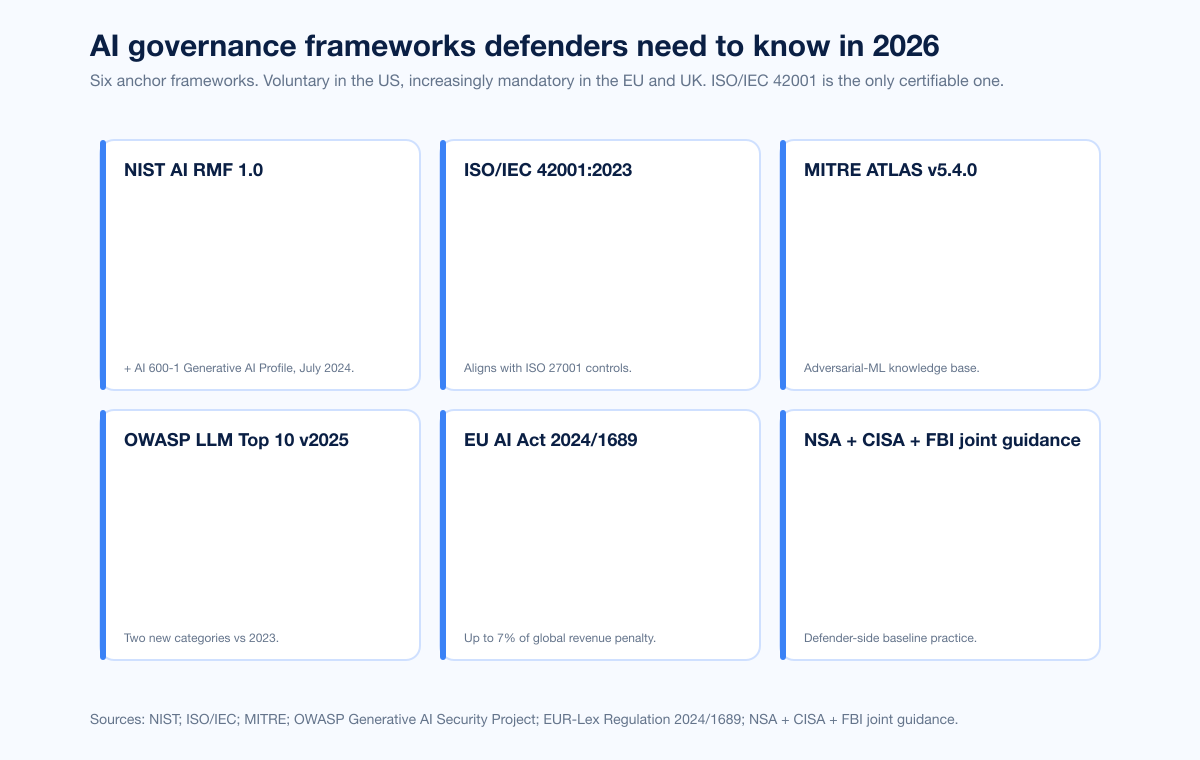

Three forces shape the defensive landscape in 2026. First, the risk taxonomy stabilized around two anchor frameworks: OWASP's Top 10 for LLM Applications version 2025, which ranks LLM01 Prompt Injection through LLM10 Unbounded Consumption, and MITRE ATLAS, which by version 5.4.0 in February 2026 tracks 16 tactics, 84 techniques, 56 sub-techniques, 32 mitigations, and 42 real-world case studies. Second, governance got teeth. The EU AI Act (Regulation 2024/1689) entered into force across all 27 member states on 1 August 2024; its prohibitions are enforceable from 2 February 2025, GPAI obligations from 2 August 2025, high-risk obligations from 2 August 2026, and full applicability lands 2 August 2027. Maximum penalty for prohibited-AI violations: EUR 35M or 7 percent of global revenue, whichever is higher. Third, defender AI scaled past pilot. CrowdStrike's Charlotte AI triages detections at 98 percent accuracy and saves 40-plus analyst hours per week. Microsoft's Security Alert Triage Agent identifies 6.5x more malicious alerts and improves verdict accuracy by 77 percent. IBM measured organizations using AI extensively cut breach lifecycle by 80 days and saved nearly US$1.9M per breach.

This post is the Stingrai research team's canonical 2026 reference for the defender side of AI cybersecurity. It pairs with our companion post on the offensive side, which covers attacker AI, GTG-1002, and AI-aware malware. It assembles verified figures from 21 named primary publishers: NIST, MITRE, OWASP, ISO/IEC, ENISA, the UK AI Security Institute, the US AI Safety Institute, the EU AI Office, IBM, Microsoft, CrowdStrike, Mandiant, Gartner, the World Economic Forum, HiddenLayer, Protect AI, Lakera, the NVD, NSA / CISA / FBI joint advisories, the New York Department of Financial Services, and the SEC. Lead data is full-year 2024 and 2025 telemetry plus governance dates published through April 2026; primary publishers have not yet released full-year 2026 reports as of the cutoff. Every figure links back to its primary publisher so any claim can be audited.

TL;DR: 12 labeled key facts

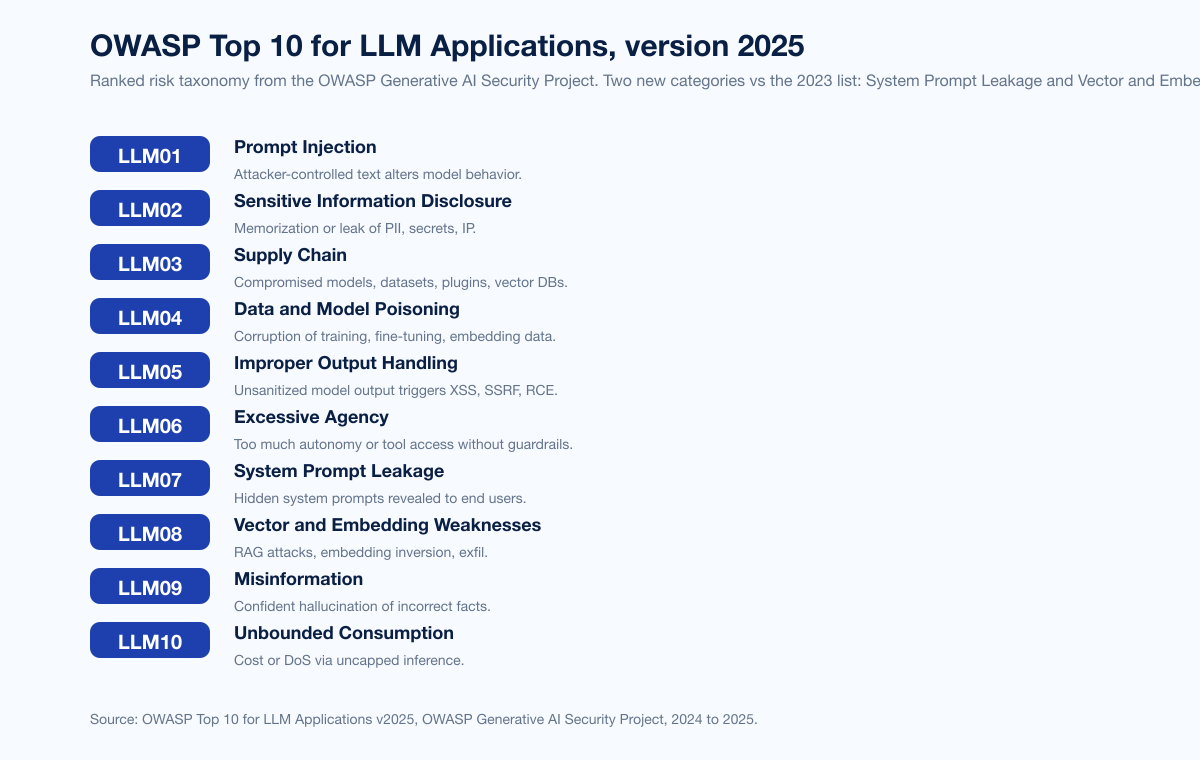

OWASP LLM Top 10 v2025: LLM01 Prompt Injection holds the top slot for the second consecutive edition; LLM02 Sensitive Information Disclosure jumped from sixth to second; two new categories were added vs 2023, LLM07 System Prompt Leakage and LLM08 Vector and Embedding Weaknesses (OWASP GenAI Security Project).

MITRE ATLAS v5.4.0 (February 2026): 16 tactics, 84 techniques, 56 sub-techniques, 32 mitigations, 42 case studies (MITRE ATLAS).

NIST AI RMF 1.0: four core functions Govern, Map, Measure, Manage; AI 600-1 Generative AI Profile (July 2024) adds 200-plus suggested actions for GenAI (NIST AI RMF, NIST AI 600-1).

ISO/IEC 42001:2023: first international standard for an AI Management System; published December 2023; certifiable by accredited bodies (ISO).

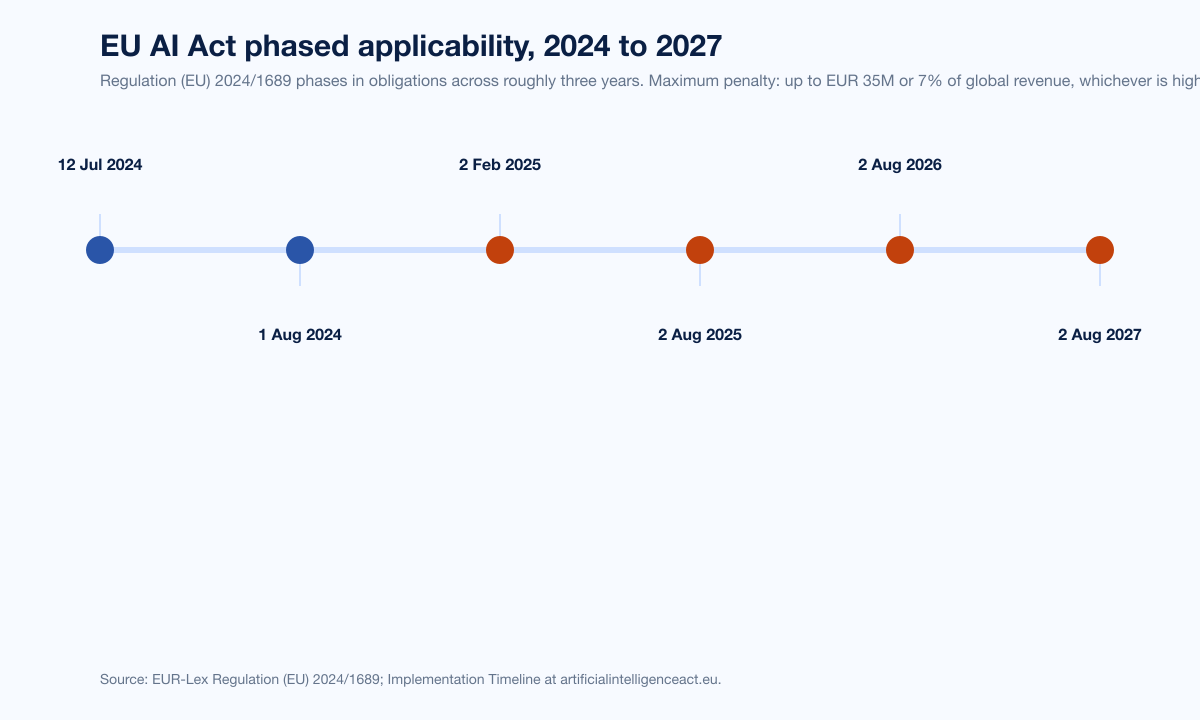

EU AI Act: prohibitions from 2 February 2025; GPAI rules from 2 August 2025; high-risk requirements from 2 August 2026; full applicability 2 August 2027; up to EUR 35M or 7 percent of global revenue in fines (EU AI Act Implementation Timeline).

WEF Global Cybersecurity Outlook 2026: 94 percent agree AI is the biggest driver of cybersecurity change in 2026; 87 percent flag AI vulnerabilities as the fastest-growing cyber risk; pre-deployment AI security assessment nearly doubled from 37 percent to 64 percent (WEF GCO 2026).

IBM Cost of a Data Breach 2025: 13 percent of organizations reported breaches of AI models or applications; 97 percent of those lacked proper AI access controls; shadow AI added US$670,000 per breach (IBM newsroom).

IBM CODB 2025 defender impact: organizations using AI extensively saved nearly US$1.9M per breach and cut breach lifecycle by 80 days; global average breach cost dropped 9 percent to US$4.44M (IBM Cost of a Data Breach 2025).

HiddenLayer 2025 AI Threat Landscape Report (250 IT leaders): 99 percent of organizations are prioritizing AI security in 2025; 95 percent increased AI security budgets; 74 percent confirm an AI breach in the past year; 33 percent of AI breaches originated from chatbot attacks (HiddenLayer).

Microsoft Cyber Signals Issue 9 (April 2025): Microsoft thwarted approximately US$4B in AI-powered fraud April 2024 to April 2025; blocked 1.6M bot signup attempts per hour (Microsoft Security Blog).

CrowdStrike Charlotte AI: 98 percent triage accuracy; 40-plus analyst hours saved per week; 70 percent reduction in manual investigation work (CrowdStrike press release).

Gartner forecast: global information security spending reaches US$244.2B in 2026 (up 13.3 percent YoY); AI cybersecurity market grows at 74 percent CAGR and AI data security at 155 percent CAGR; by 2027 generative AI is forecast to be involved in 17 percent of cyberattacks or data leaks (Gartner via SoftwareStrategiesBlog).

Key takeaways

The risk taxonomy is settled enough to use. OWASP LLM Top 10 v2025 plus MITRE ATLAS v5.4.0 give defenders a vocabulary that is now broadly accepted across vendors and standards bodies. CISOs no longer need to build their own LLM threat model from scratch; they can adopt these and customize.

Governance is now compliance. EU AI Act prohibitions are already enforceable, GPAI obligations have applied since August 2025, and the high-risk obligations land 2 August 2026. ISO/IEC 42001 is the first certifiable AI management system standard. SEC Form 10-K cybersecurity rules already capture material AI incidents. NY DFS made AI cybersecurity a Part 500 obligation in October 2024. The "voluntary AI ethics" era ended in 2024 to 2025.

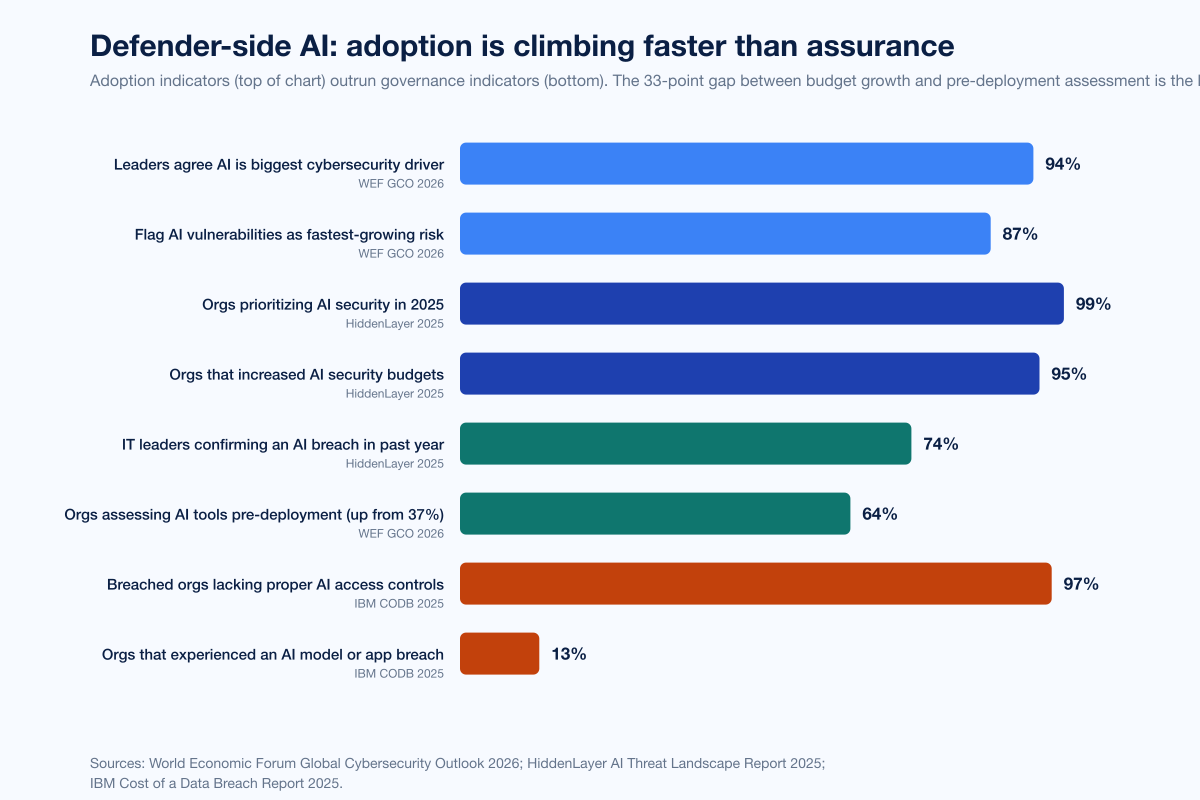

Adoption is climbing faster than assurance. HiddenLayer found 99 percent of organizations are prioritizing AI security and 95 percent are increasing budgets, but WEF found only 64 percent assess AI tools pre-deployment, and IBM found 97 percent of organizations with breached AI models or applications lacked proper access controls. The 35-point gap between "we want to" and "we actually checked" is the live exposure window.

Defender AI delivers measurable ROI now, not later. Charlotte AI delivers 98 percent triage accuracy and saves 40-plus analyst hours per week. Microsoft's Security Alert Triage Agent identifies 6.5x more malicious alerts and improves verdict accuracy by 77 percent. IBM measured nearly US$1.9M of savings and an 80-day shorter breach lifecycle for organizations deploying AI extensively. The argument for defender AI is no longer aspirational.

Open-source AI components are a real attack surface. A cross-tenant RCE on the Replicate.ai hosting platform was disclosed in May 2024. Roughly 100 malicious models were disclosed on Hugging Face in March 2024. LangChain CVE-2024-8309 and CVE-2025-68664 (LangGrinch, CVSS 9.3) target the most-used LLM application framework. Protect AI's huntr program has shipped dozens of disclosed vulnerabilities per month across the OSS AI/ML supply chain.

Methodology

Date cutoff: 29 April 2026. Lead data is full-year 2024 or 2025 telemetry where a primary publisher has released it; 2026 figures are labeled as forecasts or projections. Statistics that could not be reached via a named primary source on at least one verification pass were dropped rather than estimated. Where multiple primary publishers report compatible figures we cite the publisher whose methodology window matches the claim. Secondary news aggregators are cited only where they constitute the public record of a corporate disclosure or CVE assignment.

Sources span: NIST (AI 100-1 RMF, AI 600-1 GAI Profile); MITRE ATLAS v5.x (Sept 2025 NIST presentation, October 2025 Zenity collaboration, November 2025 v5.1.0, February 2026 v5.4.0); OWASP Top 10 for LLM Applications v2025 (released late 2024 by the OWASP Generative AI Security Project); ISO/IEC 42001:2023; EU AI Act (Regulation 2024/1689 published 12 July 2024 in OJEU); UK AI Security Institute Frontier AI Trends Report 2025 and 2025 year-in-review; ENISA Threat Landscape 2025 (October 2025; 4,875 incidents July 2024 to June 2025); WEF Global Cybersecurity Outlook 2026 (January 2026); IBM Cost of a Data Breach Report 2025 (released 30 July 2025; 600-plus organizations); HiddenLayer 2025 AI Threat Landscape Report (released 4 March 2025; 250 IT leaders); Lakera Gandalf platform statistics and arXiv 2501.07927 Gandalf the Red paper; Protect AI huntr program; NVD CVE records (CVE-2024-8309 and CVE-2025-68664); Replicate platform disclosure blog and contemporaneous reporting in Dark Reading and The Hacker News; Microsoft Cyber Signals Issue 9 (April 2025); Microsoft Digital Defense Report 2025 (October 2025); CrowdStrike 2025 and 2026 Global Threat Reports plus Charlotte AI product disclosures; NSA / CISA / FBI Cybersecurity Information Sheets (April 2024 and May 2025); CISA AI Roadmap and JCDC AI Cybersecurity Collaboration Playbook (January 2025); NY DFS Industry Letter 16 October 2024; SEC press release 26 July 2023; Anthropic Responsible Scaling Policy version 3.0; Gartner forecasts (3Q25 update plus 2026 IT and security press releases).

Figure 1: OWASP Top 10 for LLM Applications version 2025. Two new categories vs the 2023 list: LLM07 System Prompt Leakage and LLM08 Vector and Embedding Weaknesses. Source: OWASP Generative AI Security Project.

Risk taxonomy: the OWASP LLM Top 10 v2025

The OWASP Top 10 for Large Language Model Applications v2025 is the de facto risk taxonomy for AI applications in 2026. It was updated in late 2024 by the OWASP Generative AI Security Project to reflect real-world incidents and the rapid growth of agentic AI, adding two new categories, substantially reworking several others, and reordering existing risks based on community feedback.

LLM01 Prompt Injection

Manipulation of input prompts to compromise model outputs and behavior. LLM01 holds the top slot for the second consecutive edition. LLMs process instructions and data through a single channel without clear separation, which means an attacker can craft input that the model interprets as a new instruction rather than content to process. Indirect prompt injection (where attacker text lives in a webpage, email, or PDF that the LLM later retrieves) is the production-grade variant most CISOs underestimate.

LLM02 Sensitive Information Disclosure

Unintended exposure of training data, embedded secrets, or system context. LLM02 jumped from sixth place in the 2023 edition to second in 2025. The two failure modes are training-data memorization (the model regurgitates PII or proprietary text from its training set) and runtime context leak (the model echoes secrets a previous user pasted in or pulls from RAG-attached documents).

LLM03 Supply Chain

Compromise at any point in the AI ecosystem. The OWASP project explicitly names pre-trained base models, fine-tuning datasets, third-party plugins, vector databases, and external APIs as in-scope dependencies. The two named real-world cases below (Replicate.ai 2024 and Hugging Face malicious models) sit squarely in LLM03.

LLM04 Data and Model Poisoning

Intentional corruption of pre-training, fine-tuning, or embedding data. ENISA's 2025 Threat Landscape (ENISA TL 2025) tracks model poisoning as one of the named AI-system threats; it sits next to "Rules File Backdoor" attacks against AI coding assistants like Cursor and GitHub Copilot.

LLM05 Improper Output Handling

Insufficient validation, sanitization, or filtering of LLM output before downstream processing. The classic case: an application drops model output into an HTML page (XSS), a SQL query (SQLi), or a shell command (RCE) without escaping. LangChain's CVE-2024-8309 (June 2024, prompt-injection-leading-to-Cypher-injection in GraphCypherQAChain) is a textbook LLM05 chained with LLM01.
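The three sinks named above each have a standard-library answer in Python. The sketch below is illustrative only: the function names and the `users` table are hypothetical, and a real application would typically rely on its web framework's templating and ORM layers rather than hand-rolled helpers.

```python
import html
import shlex
import sqlite3


def render_html(model_output: str) -> str:
    """Escape model output before placing it in an HTML page (XSS sink)."""
    return "<p>" + html.escape(model_output) + "</p>"


def lookup_user(conn: sqlite3.Connection, model_output: str) -> list:
    """Bind model output as a query parameter instead of concatenating it (SQLi sink)."""
    cur = conn.execute("SELECT name FROM users WHERE name = ?", (model_output,))
    return cur.fetchall()


def shell_argument(model_output: str) -> str:
    """Quote model output so a POSIX shell treats it as one literal argument (RCE sink)."""
    return shlex.quote(model_output)
```

The common thread is that model output is treated like any other untrusted user input: it is encoded for the sink it lands in, never interpolated raw.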

LLM06 Excessive Agency

Granting the LLM too much autonomy, tool access, or permission scope. Excessive Agency is the agentic-AI category. As LLM-driven agents move from chat to action (calling tools, running shell commands, hitting payment APIs), the gap between "what the model is allowed to attempt" and "what the model can effectively do without a human in the loop" becomes the most consequential design decision in the system.

LLM07 System Prompt Leakage

Inadvertent or attacker-induced disclosure of system prompts and configuration. LLM07 is new in v2025. The risk is twofold: system prompts often contain operational details, brand tone instructions, or even keys; and once leaked, they become the attacker's blueprint for higher-quality jailbreaks.
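One low-cost control is to lint system prompts for embedded credentials before they ship, on the assumption that any system prompt will eventually leak. The sketch below is a heuristic, not a complete secret scanner: the two patterns (an AWS-style access key ID and a generic `name = value` assignment) and the function name are assumptions chosen for illustration.

```python
import re

# Hypothetical, deliberately small pattern set; real scanners carry many more rules.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "assigned_secret": re.compile(
        r"(?i)\b(api[_-]?key|secret|token|password)\b\s*[:=]\s*\S+"
    ),
}


def find_secrets(system_prompt: str) -> list:
    """Return the sorted names of every pattern that matches the prompt text."""
    return sorted(
        name for name, pattern in SECRET_PATTERNS.items()
        if pattern.search(system_prompt)
    )
```

A non-empty result is a reason to move the value into a secrets manager and pass it to tools out of band, so that a leaked prompt reveals tone instructions rather than keys.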

LLM08 Vector and Embedding Weaknesses

Manipulation or misinterpretation of vector representations used for retrieval-augmented generation (RAG). LLM08 is also new in v2025. The most-cited failure modes are embedding inversion (recovering the original text from its embedding), poisoned-document RAG injection, and cross-tenant leakage in shared vector stores.
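Cross-tenant leakage in a shared vector store is usually a filtering-order problem: similarity ranking runs before the tenant check, or the tenant check is missing. A minimal sketch of tenant-scoped retrieval follows, assuming an in-memory list of records with hypothetical `tenant_id`, `text`, and `vector` fields; production vector databases expose their own metadata-filter mechanisms for the same purpose.

```python
import math


def cosine(a: list, b: list) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(store: list, query_vec: list, tenant_id: str, k: int = 3) -> list:
    """Filter to the caller's tenant first, then rank by similarity."""
    candidates = [doc for doc in store if doc["tenant_id"] == tenant_id]
    ranked = sorted(
        candidates, key=lambda doc: cosine(doc["vector"], query_vec), reverse=True
    )
    return ranked[:k]
```

Filtering before ranking means another tenant's document can never be returned, even when it is the closest match to the query.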

LLM09 Misinformation

Confident generation of incorrect, biased, or misleading output. The category formalizes "hallucination" as a security concern, not just a quality concern, when downstream automation acts on the wrong answer.

LLM10 Unbounded Consumption

Insufficient controls on resource usage. The denial-of-wallet variant, where attackers force expensive inference loops, is the operationally common one in 2026. The Gartner forecast that AI cybersecurity will grow at 74 percent CAGR and AI data security at 155 percent CAGR partly reflects the cost of fixing this category.
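A common first control against denial-of-wallet is a per-caller token budget over a sliding time window. The sketch below is one possible shape, not a reference implementation: the class name, its fields, and the choice to pass the clock in explicitly are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class TokenBudget:
    """Sliding-window budget for one caller; instantiate one per API key or user."""
    max_tokens_per_window: int
    window_seconds: float
    _events: list = field(default_factory=list)  # (timestamp, tokens) pairs

    def allow(self, requested_tokens: int, now: float) -> bool:
        """Record and permit the request only if it fits in the current window."""
        cutoff = now - self.window_seconds
        # Drop usage that has aged out of the window.
        self._events = [(t, n) for t, n in self._events if t > cutoff]
        used = sum(n for _, n in self._events)
        if used + requested_tokens > self.max_tokens_per_window:
            return False
        self._events.append((now, requested_tokens))
        return True
```

In use, the caller would pass `time.monotonic()` as `now` and reject or queue the inference request when `allow` returns `False`.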

MITRE ATLAS: the adversarial-ML knowledge base

MITRE ATLAS (Adversarial Threat Landscape for Artificial Intelligence Systems) is the AI counterpart to ATT&CK. As of version 5.1.0 in November 2025 the framework contains 16 tactics, 84 techniques, 56 sub-techniques, 32 mitigations, and 42 real-world case studies. The November 2025 release expanded from 15 tactics and 66 techniques in October 2025; the October 2025 collaboration with Zenity Labs added 14 new attack techniques specifically for AI agents and generative AI systems. The February 2026 v5.4.0 release added "Publish Poisoned AI Agent Tool" and "Escape to Host" techniques. The case-study count grew from 33 in October 2025 to 42 by February 2026.

ATLAS pairs with ATT&CK rather than replacing it; defenders typically map attacks against AI systems to ATLAS first, then bridge to ATT&CK at the lateral-movement and impact stages where adversary tradecraft converges with traditional intrusions. The canonical "agent escape" pattern (model is jailbroken via LLM01, then uses tool access to drop code on the host or call privileged APIs) shows up cleanly in this combined view.

Figure 2: Six anchor governance frameworks. Voluntary in the US, increasingly mandatory in the EU and UK. ISO/IEC 42001 is the only certifiable AIMS. Sources: NIST; ISO; MITRE ATLAS; OWASP; EUR-Lex Regulation 2024/1689; NSA + CISA joint guidance.

Governance: from voluntary to mandatory

NIST AI Risk Management Framework 1.0 + AI 600-1

The NIST AI Risk Management Framework 1.0, released January 2023, defines four core functions: Govern, Map, Measure, Manage. Govern establishes accountability structures and a culture of risk management. Map identifies the AI system context, intended uses, and risks. Measure quantifies and tracks risks across the system lifecycle. Manage implements controls and incident response. The RMF is voluntary in the US, but it is increasingly the baseline that procurement teams and federal contractors map their AI assurance programs to.

The NIST AI 600-1 Generative AI Profile, published in July 2024, is the first cross-sectoral companion to AI RMF 1.0. It extends the four core functions to generative-AI-specific risks (CBRN information, confabulation, dangerous or violent recommendations, data privacy, information integrity, harmful bias) and adds more than 200 suggested actions. For defenders, the Profile is the most actionable artifact NIST has shipped in this space; treat it as a control library, not a policy document.

ISO/IEC 42001:2023

ISO/IEC 42001:2023, published December 2023, is the first international management-system standard for AI. It specifies requirements for establishing, implementing, maintaining, and continually improving an Artificial Intelligence Management System (AIMS). Critically, it is certifiable: an independent third-party conformity assessment body can audit and attest that an organization's AI processes meet the standard, the same way ISO 27001 attestation works for general information security. Microsoft, AWS, and a growing list of cloud providers have already pursued certification. For organizations subject to enterprise procurement, expect ISO/IEC 42001 to follow ISO 27001's path from "nice to have" to "required by RFP."

EU AI Act (Regulation 2024/1689)

The EU AI Act was published in the Official Journal of the EU on 12 July 2024 and entered into force on 1 August 2024 across all 27 member states. It is the most consequential AI regulation in 2026 because of three things: it has teeth (penalties up to EUR 35M or 7 percent of global revenue), it has extraterritorial reach (anyone selling AI services into the EU market falls under it), and its phased applicability lands key obligations on dates that are now in the immediate planning horizon.

The phased timeline (artificialintelligenceact.eu):

2 February 2025: Article 5 prohibitions enforceable. Banned practices include social scoring by public authorities, untargeted scraping of facial images for biometric databases, real-time remote biometric identification in publicly accessible spaces for law enforcement (with narrow exceptions), emotion recognition in workplaces and educational institutions, and biometric categorization that infers sensitive attributes.

2 August 2025: GPAI (general-purpose AI) model rules, governance obligations, confidentiality, and most non-GPAI penalties enforceable.

2 August 2026: High-risk AI system requirements enforceable. This is the big enforcement date for most AI systems used in regulated contexts (employment, education, critical infrastructure, law enforcement, biometrics, etc.).

2 August 2027: Full applicability. Pre-existing GPAI models placed on the EU market before 2 August 2025 must achieve compliance by this date.
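For compliance tooling, the phased timeline above reduces to a small date lookup. The sketch below encodes only the four dates listed in this post; the labels are abbreviated, the function name is hypothetical, and it is not a substitute for reading the Regulation's own applicability article.

```python
from datetime import date

# Milestones as listed in the timeline above (label text abbreviated).
MILESTONES = [
    (date(2025, 2, 2), "Article 5 prohibitions"),
    (date(2025, 8, 2), "GPAI model rules and governance obligations"),
    (date(2026, 8, 2), "High-risk AI system requirements"),
    (date(2027, 8, 2), "Full applicability"),
]


def obligations_in_force(on: date) -> list:
    """Return the milestone labels whose start date is on or before `on`."""
    return [label for start, label in MILESTONES if on >= start]
```

Called with this post's cutoff date of 29 April 2026, the function returns the first two entries, which matches the statement that prohibitions and GPAI obligations already apply while the high-risk requirements are still ahead.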

Figure 3: EU AI Act phased applicability from publication July 2024 through full applicability August 2027. Source: EUR-Lex Regulation (EU) 2024/1689; Implementation Timeline at artificialintelligenceact.eu.

US: EO 14179 and the AI Action Plan

The Biden Administration's Executive Order 14110 (Safe, Secure, and Trustworthy Development and Use of AI), signed 30 October 2023, was the most comprehensive US AI governance instrument. It was rescinded by President Trump within hours of his inauguration on 20 January 2025. The replacement, Executive Order 14179 "Removing Barriers to American Leadership in Artificial Intelligence", was signed 23 January 2025. It directs an action plan to sustain US AI leadership; that plan was released in July 2025.

For defenders, the practical implication is that the US AI security baseline now lives less in a single executive order and more in agency-specific guidance that survived the rescission. The SEC cybersecurity disclosure rules (finalized 26 July 2023, effective for fiscal years ending on or after 15 December 2023) require Form 10-K disclosure of cybersecurity risk management, plus Form 8-K Item 1.05 disclosure of material incidents within four business days of determining that an incident is material. The NY DFS October 2024 Industry Letter operationalized AI cybersecurity under 23 NYCRR Part 500 for covered financial institutions. The NSA, CISA, FBI, and Five Eyes partners co-authored the April 2024 Deploying AI Systems Securely Cybersecurity Information Sheet; NSA followed up on 22 May 2025 with AI Data Security: Best Practices for Securing Data Used to Train and Operate AI Systems. CISA's AI Cybersecurity Collaboration Playbook (TLP:CLEAR, January 2025) governs voluntary information sharing.

UK and other jurisdictions

The UK AI Security Institute (formerly UK AI Safety Institute, founded November 2023) has conducted evaluations on more than 30 frontier models. Its 2025 Frontier AI Trends Report found that AI models can complete apprentice-level cyber tasks 50 percent of the time on average, up from just over 10 percent in early 2024, and that AISI tested its first model that successfully completed expert-level cyber tasks (typically requiring 10-plus years of human experience) in 2025. AISI also notes it has found universal jailbreaks in every system it tested. The Inspect framework (and its siblings InspectSandbox, InspectCyber, ControlArena) is open-sourced and used by governments, companies, and academics globally.

Anthropic Responsible Scaling Policy

Frontier AI labs publish their own assurance frameworks. Anthropic's Responsible Scaling Policy version 3.0 defines AI Safety Levels modeled on US BSL biosafety levels: ASL-2 (current LLMs including Claude), ASL-3 (substantially elevated risk vs non-AI baselines or low-level autonomous capabilities), ASL-4 and beyond (not yet defined). Anthropic activated ASL-3 protections in 2025 for specific Claude deployments. For buyers, RSPs are the primary vehicle through which AI labs make commitments about model evaluation, deployment safeguards, and red-teaming before release.

Real-world AI vulnerability disclosures, 2024 to 2025

Risk taxonomies and governance frameworks land on enterprise risk registers, but it is the named CVE and vendor-disclosure record that gives defenders a sense of how AI systems actually fail.

Replicate.ai cross-tenant RCE (May 2024)

A vulnerability in Replicate.ai's hosting platform that would have allowed unauthorized cross-tenant access to customers' models, prompts, and results was responsibly disclosed in January 2024 and publicly reported in May 2024. The root cause was the AI model packaging format itself: AI models are typically distributed in formats that allow arbitrary code execution. The reported attack used a malicious Cog container uploaded to Replicate, which achieved remote code execution on the platform infrastructure with elevated privileges; the model and director containers shared a network namespace, which made cross-tenant traversal possible. According to Replicate's shared-network vulnerability disclosure and contemporaneous coverage in Dark Reading and The Hacker News, no customer data was compromised, no in-the-wild exploitation was observed, and Replicate hardened the platform with TLS-encrypted internal traffic. This is the canonical real-world LLM03 Supply Chain case.

Hugging Face: 100 malicious models (March 2024)

Approximately 100 machine-learning models uploaded to Hugging Face were disclosed as containing code-execution payloads in March 2024. The vector was the Python pickle serialization format, which is widely used for ML model storage and which can execute arbitrary code via the __reduce__ method on deserialization. Hugging Face had pickle scanning, malware scanning, and secrets scanning, but it did not block pickle models outright. Hugging Face has since extended in-platform model security checks via partnerships with third-party scanners. The disclosure was widely covered in Dark Reading and BleepingComputer. This is LLM03 Supply Chain operationalized at the model registry layer.
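The mechanism is easy to inspect without triggering it. Python's standard `pickletools` module can walk a pickle's opcode stream without deserializing it, which is the general idea behind static pickle scanners. The sketch below is a simplified illustration, not Hugging Face's scanner: the opcode set and function name are assumptions, and legitimate models can also use these opcodes, so a hit means "review", not "malicious".

```python
import pickle
import pickletools

# Opcodes that import callables or invoke them during unpickling.
SUSPICIOUS_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX",
}


def suspicious_opcodes(data: bytes) -> set:
    """Statically list risky opcodes in a pickle; never calls pickle.loads."""
    return {
        opcode.name
        for opcode, _arg, _pos in pickletools.genops(data)
        if opcode.name in SUSPICIOUS_OPCODES
    }


class DemoPayload:
    """Harmless stand-in for a malicious object: unpickling it would call print."""

    def __reduce__(self):
        return (print, ("payload ran",))


benign_bytes = pickle.dumps({"weights": [0.1, 0.2, 0.3]})
payload_bytes = pickle.dumps(DemoPayload())  # serializing does not run the payload
```

Scanning `benign_bytes` finds nothing, while scanning `payload_bytes` reports a `REDUCE` opcode plus the import opcode that resolves `print`. Formats that store only tensors, such as safetensors, avoid the problem by design.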

LangChain CVE-2024-8309 (June 2024)

CVE-2024-8309 is a prompt-injection-into-Cypher-injection vulnerability in LangChain's GraphCypherQAChain module. User-controlled natural-language input was directly embedded into LLM-generated Cypher queries; an attacker could craft prompts that influenced query generation to compromise the connected graph database. This is LLM01 Prompt Injection chained with LLM05 Improper Output Handling.

LangChain CVE-2025-68664 LangGrinch (December 2025)

CVE-2025-68664 (CVSS 9.3) is a serialization-injection vulnerability in LangChain's dumps() and dumpd() functions that fail to escape dictionaries containing the lc key. The escaping bug enables injection of LangChain object structures via user-controlled fields like metadata, additional_kwargs, and response_metadata. Exploitation paths include secret extraction from environment variables, instantiation of classes within pre-approved trusted namespaces, and arbitrary code execution via Jinja2 templates. Because LangChain is the most-deployed LLM application framework, the blast radius is large.
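Independently of patching, applications can refuse user-controlled structures that carry a serializer's reserved marker key. The sketch below is a generic defensive check written for illustration; it is not LangChain's fix and uses no LangChain API. The function name is hypothetical, and the only detail taken from the advisory is the reserved key name `lc`.

```python
def contains_reserved_key(value, reserved: str = "lc") -> bool:
    """Recursively report whether any nested dict uses the reserved key."""
    if isinstance(value, dict):
        if reserved in value:
            return True
        return any(contains_reserved_key(v, reserved) for v in value.values())
    if isinstance(value, (list, tuple)):
        return any(contains_reserved_key(v, reserved) for v in value)
    return False
```

A caller might run this over fields such as `metadata` before they reach any serialization boundary and reject the request on a hit. Upgrading to a fixed release remains the primary remediation; a check like this is defense in depth.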

Lakera Gandalf prompt-attack corpus

Lakera's Gandalf game has been online since May 2023 and has accumulated more than 40 million prompts and password guesses from 1+ million players, who have collectively spent more than 25 years of game time. Lakera released a corpus of 279,000 labeled prompt attacks alongside the Gandalf the Red paper on arXiv (January 2025). For defenders, the Gandalf corpus is the largest publicly available real-world prompt-injection dataset and a useful baseline for AI red-team tooling.

Protect AI huntr

Protect AI's huntr program is the world's first bug-bounty platform focused exclusively on AI/ML packages, libraries, frameworks, and foundation models. The community has surpassed 15,000 hunters. Recent disclosure batches include 32 vulnerabilities in June 2024 and nearly three dozen in October 2024 (including three critical: two in the Lunary AI developer toolkit and one in Chuanhu Chat). Validated vulnerabilities earn bounties and are assigned CVEs. The huntr backlog is the leading public indicator of the OSS AI/ML supply chain's residual risk.

Defender-side AI: adoption, budgets, and the assurance gap

Three primary publishers dominate the defender-side data. Their numbers tell a coherent story: organizations are spending and adopting fast, but governance is lagging, and the organizations that spend on AI defenses earn measurable breach-cost reductions.

WEF Global Cybersecurity Outlook 2026

The WEF Global Cybersecurity Outlook 2026, published January 2026, surveys C-suite leaders globally on cybersecurity priorities. AI is the dominant theme of the 2026 edition. Key figures:

94 percent of leaders agree AI is the single most significant driver of cybersecurity change in 2026.

87 percent flagged AI vulnerabilities as the fastest-growing cyber risk through 2025.

The share of organizations assessing the security of their AI tools pre-deployment nearly doubled from 37 percent in 2025 to 64 percent in 2026.

The 64 percent pre-deployment-assessment figure is the most important defender-adoption datapoint of the year. It says structured AI security review is graduating from a research practice into a procurement gate, but with more than a third of organizations still skipping it.

IBM Cost of a Data Breach 2025

The IBM Cost of a Data Breach 2025 Report, released 30 July 2025 (600-plus organizations), is the canonical source for AI's effect on breach economics:

13 percent of organizations reported breaches of AI models or applications.

97 percent of those breached organizations lacked proper AI access controls.

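20 percent of breaches involved shadow AI; high levels of shadow AI added US$670,000 to the average breach cost, measured against organizations with little or no shadow AI (shadow-AI breaches averaged US$4.63M; the headline global average was US$4.44M).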

Organizations using AI and automation extensively cut breach lifecycle by 80 days and saved nearly US$1.9M vs those that did not.

The global average breach cost dropped to US$4.44M, down 9 percent year over year, the first material drop since 2020, attributed to faster detection and containment via AI-powered defense tools.

HiddenLayer 2025 AI Threat Landscape Report

HiddenLayer's 2025 AI Threat Landscape Report (released 4 March 2025) surveyed 250 IT leaders. Headline figures:

99 percent of organizations are prioritizing AI security in 2025.

95 percent increased AI security budgets.

74 percent of IT leaders said their organization definitely experienced an AI breach in the past year.

72 percent of IT leaders flag shadow AI as a major risk.

33 percent of AI breaches originated from chatbot attacks; 45 percent from malware in models pulled from public repositories; 21 percent from third-party applications.

The 33 percent chatbot figure is the operational counterpart to OWASP LLM01 Prompt Injection: attacks on internal and external chatbots are now the second most common origin of AI breaches in the survey, behind only malware in models pulled from public repositories (45 percent).

Figure 4: Defender adoption is climbing faster than assurance. Sources: WEF Global Cybersecurity Outlook 2026; IBM Cost of a Data Breach 2025; HiddenLayer AI Threat Landscape Report 2025.

Gartner: the new AI security market

Gartner's 3Q25 information security forecast projects global security spending reaching US$244.2B in 2026, up 13.3 percent year over year. AI cybersecurity grows at 74 percent CAGR and AI data security at 155 percent CAGR, the two highest growth lines in the forecast. Gartner created a dedicated AI cybersecurity market category for the first time in this forecast, and the category nearly doubles in 2026. By 2028, more than 50 percent of enterprises will use AI security platforms to protect their AI investments. By 2027, 17 percent of total cyberattacks or data leaks will involve generative AI.
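The growth figures are easier to sanity-check with the arithmetic written out. The helpers below are a sketch with hypothetical names; the forecast horizon behind the CAGR figures is not stated in this post, so the `years` argument is an assumption the reader must supply.

```python
def prior_year_value(current: float, yoy_growth: float) -> float:
    """Back out last year's value from this year's value and its YoY growth rate."""
    return current / (1.0 + yoy_growth)


def compound_multiple(cagr: float, years: int) -> float:
    """Total growth multiple after compounding `cagr` for `years` years."""
    return (1.0 + cagr) ** years


# US$244.2B in 2026 at 13.3 percent growth implies roughly US$215.5B in 2025.
implied_2025_spend = prior_year_value(244.2, 0.133)
```

The same compounding shows how steep the two category rates are: at 74 percent CAGR a market roughly triples in two years, and at 155 percent it grows about 6.5 times over the same period.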

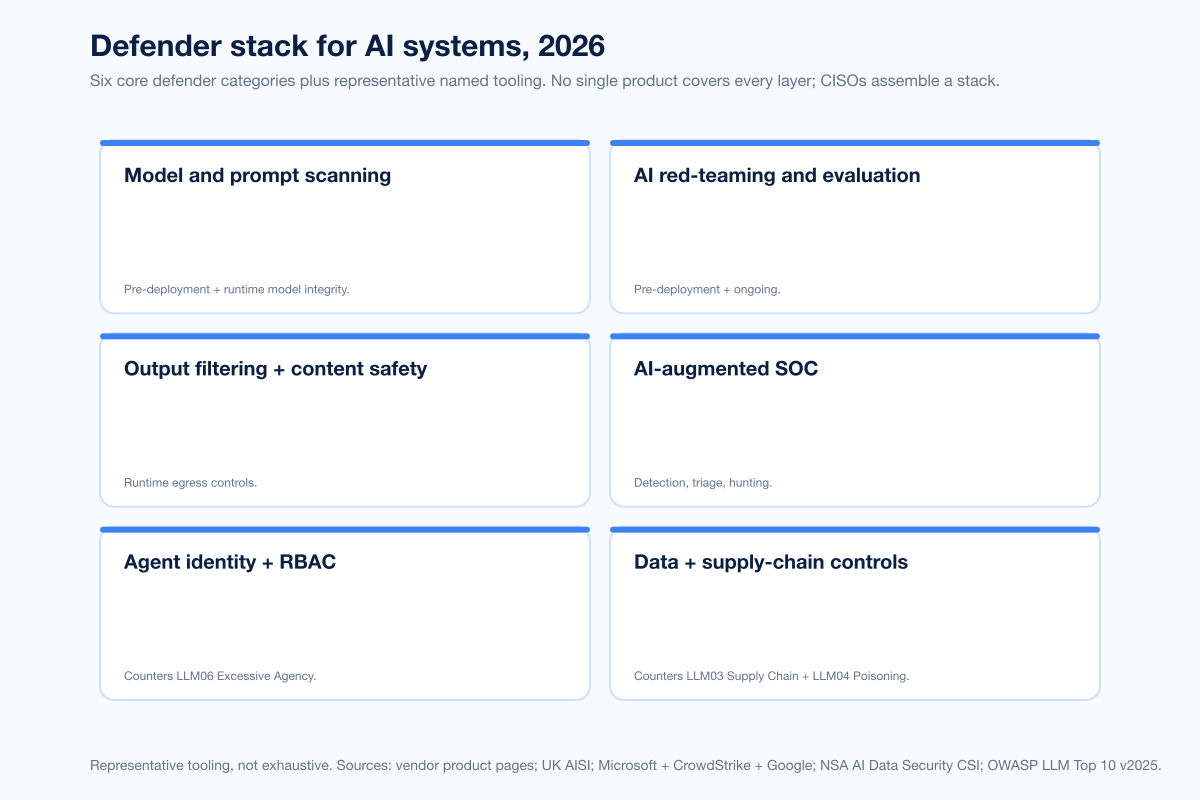

The defender stack for AI systems in 2026

Six pillars define the practical defender stack. No single product covers every layer; CISOs assemble from the categories below.

Figure 5: Six categories of the AI defender stack. Representative tooling, not exhaustive. Sources: vendor product pages; UK AISI; Microsoft Security Copilot; CrowdStrike Charlotte AI; NSA AI Data Security CSI; OWASP LLM Top 10 v2025.

1. Model and prompt scanning

Pre-deployment and runtime model integrity, plus prompt-time content classification. HiddenLayer (Austin, Texas) ships AISec for model scanning and prompt detection. Protect AI (Seattle, Washington) ships Guardian (model scanning) and Recon (LLM red-team automation). Lakera (Zurich) ships Lakera Guard for prompt classification. Garak is an open-source LLM vulnerability scanner widely used in AI red-team workflows.

2. AI red-teaming and evaluation

Pre-deployment and ongoing adversarial testing. The UK AI Security Institute Inspect framework (open source) is widely adopted for autonomous evaluation harnesses. Lakera's Gandalf corpus provides 279,000 labeled prompt attacks. Calypso AI (Dublin) ships ModelOps and red-team evaluation suites. Frontier AI labs (Anthropic, OpenAI) maintain internal red teams plus partner programs.

3. Output filtering and content safety

Runtime egress controls between the model and downstream systems. Microsoft Purview AI Hub, AWS Bedrock Guardrails, Google Cloud Model Armor, and NVIDIA NeMo Guardrails are the four canonical platform-side options. The Output Filter category is where enterprises most commonly bridge the gap between the LLM05 Improper Output Handling category and the application-layer SQL/HTML/shell escaping that traditional appsec teams already understand.

4. AI-augmented SOC

Defender AI inside detection, triage, hunting, and response. The 2025 to 2026 maturation here is sharper than any of the categories above:

CrowdStrike Charlotte AI Detection Triage triages security detections with over 98 percent accuracy and saves 40-plus analyst hours per week (continuous real-world data from the Falcon Complete Next-Gen MDR environment). The November 2025 Charlotte Agentic SOAR release introduced an orchestration layer for the Falcon Agentic Security Platform, and Charlotte AI achieved FedRAMP High Authorization for public-sector deployment.

Microsoft Security Copilot plus the Security Alert Triage Agent identifies 6.5x more malicious alerts, improves verdict accuracy by 77 percent, and frees analysts to spend 53 percent more time investigating real threats. Microsoft ships Security Copilot to all Microsoft 365 E5 customers.

Microsoft Cyber Signals Issue 9 (April 2025) reported Microsoft thwarted approximately US$4 billion in AI-powered fraud between April 2024 and April 2025, blocked roughly 1.6 million bot signup attempts per hour, and rejected 49,000 fraudulent partnership enrollments.

Google Sec-PaLM, IBM watsonx for Cyber, and Cisco AI Defense (powered by the Robust Intelligence acquisition) round out the major-platform AI-augmented SOC offerings.

5. Agent identity and RBAC

Authorization controls for autonomous AI agents. Excessive Agency (LLM06) is rapidly becoming the most consequential failure mode in agentic deployments. Mature programs scope tool access via OAuth-style scoped tokens, maintain explicit allow lists for tool invocation, require step-up authentication on privileged actions (payments, admin APIs, code execution), and log every tool call with provenance back to the originating prompt. The 2025 disclosures of agent jailbreaks (visible at scale in OpenAI's quarterly disruption reports and covered in the offensive companion post) make this the single highest-leverage control to harden in 2026.
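The four controls in this category (scoped tokens, allow lists, step-up authentication, provenance logging) can be combined in one authorization gate in front of every tool call. The sketch below is illustrative only. The tool names, scope strings, `AgentToken` class, and `authorize_tool_call` function are hypothetical, and a production system would validate real signed tokens instead of an in-memory dataclass.

```python
import logging
from dataclasses import dataclass

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent.toolcalls")

# Hypothetical allow list: tool name -> scope the caller's token must carry.
TOOL_SCOPES = {
    "search_docs": "docs:read",
    "send_payment": "payments:write",
}
# Hypothetical set of privileged tools that also need step-up authentication.
PRIVILEGED_TOOLS = {"send_payment"}


@dataclass(frozen=True)
class AgentToken:
    agent_id: str
    scopes: frozenset
    stepped_up: bool = False  # True only after a fresh human or MFA approval


def authorize_tool_call(token: AgentToken, tool: str, prompt_id: str) -> bool:
    """Decide whether a tool call may run, and log the decision with provenance."""
    required = TOOL_SCOPES.get(tool)
    if required is None:
        allowed, reason = False, "tool not on allow list"
    elif required not in token.scopes:
        allowed, reason = False, f"missing scope {required}"
    elif tool in PRIVILEGED_TOOLS and not token.stepped_up:
        allowed, reason = False, "step-up authentication required"
    else:
        allowed, reason = True, "ok"
    # prompt_id ties the call back to the originating prompt for later audit.
    log.info("agent=%s tool=%s prompt=%s allowed=%s reason=%s",
             token.agent_id, tool, prompt_id, allowed, reason)
    return allowed


reader = AgentToken("agent-7", frozenset({"docs:read"}))
payer = AgentToken("agent-7", frozenset({"docs:read", "payments:write"}))
payer_stepped_up = AgentToken("agent-7", payer.scopes, stepped_up=True)
```

Here `reader` can call `search_docs` but not `send_payment`. `payer` holds the payment scope but is still refused until step-up succeeds. Denied calls are logged as well as allowed ones, which keeps the audit trail complete.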

6. Data and supply-chain controls

Provenance, scanning, and assurance for the components that flow into AI systems. Model SBOM (a software bill of materials for AI artifacts) is emerging as the analog to traditional SBOM. Hugging Face ships in-platform pickle scanning and partners with third-party security vendors. Sigstore for ML artifacts is in active development. The NSA AI Data Security CSI (May 2025) explicitly addresses data supply-chain vulnerabilities, maliciously modified data, and data drift as primary risks.
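To make pickle scanning concrete, here is a minimal sketch built on the standard-library `pickletools` module. It is not the scanner Hugging Face or any vendor runs. It only shows the underlying idea: walk the pickle opcode stream without loading it, and flag opcodes that import or call objects. The opcode set, the `suspicious_opcodes` helper, and the `Exploit` class are illustrative assumptions.

```python
import pickle
import pickletools

# Opcodes that import names or call objects while a pickle is being loaded.
SUSPICIOUS_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX",
}


def suspicious_opcodes(data: bytes) -> set:
    """Return the import/call opcodes present in a pickle, without loading it."""
    seen = {opcode.name for opcode, _arg, _pos in pickletools.genops(data)}
    return seen & SUSPICIOUS_OPCODES


class Exploit:
    """Stand-in for a malicious model file; a real one would call os.system."""

    def __reduce__(self):
        # Harmless callable used for illustration only.
        return (print, ("code would run at load time",))


benign = pickle.dumps({"weights": [0.1, 0.2]})
malicious = pickle.dumps(Exploit())
```

Plain data such as `benign` produces no flagged opcodes, while `malicious` contains a `REDUCE` call. Many legitimate model files also use these opcodes, so practical scanners usually go one step further and compare the imported names against an allow list rather than rejecting every hit.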

Industry-specific obligations: financial services, healthcare, critical infrastructure

Regulators in regulated industries are running ahead of horizontal AI regulation. The pattern is consistent: take an existing cybersecurity rule, instruct covered entities to apply it to AI, and add specific AI risk categories.

Financial services: the NY DFS Industry Letter of 16 October 2024 operationalized AI cybersecurity under 23 NYCRR Part 500. The letter does not impose new requirements; it explains how covered entities (banks, insurers, credit unions, mortgage originators) should apply existing Part 500 risk assessment, MFA, third-party service provider, and incident-reporting obligations to AI risk. It identifies four AI-specific risks: AI-enabled social engineering, AI-enhanced cyberattacks, theft of nonpublic information used in AI deployments, and supply-chain vulnerabilities. NY DFS made multifactor authentication for all authorized users mandatory by November 2025.

Public companies: the SEC cybersecurity disclosure rules, final 26 July 2023, require Form 10-K disclosure of cybersecurity risk management, strategy, and governance, plus Form 8-K Item 1.05 disclosure of material cybersecurity incidents within four business days. AI-related incidents that are material (e.g., a breach of an AI-driven customer-facing system, a deepfake-enabled BEC loss above the company's materiality threshold) trigger the same 8-K obligations as any other cyber incident.

Critical infrastructure: the NSA Joint Guidance on Deploying AI Systems Securely (April 2024, co-authored with CISA, FBI, ACSC, CCCS, NCSC-NZ, NCSC-UK) is the Five-Eyes baseline for critical-infrastructure AI deployment.

Operational technology / industrial: NSA, CISA, and partners released joint guidance on integrating AI in OT environments in 2025.

Industry threat-landscape signals

ENISA Threat Landscape 2025

ENISA's 2025 Threat Landscape (October 2025) analyzes 4,875 incidents from July 2024 to June 2025. Headline AI findings: AI-supported phishing accounted for more than 80 percent of observed social engineering activity worldwide by early 2025. Generative AI is described as both a weapon and a target. ENISA names the Rules File Backdoor supply-chain vector targeting AI coding assistants (Cursor, GitHub Copilot) by injecting malicious instructions into configuration files. ENISA also flags the rise of standalone malicious AI systems (locally hosted models built for offensive use) since the start of 2025.

Microsoft Digital Defense Report 2025

The Microsoft Digital Defense Report 2025 (October 2025) is the largest single defender-side telemetry release of the year. Microsoft observes that AI-driven phishing is now three times more effective than traditional campaigns; people are 4.5x more likely to click an AI-written phishing email versus a human-written one; and over 40 percent of ransomware attacks have a hybrid component. Defenders, Microsoft notes, are already using AI to block billions in fraud, compress response times from hours to minutes, and scale protections globally.

CrowdStrike 2026 Global Threat Report

The CrowdStrike 2026 Global Threat Report, titled "Year of the Evasive Adversary", anchors the offensive-side companion post but is also the canonical 2026 primary source for adversary-AI growth: an 89 percent year-over-year rise in AI-enabled adversary attacks in 2025. Defenders should treat that 89 percent figure as the headline justification for the budget growth visible in the WEF and HiddenLayer surveys.

Where defenders should focus in 2026

The data above suggests a sharp prioritization for the next twelve months.

Adopt OWASP LLM Top 10 v2025 + MITRE ATLAS as your shared vocabulary. Every AI risk register, AI red-team scope, and AI procurement assessment should map back to these two frameworks. The vocabulary is now stable enough that vendor product pages, audit checklists, and incident reports use them in compatible ways.
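One lightweight way to enforce the shared vocabulary is to check that every risk-register entry carries at least one identifier from each framework. The sketch below assumes a hypothetical `RiskEntry` record. The two regular expressions reflect the published ID styles (LLM01 through LLM10, and ATLAS IDs such as AML.T0051 for LLM Prompt Injection), but they check format only, not whether an ID exists in the current framework release.

```python
import re
from dataclasses import dataclass

OWASP_LLM_ID = re.compile(r"^LLM(0[1-9]|10)$")       # LLM01 .. LLM10
ATLAS_ID = re.compile(r"^AML\.T\d{4}(\.\d{3})?$")    # technique or sub-technique


@dataclass
class RiskEntry:
    title: str
    owasp_ids: list
    atlas_ids: list


def mapping_problems(entry: RiskEntry) -> list:
    """Return a list of mapping gaps; an empty list means the entry is mapped."""
    problems = []
    if not entry.owasp_ids:
        problems.append("no OWASP LLM Top 10 mapping")
    if not entry.atlas_ids:
        problems.append("no MITRE ATLAS mapping")
    problems += [f"malformed OWASP ID: {i}" for i in entry.owasp_ids
                 if not OWASP_LLM_ID.match(i)]
    problems += [f"malformed ATLAS ID: {i}" for i in entry.atlas_ids
                 if not ATLAS_ID.match(i)]
    return problems


# Hypothetical register entries.
mapped = RiskEntry("Indirect prompt injection via retrieved documents",
                   ["LLM01"], ["AML.T0051"])
unmapped = RiskEntry("Agent can issue refunds without review", ["LLM6"], [])
```

Running `mapping_problems` over the register in CI or before a review meeting turns "map back to these two frameworks" into a check that fails visibly when an entry is missing its mapping.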

Plan for EU AI Act high-risk obligations now. 2 August 2026 is the date that matters. If your AI systems touch employment, education, critical infrastructure, law enforcement, biometrics, or credit, scope and gap-assess against Annex III today.

Close the pre-deployment assessment gap. WEF found 64 percent of organizations now assess AI tools pre-deployment, up from 37 percent. The other 36 percent are likely over-represented in HiddenLayer's 74 percent confirmed-AI-breach group, although the two surveys sample different populations. A repeatable AI Security Review (template + risk gates + sign-off) is one of the highest-leverage controls a CISO can ship in 2026.

Prioritize agent-identity and RBAC controls. Excessive Agency (LLM06) is the failure mode whose blast radius is growing fastest. Scope tool access narrowly, allow-list tool invocation, require step-up auth on privileged actions, and log every tool call with provenance back to the originating prompt.

Deploy defender AI on the SOC, not just the perimeter. IBM measured nearly US$1.9M in savings per breach and an 80-day shorter breach lifecycle for organizations using AI extensively. Microsoft's Security Alert Triage Agent identifies 6.5x more malicious alerts. Charlotte AI saves 40-plus analyst hours per week. The ROI argument is concrete.

Treat the OSS AI/ML supply chain as in-scope. Pickle scanning, model SBOM, scoped credentials for model registries, and attestation of training data provenance belong in your standard supply-chain controls. The Replicate cross-tenant disclosure, the Hugging Face malicious-models disclosure, and the LangChain CVE record show why.

How Stingrai approaches AI security

At Stingrai we built our AI pentesting agent Snipe on top of HackerOne's report corpus, and our team's offensive research practice (18 published CVEs, presentations at DEF CON and BSides, OSCE3 / OSCP / OSWE / OSEP / CREST CRT credentials) directly informs how we test client AI systems. Our PTaaS engagements now ship with explicit OWASP LLM Top 10 v2025 + MITRE ATLAS coverage as a default workflow for any client deploying production LLM features. Founded in 2021 in Toronto with a London, UK office, the team has earned 5.0 / 5.0 across 19 Clutch reviews. We are aligned with SOC 2, ISO/IEC 27001, HIPAA, PCI DSS 4.0, NIST SP 800-53 / 800-171, DORA, and NIS2.

Frequently Asked Questions

What is the OWASP LLM Top 10 v2025 and why does it matter in 2026?

The OWASP Top 10 for LLM Applications version 2025 is the current canonical risk taxonomy for AI applications. It was published in late 2024 by the OWASP Generative AI Security Project and ranks ten risk categories from LLM01 Prompt Injection through LLM10 Unbounded Consumption, with two new categories added vs the 2023 list (LLM07 System Prompt Leakage and LLM08 Vector and Embedding Weaknesses). It matters in 2026 because the vocabulary is now stable enough that vendor product pages, audit checklists, regulatory guidance (NY DFS, NSA, ENISA), and academic threat models reference it in compatible ways. Every AI risk register, AI red-team scope, and AI procurement assessment should map back to it.

What does MITRE ATLAS cover and how big is it now?

MITRE ATLAS (Adversarial Threat Landscape for Artificial Intelligence Systems) is the AI counterpart to ATT&CK. It catalogs adversarial machine-learning tactics, techniques, and case studies. As of version 5.4.0 in February 2026 it tracks 16 tactics, 84 techniques, 56 sub-techniques, 32 mitigations, and 42 real-world case studies, including 14 new techniques contributed via the October 2025 collaboration with Zenity Labs that focus on AI agents and generative AI systems. ATLAS pairs with ATT&CK rather than replacing it; defenders typically map attacks against AI systems to ATLAS first, then bridge to ATT&CK at the lateral-movement and impact stages.

What is the EU AI Act timeline and what are the penalties?

The EU AI Act (Regulation 2024/1689) was published 12 July 2024 and entered into force 1 August 2024. Phased applicability: Article 5 prohibitions enforceable 2 February 2025; GPAI rules and most non-GPAI penalties enforceable 2 August 2025; high-risk system requirements enforceable 2 August 2026; full applicability 2 August 2027. Maximum penalty for prohibited-AI violations: EUR 35M or 7 percent of global revenue, whichever is higher. The Act has extraterritorial reach: any provider placing AI systems on the EU market is in scope, regardless of where that provider is established.
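The "whichever is higher" rule is easy to misread, so here is the arithmetic as a small sketch. The function name is hypothetical, amounts are whole euros, and the result is only the statutory ceiling for prohibited-AI violations as described above. Actual fines are set case by case below that ceiling.

```python
def max_prohibited_ai_fine_eur(global_annual_revenue_eur: int) -> int:
    """Ceiling for a prohibited-AI fine: EUR 35M or 7 percent of revenue, whichever is higher."""
    seven_percent = global_annual_revenue_eur * 7 // 100
    return max(35_000_000, seven_percent)


small_company_cap = max_prohibited_ai_fine_eur(100_000_000)    # 7% is EUR 7M, so the EUR 35M floor applies
large_company_cap = max_prohibited_ai_fine_eur(2_000_000_000)  # 7% is EUR 140M, which exceeds EUR 35M
```

The two branches cross at EUR 500M in revenue, where 7 percent equals EUR 35M. Above that point the percentage sets the ceiling.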

What is NIST AI RMF and the AI 600-1 Generative AI Profile?

The NIST AI Risk Management Framework 1.0 (January 2023) defines four core functions: Govern, Map, Measure, Manage. It is voluntary in the US but increasingly the procurement baseline for federal contractors and enterprise AI assurance programs. The AI 600-1 Generative AI Profile (July 2024) is the cross-sectoral GenAI companion; it adds more than 200 suggested actions across the four core functions for risks specific to generative AI (CBRN information, confabulation, dangerous or violent recommendations, data privacy, information integrity, harmful bias).

How is ISO/IEC 42001 different from NIST AI RMF?

ISO/IEC 42001:2023, published December 2023, is the first international management-system standard for AI. The key difference vs NIST AI RMF is that 42001 is certifiable: an accredited third-party conformity assessment body can audit and attest that an organization's AI processes meet the standard, the same model as ISO 27001 for general information security. NIST AI RMF is a voluntary framework with no formal certification scheme. Organizations subject to enterprise procurement should expect ISO/IEC 42001 to follow ISO 27001's path from "nice to have" to "required by RFP" through 2026 to 2027.

What does the IBM Cost of a Data Breach 2025 say about AI?

The IBM Cost of a Data Breach 2025 Report (released 30 July 2025; 600-plus organizations) found 13 percent of organizations reported breaches of AI models or applications, 97 percent of those lacked proper AI access controls, and shadow AI added US$670,000 to the average breach cost. On the defender side, organizations using AI extensively saved nearly US$1.9M per breach and cut breach lifecycle by 80 days. The global average breach cost dropped 9 percent year over year to US$4.44M.

What are the most-cited defender-AI products in 2026?

Three data points dominate citation in primary publishers, two of them product metrics. CrowdStrike's Charlotte AI Detection Triage triages with 98 percent accuracy and saves 40-plus analyst hours per week. Microsoft's Security Alert Triage Agent identifies 6.5x more malicious alerts and improves verdict accuracy by 77 percent. Microsoft's Cyber Signals Issue 9 reported approximately US$4 billion in AI-powered fraud thwarted across April 2024 to April 2025. Beyond those, Google Sec-PaLM, IBM watsonx for Cyber, and Cisco AI Defense (built on the Robust Intelligence acquisition) round out the major-platform offerings.

Which AI vulnerability disclosures should defenders study?

Five named cases:

Replicate.ai cross-tenant RCE (publicly disclosed May 2024 per the platform blog post and Dark Reading): a malicious Cog container exploited a shared network namespace; the canonical LLM03 Supply Chain case.

Hugging Face 100 malicious models (March 2024 per The Hacker News and BleepingComputer): pickle-format __reduce__ injection in a shared model registry.

LangChain CVE-2024-8309 (June 2024): prompt injection escalating to Cypher injection in GraphCypherQAChain.

LangChain CVE-2025-68664 LangGrinch (December 2025, CVSS 9.3): serialization injection in dumps()/dumpd() enabling secret exfiltration and RCE via Jinja2.

Lakera Gandalf corpus: 40-plus million prompts from 1-plus million players, plus the 279,000-prompt corpus published with the Gandalf the Red paper on arXiv (January 2025), together form the largest public real-world prompt-injection dataset.

How does this differ from your AI cyber attack statistics 2026 post?

The AI cyber attack statistics 2026 post covers the offensive side: how attackers use AI (Anthropic GTG-1002, OpenAI disruption reports, AiTM phishing kits, deepfake fraud, the 16 percent IBM attacker-AI rate). This post covers the defensive side: risk taxonomy (OWASP LLM Top 10, MITRE ATLAS), governance (NIST AI RMF, ISO/IEC 42001, EU AI Act, US executive orders, NY DFS), AI-system vulnerability classes plus named CVEs, defender adoption and budget data (WEF, HiddenLayer, IBM CODB), and the defender stack (model scanning, AI red-teaming, output filtering, AI-augmented SOC, agent identity, supply-chain controls). Read both for full coverage. They are companion pieces.

Where can I get the latest defender-side AI data?

The primary publishers to track. Standards: NIST, MITRE ATLAS, OWASP GenAI Security Project, ISO/IEC. Government / regulator: EU AI Office, UK AI Security Institute, CISA, NSA AISC, NY DFS, SEC. Surveys + telemetry: WEF Global Cybersecurity Outlook (annual, January), IBM Cost of a Data Breach (annual, July), HiddenLayer AI Threat Landscape (annual, March), Microsoft Digital Defense Report (annual, October), CrowdStrike Global Threat Report (annual, February), ENISA Threat Landscape (annual, October), Gartner (rolling forecasts).

Related reading

AI Cyber Attack Statistics 2026: Attacker AI, Influence Ops, and Agentic Threats — the offensive-side companion post.

Deepfake Statistics 2026 — synthetic-media fraud telemetry.

Supply Chain Attack Statistics 2026 — third-party and software-supply-chain risk.

Insider Threat Statistics 2026 — shadow-AI is an insider-risk channel.

Stingrai PTaaS — continuous penetration testing aligned with OWASP and NIST.

Stingrai AI Pentesting — AI-system testing built on top of HackerOne's report corpus.