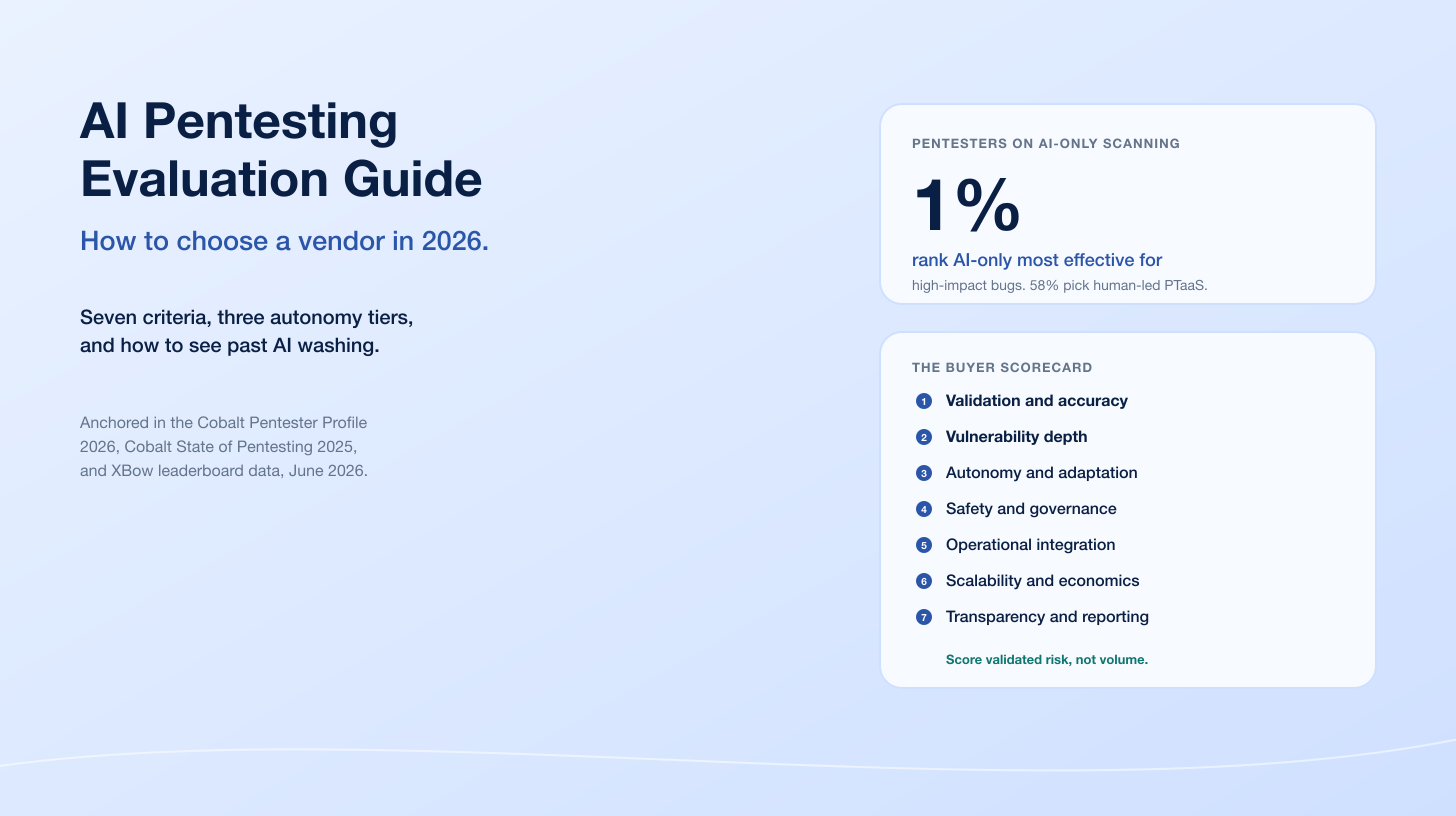

Deepfakes crossed the line from novelty into production-grade fraud tool in 2024 and 2025. Sumsub's 2024 Identity Fraud Report detected a 4x increase in deepfakes worldwide year over year, with deepfakes accounting for 7% of all fraud attempts on its identity-verification platform across more than 3 million analyzed cases. Pindrop's 2025 Voice Intelligence and Security Report measured a +1,300% surge in deepfake fraud attempts across contact centers over the same period, from roughly one per month to seven per day. iProov's Threat Intelligence Report 2025 recorded a 2,665% spike in Native Virtual Camera attacks and a 300% jump in face-swap attacks against identity verification systems.

This post is the Stingrai research team's canonical 2026 reference for deepfake activity. It assembles 69 numeric claims from 20 named primary publishers, including Sumsub, iProov, Pindrop, Onfido / Entrust, Regula, CrowdStrike, Microsoft Digital Defense Report, Chainalysis, Gartner, Europol IOCTA, FBI IC3, and the World Economic Forum. Lead data is full-year 2024 and full-year 2025 telemetry, the freshest available; primary publishers have not yet released full-year 2026 reports as of April 2026. Every number carries its source, year, and methodology window so any figure can be audited inline.

TL;DR: 10 labeled key stats

Deepfakes detected worldwide, 2023 to 2024: 4x increase, now 7% of all Sumsub-tracked fraud attempts (Sumsub Identity Fraud Report 2024).

Deepfake fraud attempts in contact centers, 2024 YoY: +1,300%, from one per month to seven per day (Pindrop 2025 Voice Intelligence and Security Report).

Face-swap attacks vs 2023 (iProov corpus): +300% (iProov Threat Intelligence Report 2025).

Native Virtual Camera attacks, 2024 (iProov corpus): +2,665% (iProov Threat Intelligence Report 2025).

Frequency of deepfake attempts against IDV, 2024: one every five minutes (Onfido and Entrust 2025 Identity Fraud Report).

Vishing attack growth, H1 to H2 2024: +442% (CrowdStrike 2025 Global Threat Report).

Arup Hong Kong deepfake CFO loss, Feb 2024: US$25.6M across 15 wire transfers to 5 accounts (CNN, May 2024).

Humans who reliably spot deepfakes in iProov's study: 0.1% (iProov Threat Intelligence Report 2025).

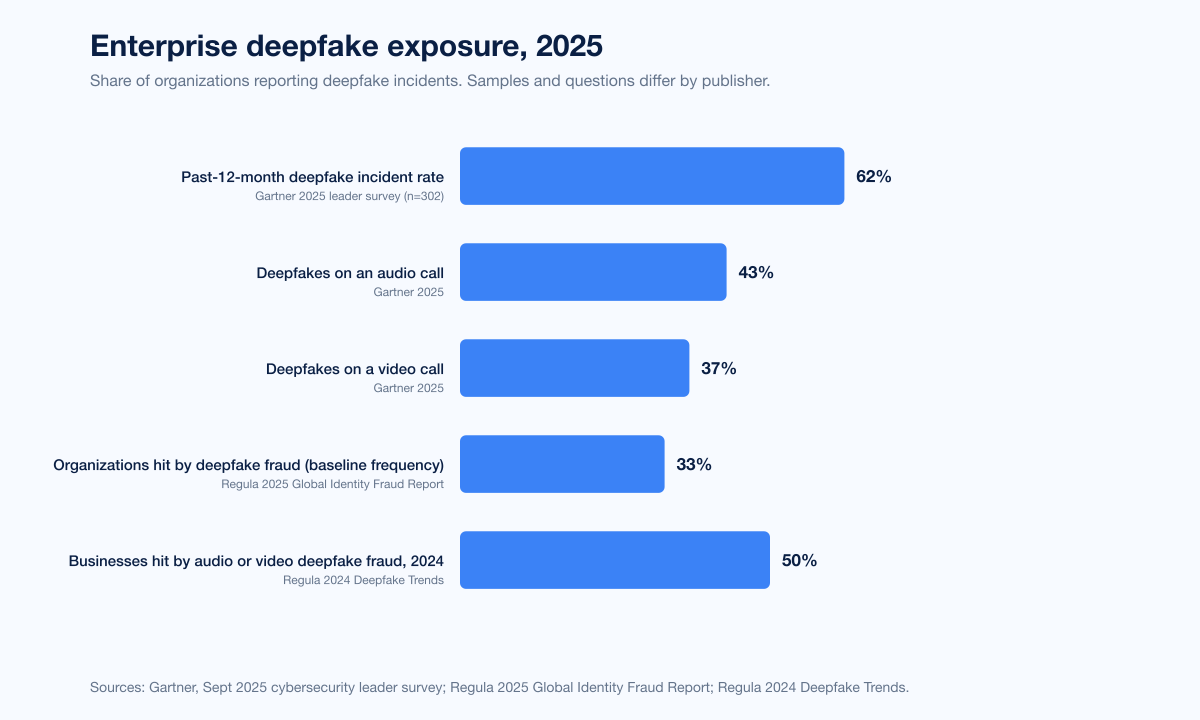

Organizations that faced a deepfake attack in the past 12 months, 2025 Gartner survey (n=302): 62% (Gartner, Sep 2025).

Enterprises that will consider IDV unreliable in isolation by 2026: 30% (Gartner, Feb 2024).

Key takeaways

Deepfake attacks are now a baseline fraud vector, not an edge case. One in three organizations in Regula's 2025 global survey reported deepfake fraud at roughly the same frequency as document fraud and social engineering. The Gartner 2025 leader survey puts the past-12-month exposure rate at 62%.

Voice has become the fastest-growing deepfake channel. Pindrop's +1,300% year-over-year surge in contact-center deepfake attempts, CrowdStrike's +442% vishing growth, and the FBI IC3's US$13 million in 2025 fake-interview losses all point at the voice channel. Video and face-swap attacks grow too, but voice is where volume is landing.

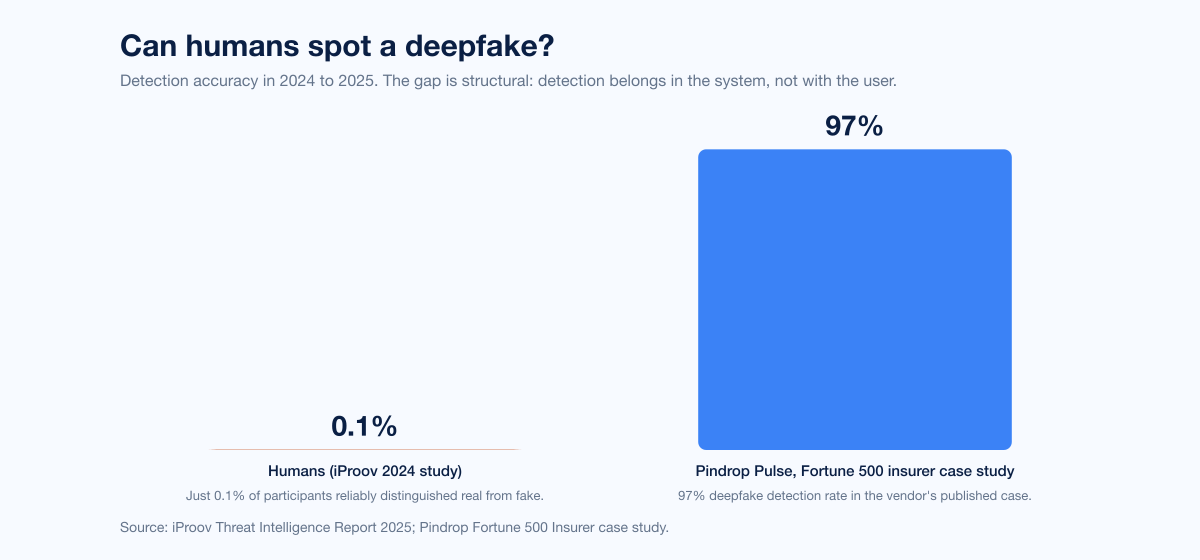

Humans are structurally unable to spot a modern deepfake. In iProov's detection study, only 0.1% of participants could reliably distinguish real from AI-generated content. Detection has to be layered into the system, not left to the end user.

The financial blast radius is in the tens of millions per incident at the top end. Arup's US$25.6M loss is the canonical case study; Ferrari and WPP avoided losses only because an executive asked one unscripted question or the target team was already suspicious. Process is doing the work here, not technology.

Regulators are reacting faster than usual. The EU AI Act, the UK Online Safety Act, the Take It Down Act in the US, and China's synthetic-media rules all force labeling or provenance disclosure. Expect aggressive enforcement in 2026 and 2027.

Methodology

Sources used: Sumsub (identity-verification platform fraud telemetry, more than 3 million analyzed attempts in 2023 and 2024, published November 2024); iProov (Security Operations Center detection telemetry across identity verification deployments, published February 2025 and February 2026); Pindrop (1.2B+ contact-center call corpus, published June 2025); Onfido and Entrust (identity platform telemetry, published November 2024); Regula Forensics (enterprise survey, global, published September 2025 and October 2024); CrowdStrike (Falcon threat intelligence, H1 vs H2 2024, published February 2025); FBI IC3 (US victim complaint corpus, CY 2024, published April 2025); Microsoft Digital Defense Report 2025 (Microsoft threat telemetry, published October 2025); Chainalysis (on-chain scam-wallet data, 2024 to 2025, published January 2026); Europol IOCTA 2025 (EU law-enforcement case corpus, published June 2025); World Economic Forum Global Risks Report 2024 (risk perception survey, published January 2024); Gartner press releases (analyst forecasts and leader surveys, February 2024 and September 2025); and named corporate incident coverage for Arup, Ferrari, and WPP (Hong Kong Police briefings, Fortune, MIT Sloan Management Review, CNN, Bloomberg).

Date cutoff: April 24, 2026. We lead with full-year 2024 or 2025 telemetry where a primary publisher has released it, and label any 2026 figure explicitly as a forecast or projection. Statistics that could not be reached via a named primary source on at least one verification pass were dropped rather than estimated. Secondary news aggregators and vendor blogs that restate other publishers' numbers without adding methodology are cited only where they constitute the public record of a corporate incident (Arup, Ferrari, WPP).

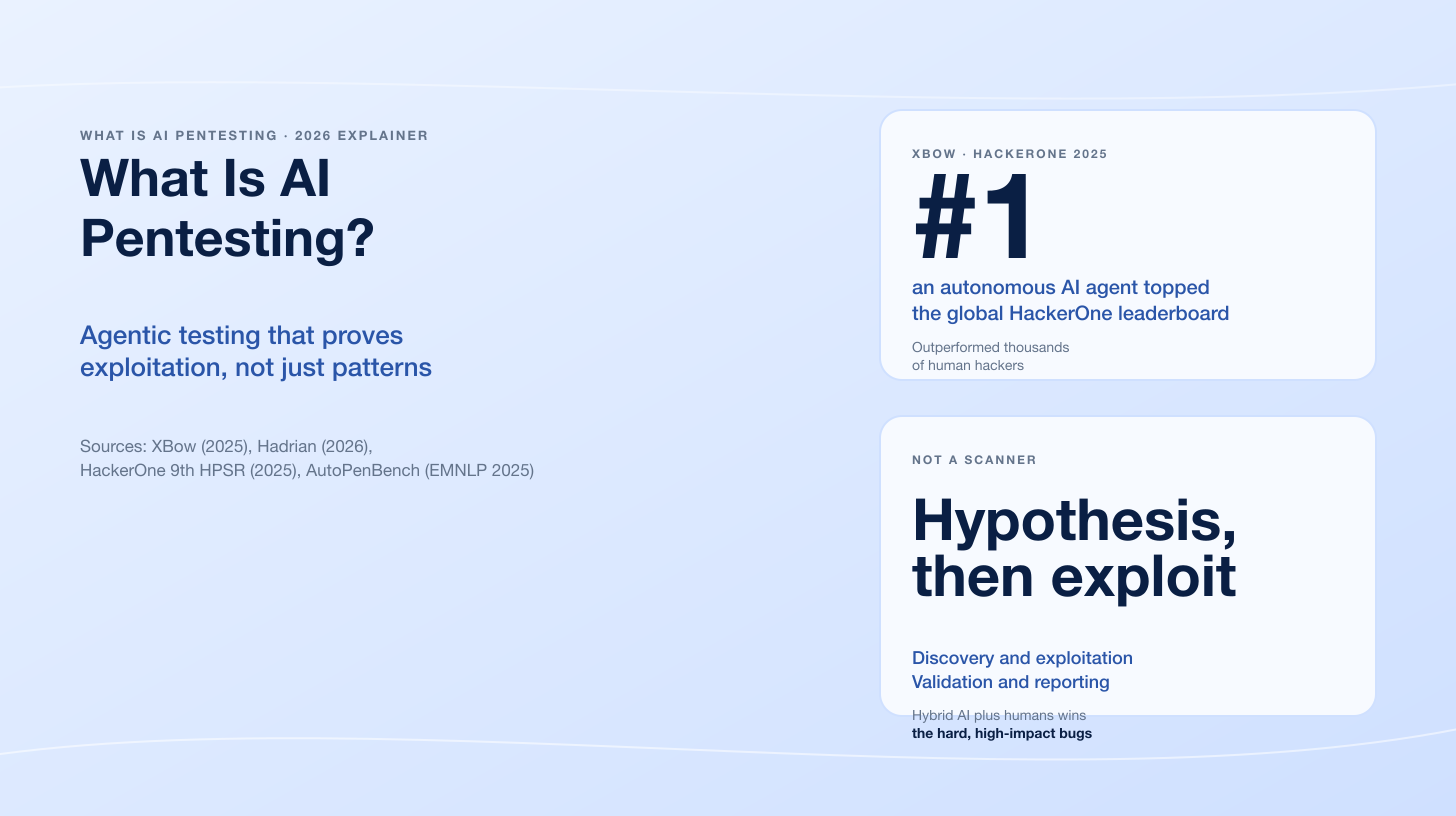

Figure 1: Annual deepfakes shared online. 2023 figure is the DeepMedia-estimated 500,000 videos and voice clips circulated on social media. 2025 projection is the 8 million forecast cited in Europol's IOCTA 2025 and aligned with DeepMedia's published doubling-every-six-months trajectory.

How big is the deepfake problem in 2026?

Three datasets anchor the answer, and they broadly agree.

Platform-level detection data (Sumsub)

Sumsub's 2024 Identity Fraud Report, released in November 2024 and covering over 3 million analyzed fraud attempts between 2023 and 2024, found a 4x year-over-year rise in detected deepfakes worldwide, with deepfakes representing 7% of all fraud attempts by 2024 (Sumsub, 2024). Within that total, the regional pattern was uneven:

| Region | 2024 YoY deepfake growth | Source |
|---|---|---|
| Middle East | +643% | Sumsub 2024 Identity Fraud Report |
| Africa | +393% | Sumsub 2024 Identity Fraud Report |
| LATAM and Caribbean | +255% | Sumsub 2024 Identity Fraud Report |
| South Korea (country with the largest single YoY growth) | +735% | Sumsub 2024 Identity Fraud Report |
| United Kingdom | +118% | Sumsub 2024 Identity Fraud Report |

Election-cycle effects amplified deepfake activity even further in countries holding 2024 votes. Sumsub's May 2024 press release recorded a 245% YoY rise in deepfakes worldwide in Q1 2024 alone, and country-level surges of 303% in the US, 1,625% in South Korea, and 2,800% in China over the 12 months leading into the Q1 2024 cut.

Identity-verification attack data (iProov)

iProov publishes its annual Threat Intelligence Report based on telemetry from its Security Operations Center (iSOC), dark-web monitoring, red-team engagements, and biometric-security research. The 2025 report, released in February 2025, quantifies the attack growth for remote identity verification specifically:

| Attack type or metric | 2024 figure (growth vs 2023, or count) | Source |
|---|---|---|
| Native Virtual Camera attacks | +2,665% growth | iProov Threat Intelligence Report 2025 |
| Face Swap attacks | +300% growth | iProov Threat Intelligence Report 2025 |
| Documented attack combinations | 115,000+ (count) | iProov Threat Intelligence Report 2025 |
| Dark-web users selling attack kits | ~24,000 (count) | iProov Threat Intelligence Report 2025 |

Native Virtual Camera injection lets an attacker feed a pre-recorded or live-generated face-swap stream directly into an identity verification app as if it were coming from the device's camera. It bypasses basic passive-liveness checks. iProov describes it as the primary threat vector of 2024.

Voice-channel attack data (Pindrop and CrowdStrike)

Pindrop's 2025 Voice Intelligence and Security Report analyzed over 1.2 billion calls across its contact-center customer base in 2024 (Pindrop, 2025). Headline findings:

Deepfake fraud attempts, 2024 YoY: +1,300%, from roughly one per month to seven per day.

Synthetic voices as share of Q4 2024 contact-center calls: 0.33%, a +173% increase vs Q1 2024.

Synthetic voice attacks at insurance companies, 2024: +475%.

Synthetic voice attacks at banks, 2024: +149%.

Projected US contact-center fraud exposure, 2025: US$44.5B.

CrowdStrike's 2025 Global Threat Report clocks vishing (voice phishing) at +442% between the first and second half of 2024, and documents that AI-generated phishing emails land a 54% success rate against human targets, roughly 4.5x the 12% rate for human-written phishing. CrowdStrike explicitly ties its deepfake-voice category to the US$25.6M Arup Hong Kong incident (see below).
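The figures in this section mix percent increases ("+1,300%"), multipliers ("4.5x"), and shares ("0.33%"), which are easy to misread against each other. The short Python sketch below shows one way to put them on a common footing. The helper names `growth_to_multiplier` and `implied_baseline` are illustrative, not taken from any publisher's methodology, and the sketch assumes each quoted growth figure is a simple percent increase over its baseline period.

```python
def growth_to_multiplier(pct_growth: float) -> float:
    """Convert a percent increase (300 means +300%) into a multiplier (4.0 means 4x)."""
    return 1.0 + pct_growth / 100.0


def implied_baseline(current: float, pct_growth: float) -> float:
    """Back out the starting value implied by a current value and its percent growth."""
    return current / growth_to_multiplier(pct_growth)


# Growth figures quoted in this section, as percent increases.
quoted = {
    "Pindrop deepfake fraud attempts (2024 YoY)": 1300,
    "Pindrop insurance synthetic voice (2024)": 475,
    "CrowdStrike vishing (H1 to H2 2024)": 442,
}
for label, pct in quoted.items():
    print(f"{label}: +{pct}% is {growth_to_multiplier(pct):.2f}x the baseline")

# Pindrop: 0.33% of Q4 2024 calls, up 173% vs Q1 2024, implies a Q1 share near 0.12%.
print(f"Implied Q1 2024 synthetic share: {implied_baseline(0.33, 173):.2f}%")

# CrowdStrike: 54% vs 12% success rate is a ratio, not a percent increase.
print(f"AI vs human phishing success ratio: {54 / 12:.1f}x")
```

Note that the windows differ (year over year for Pindrop, half over half for CrowdStrike), so the multipliers describe each publisher's own baseline and are not directly comparable.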

Figure 2: Year-over-year growth rates by attack channel, 2023 to 2024 or H1 vs H2 2024 depending on publisher. Sources: Pindrop 2025 Voice Intelligence and Security Report; iProov Threat Intelligence Report 2025; CrowdStrike 2025 Global Threat Report.

Category split: voice, face-swap, injection, document forgery

The useful way to segment deepfake attacks is by channel because defenses are channel-specific.

Voice clones

Synthetic voice became the most commonly weaponized deepfake modality in 2024. Pindrop found that 0.33% of Q4 2024 contact-center calls contained a synthetic voice, up 173% from Q1 2024. The FBI's December 2024 public service announcement warned that generative AI lowers the barrier to voice-clone fraud because a few seconds of a target's voice from social media is enough to produce a convincing impersonation. The FBI's 2024 Internet Crime Report logged US$2.77 billion in BEC losses across 21,442 incidents, many of which rode a voice-clone follow-up call to pressure staff into approving a wire.

Face-swap and video synthesis

iProov's 300% face-swap growth is the canonical number. Face-swap attacks pair a pre-recorded video of the attacker with a photo of the victim and use generative models to superimpose the victim's face onto the attacker's moving head in real time. The attack reaches identity-verification systems through three paths: a native virtual camera, a device hijack, or a deepfake stream piped into a legitimate camera over a browser or driver shim. Native Virtual Camera alone grew 2,665% YoY per iProov.

Injection vs presentation attacks

Traditional biometric threat modeling split face attacks into "presentation attacks" (a photo or mask held up to the camera) and "injection attacks" (spoofed video streamed directly into the IDV pipeline). 2024 was the year injection definitively overtook presentation. Onfido and Entrust's 2025 Identity Fraud Report finds that digital document forgeries now account for 57% of document fraud, up 244% vs 2023 and 1,600% vs 2021, and that deepfakes represent 24% of all fraudulent attempts to pass motion-based biometrics checks.

Document forgery

Digital document forgery is technically adjacent to face deepfakes but increasingly pipelined with them. Onfido and Entrust's 244% YoY rise in digital forgeries is a direct read on how Photoshop-plus-template pipelines have been displaced by generative tools that produce passport, driver's license, and utility-bill images at the click of a button. Their telemetry records a deepfake attempt every five minutes across their platform in 2024.

Industry impact

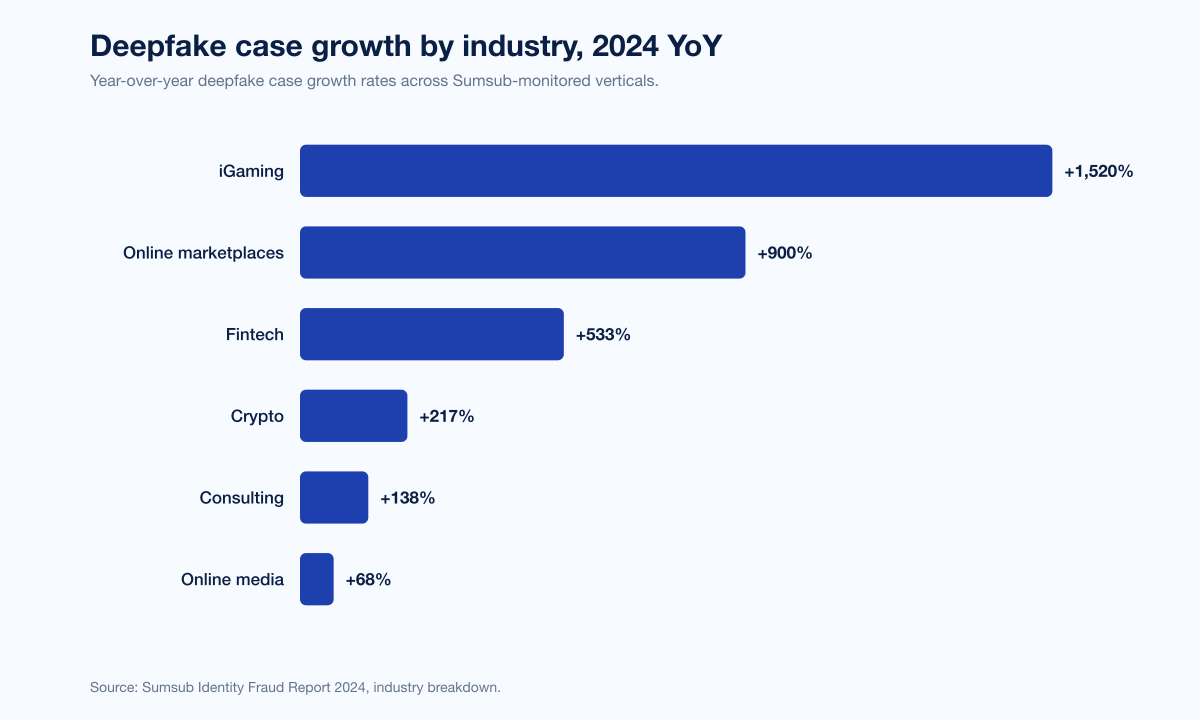

Sumsub's 2024 report breaks industry-level deepfake growth into categories that map cleanly onto buyer verticals.

Figure 3: Year-over-year deepfake case growth rates by industry, 2023 to 2024. Source: Sumsub Identity Fraud Report 2024.

| Industry | 2024 YoY deepfake case growth | Source |
|---|---|---|
| iGaming | +1,520% | Sumsub 2024 |
| Online marketplaces | +900% | Sumsub 2024 |
| Fintech | +533% | Sumsub 2024 |
| Crypto | +217% | Sumsub 2024 |
| Consulting | +138% | Sumsub 2024 |
| Online media | +68% | Sumsub 2024 |

Onfido and Entrust's 2025 report, focused on identity verification, ranks cryptocurrency as the single most-targeted industry in 2024 at 9.5% of fraud attempts, nearly double the next-most-targeted sector (lending and mortgages at 5.4%).

Regula's 2024 Deepfake Trends survey (Regula, 2024) found that 50% of businesses globally experienced audio or video deepfake fraud in 2024, averaging US$450,000 in losses per affected organization. Regula's 2025 Identity Fraud Report, published in September 2025, updates the picture: deepfake fraud now strikes organizations at a 33% frequency, as often as traditional identity spoofing (34%) and biometric fraud (34%). Among enterprises reporting over US$1 million in fraud losses, 40% said they had been hit by deepfakes specifically.

Specific named incidents with verified dollar losses

Aggregate statistics matter, but CISOs pitch budget requests with named case studies. Three stand out.

Arup Hong Kong, February 2024 (US$25.6M loss)

A finance worker in Arup's Hong Kong office received what appeared to be a WhatsApp message from the UK-based CFO requesting a confidential transaction. The worker was invited to a video conference call where an entire roster of "colleagues," including the CFO, appeared on screen. Every participant except the worker was a deepfake. Over the course of the call and its aftermath, the worker authorized 15 wire transfers totaling approximately US$25 million to five different Hong Kong bank accounts (CNN, Feb 4 2024; Hong Kong Police briefing). Arup was publicly identified as the victim in May 2024 (CNN, May 16 2024). CrowdStrike's 2025 Global Threat Report records the loss as US$25.6M, which matches the reported HK$200 million total converted at the prevailing exchange rate, and none of the funds had been recovered as of early 2025.

Ferrari, July 2024 (loss averted)

A Ferrari executive received WhatsApp messages purportedly from CEO Benedetto Vigna referencing an impending acquisition and demanding an immediate NDA signature. A follow-up voice call used a convincing AI-generated deepfake of Vigna's southern Italian accent. The executive grew suspicious after noticing faint inconsistencies in the voice's tone and posed a book-title question only the real Vigna would know. The call ended immediately when the attacker could not answer (Fortune, July 2024; MIT Sloan Management Review, 2024). The MIT Sloan case study treats this as the canonical example of social countermeasures (ask something the AI cannot know) beating a technically strong deepfake.

WPP, May 2024 (loss averted)

WPP CEO Mark Read was impersonated via a voice-clone plus Microsoft Teams video call. Attackers built a fake WhatsApp account using a publicly available image of Read, then arranged a Teams call and used the meeting chat to further the impersonation. WPP staff escalated before any money moved (OECD.AI Incident Database, entry 983; Marketing-Interactive, May 2024). A WPP spokesperson confirmed no information or funds were lost.

Other large-cost deepfake vectors

Deepfake job-interview scams. The FBI's 2024 Internet Crime Report documented US$13 million in reported losses from victims who encountered voice or video deepfakes during remote job interviews in 2025.

Government-impersonation crypto scams. Chainalysis's 2026 Crypto Crime Report found scams using deepfaked images of government officials grew more than 1,400% in 2025, and that AI-enabled scams generated 4.5x more revenue per operation than traditional scams. The average scam payment rose to US$2,764 in 2025 from US$782 the year before, a 253% increase. Total 2025 crypto-scam losses hit a record US$17 billion.

Human detection rates

One of the more sobering findings in the 2024-2025 literature is that human beings simply cannot reliably detect a modern deepfake with the naked eye.

Figure 4: In iProov's 2024 study, only 0.1% of participants could reliably distinguish real from AI-generated content. Source: iProov Threat Intelligence Report 2025.

iProov's 2025 report cites a study where just 0.1% of participants could reliably distinguish real from AI-generated content. Microsoft's Digital Defense Report 2025 notes that modern deepfake techniques are "convincing enough to defeat selfie checks and liveness tests, such as simulating natural eye blinks or head turns," and that AI-driven identity forgeries grew 195% globally. When users are confronted with a high-quality face-swap or voice-clone in context (a Teams call with what appears to be a co-worker's face and voice), Ferrari-style skepticism is the exception, not the rule.

The implication: detection belongs in the IDV system, the call-center voice-biometrics layer, or the content-provenance layer, not with the user.

Election-cycle and influence operations

2024 was the biggest election year in modern history. WEF's Global Risks Report 2024 ranked AI-driven misinformation and disinformation as the most severe short-term global risk over a two-year window. Almost 3 billion people voted that year, in countries including Bangladesh, India, Indonesia, Mexico, Pakistan, the United Kingdom, and the United States.

Sumsub's country-level data shows the correlation cleanly. Country-level YoY deepfake surges ahead of 2024 elections included:

| Country | 2024 YoY deepfake surge | Source |
|---|---|---|
| Indonesia | +1,550% | Sumsub, May 2024 |
| Moldova | +900% | Sumsub, May 2024 |
| Mexico | +500% | Sumsub, May 2024 |
| South Africa | +500% | Sumsub, May 2024 |
| United States | +303% | Sumsub, May 2024 |
| India | +280% | Sumsub, May 2024 |

Sumsub also reported 81% of survey respondents expressed concern that deepfakes would affect election integrity (Sumsub, 2024).

Microsoft's Digital Defense Report 2025 attributes much of the 2024 election-cycle deepfake activity to nation-state influence operations. Specifically, it documents Chinese actor use of AI-generated content, deepfakes, and automated social-media manipulation to shape narratives in targeted democracies.

AI-generated CSAM (a distinct, documented problem)

Europol's IOCTA 2025 flags AI-generated child sexual abuse material as a fast-growing category. The UK Internet Watch Foundation (IWF July 2024 AI-CSAM update) confirmed 245 AI-generated CSAM reports in 2024, up from 51 in 2023, a 380% increase. The IWF also reported that 90% of the AI-generated images its analysts assessed were realistic enough to qualify under the same law as real CSAM. This is a separate problem space from enterprise identity fraud but sits in the same generative-media pipeline and shapes detection-vendor road maps.

Regulatory landscape, briefly

Governments are legislating faster on deepfakes than on most categories of AI because the harms are concrete and the political pressure is tangible.

EU AI Act (in force August 2024, phased obligations through 2027). Article 50 requires AI-generated content to be marked in machine-readable form and, for deepfakes specifically, clearly disclosed to users as artificially generated or manipulated.

US Take It Down Act (signed May 2025). Criminalizes the publication of non-consensual intimate imagery (including deepfakes) and requires platforms to remove reported content within 48 hours.

US state laws. As of April 2026, 29 US states have criminal statutes covering non-consensual deepfake intimate imagery, and a growing number target political deepfakes ahead of elections.

UK Online Safety Act (phased enforcement since 2024). Requires platforms to proactively detect and remove illegal content, including non-consensual deepfakes, under Ofcom's supervision.

China Deep Synthesis Provisions (in force January 2023) and Generative AI Measures (in force August 2023). Require provenance labeling on AI-generated content and consent from the subjects of any deepfakes.

Regulatory treatment of financial-crime deepfakes. No dedicated US federal statute yet, but the Arup-style voice-clone BEC pattern is prosecuted under wire fraud (18 U.S.C. § 1343) and, where applicable, computer-fraud statutes.

What defenders are doing

Figure 5: Enterprise deepfake exposure, 2025. Sources: Gartner, Sep 2025; Regula 2025 Identity Fraud Report.

A 2025 Gartner leader survey (n=302 cybersecurity leaders) found 62% of organizations had already faced at least one deepfake attack in the past 12 months; 43% had encountered a deepfake on an audio call, and 37% on a video call (Gartner, Sep 2025). Separately, Gartner analysts project that by 2026, 30% of enterprises will consider identity verification and authentication solutions unreliable in isolation because of deepfake attacks on face biometrics (Gartner, Feb 2024).
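Because the 62% figure comes from a sample of 302 respondents, it helps to see roughly how much sampling uncertainty that implies. The Python sketch below computes a textbook 95% margin of error for a proportion. It assumes simple random sampling and a normal approximation, neither of which Gartner's release is cited here as confirming, so treat the result as an order-of-magnitude check rather than Gartner's own error bar. The function name `margin_of_error` is illustrative.

```python
import math


def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Normal-approximation margin of error for a sample proportion p with n respondents."""
    return z * math.sqrt(p * (1.0 - p) / n)


# Gartner 2025 leader survey: 62% of n=302 reported a deepfake attack.
p, n = 0.62, 302
moe = margin_of_error(p, n)
print(f"62% of n={n}: +/- {moe * 100:.1f} points "
      f"(about {100 * (p - moe):.1f}% to {100 * (p + moe):.1f}%)")
```

Under those assumptions the headline figure carries an uncertainty of roughly five to six percentage points in either direction, which still leaves it well above half of surveyed organizations.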

In response, the defender stack is converging on four layers:

Liveness detection on IDV. Active liveness (user actions, challenge-response, randomized prompts) is now table stakes; passive liveness alone is not sufficient after the iProov 2,665% Native Virtual Camera figure. Vendors include iProov, Jumio, Onfido/Entrust, and Sumsub. Regula and others offer forensic document checks alongside.

Voice biometrics with deepfake detection. Pindrop's own telemetry supports real-time synthetic-voice detection in contact-center audio; Microsoft Azure AI Content Safety, Pindrop Pulse, and academic models from UF, Northwestern, and Stanford all contribute. The 97% deepfake detection rate in a Pindrop Fortune 500 insurer case study is a public benchmark.

Content provenance. The C2PA standard, Adobe Content Credentials, and Google SynthID embed cryptographic provenance in generated images, video, and audio at creation time. Adoption is uneven across generative-model vendors; it is strongest in enterprise creative tools and weakest in open-source generation pipelines.

Process and awareness layers. The Ferrari "ask a book title" countermeasure is not just a joke. It reflects the most important defense in 2026: bake a human-in-the-loop challenge step into any wire-transfer, credential-reset, or NDA-signature workflow that arrives via an unusual channel. Regula's 2024 survey found 84% of surveyed businesses already use MFA and biometrics; the gap is in voice and video authentication, where process is still weaker than technology.
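The process layer above can be expressed as a simple routing rule: decide, before anyone acts on a request, whether it must pass an out-of-band check such as a call-back to a known number. The Python sketch below is one possible shape for that rule. The action names, the `TRUSTED_CHANNELS` set, and the US$10,000 threshold are all hypothetical placeholders for illustration, not values drawn from any cited source.

```python
from dataclasses import dataclass

# Workflows the article singles out for a human-in-the-loop challenge.
HIGH_RISK_ACTIONS = {"wire_transfer", "credential_reset", "nda_signature"}

# Hypothetical list of channels a request is normally expected to arrive through.
TRUSTED_CHANNELS = {"erp_workflow", "ticketing_system"}


@dataclass(frozen=True)
class Request:
    action: str
    channel: str
    amount_usd: float = 0.0


def requires_callback(req: Request, threshold_usd: float = 10_000.0) -> bool:
    """Return True when the request must be verified out of band before approval."""
    if req.action not in HIGH_RISK_ACTIONS:
        return False
    # Any high-risk request arriving over an unusual channel is challenged.
    if req.channel not in TRUSTED_CHANNELS:
        return True
    # Large wires are challenged even on the usual channel (assumed threshold).
    return req.action == "wire_transfer" and req.amount_usd >= threshold_usd


print(requires_callback(Request("wire_transfer", "whatsapp", 500.0)))
print(requires_callback(Request("wire_transfer", "erp_workflow", 500.0)))
```

The point of encoding the rule is that the challenge is triggered by the request's properties, not by whether the employee happens to feel suspicious during the call.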

Stingrai's social engineering engagements test exactly these paths. Our vishing engagements rehearse voice-clone scenarios (with consent), and our red team adversary simulations include deepfake video-conference impersonation of finance chiefs on test accounts. The data says volume is growing anywhere from 442% (vishing, H1 to H2 2024) to 1,300% (contact-center deepfake attempts, year over year) depending on the channel; running controlled drills is how you find out where your real escalation paths break.

Cost to organizations

Direct and projected fraud costs attributable to deepfakes across the sources we verified:

Average business deepfake loss, 2024 (Regula): US$450,000 per affected organization.

Total 2024 US cybercrime losses (FBI IC3): US$16.6 billion, up 33% YoY.

BEC losses 2024 (FBI IC3): US$2.77 billion across 21,442 incidents. Voice-clone layering is now a common follow-up tactic in BEC chains.

Deepfake-job-interview losses 2025 (FBI IC3): US$13 million.

AI-enabled crypto-scam losses 2025 (Chainalysis): US$17 billion total; +1,400% growth in government-impersonation deepfake scams; US$2,764 average scam payment (up 253% YoY); 4.5x revenue-per-operation vs non-AI scams.

Contact-center fraud exposure projection, 2025 (Pindrop): US$44.5 billion total fraud exposure; deepfake-related fraud projected +162% for the year.

Arup, Feb 2024 (single incident): US$25.6M.

Cyber insurance carriers have reacted. Several now treat social-engineering losses (including deepfake-driven wire fraud) as a named category subject to sub-limits, and binder conditions increasingly require voice-authentication or call-back protocols on wire transfers above a defined threshold. This is analogous to the MFA requirement that became standard after 2021's ransomware wave; expect it to become universal by the end of 2026.

Forward projection (2026 trajectory)

Projections should be labeled as projections. The ones cited by named primary sources in 2025 or early 2026 are:

Pindrop projects deepfake-related fraud growth of +162% in 2025 and total contact-center fraud exposure of US$44.5B (Pindrop, 2025).

Europol's IOCTA 2025 flags a projected 8 million deepfakes shared online in 2025, up from approximately 500,000 in 2023 (Europol IOCTA 2025; DeepMedia referenced for 2023 baseline).

Gartner projects 30% of enterprises will consider identity verification solutions unreliable in isolation by 2026 because of deepfake attacks on face biometrics (Gartner, Feb 2024).

Microsoft's Digital Defense Report 2025 anticipates continued growth in AI-agent-driven reconnaissance, phishing, and deepfake-enabled influence operations (Microsoft, 2025).

Chainalysis projects continued expansion of AI-enabled impersonation in crypto scams on the back of the 4.5x-per-operation revenue advantage AI provides (Chainalysis 2026 Crypto Crime Report).

No primary source known to us as of April 24, 2026 has published a credible full-year 2026 deepfake number; we list no 2026 retrospective stats because none yet exist.

What this means for defenders

Harden every wire, credential reset, and contract signing path with a human-in-the-loop challenge. Voice alone and video alone are no longer authoritative. Add a recorded call-back to a known number, a pre-agreed passphrase, or a "book question" style check on high-value transactions. Arup's lesson was not that the tech was too good; it was that the process had no second check.

Upgrade identity verification to active liveness plus injection-attack detection. Passive liveness was fine in 2022. After iProov's 2,665% Native Virtual Camera figure, the bar is active challenge and injection-stream detection.

Add voice-deepfake detection to every contact center. Pindrop, Microsoft Azure AI Content Safety, and academic detectors can flag synthetic audio in near real time. Pindrop's reported 97% detection rate at a Fortune 500 insurer is a reasonable target benchmark.

Adopt content-provenance. Publishers and internal comms teams should tag genuine content with C2PA or Content Credentials so any unlabeled impersonation is automatically suspicious.

Rehearse deepfake incidents in tabletops and red team engagements. Stingrai's social engineering and red team engagements include voice-clone and video-conference deepfake scenarios on customer test accounts. We founded Stingrai in 2021 to run controlled offensive engagements; deepfake-capable red team scenarios are a 2024 and later addition because the threat moved fast.

Keep tabs on regulation. EU AI Act obligations phase in through 2027, and the US Take It Down Act and state-level deepfake laws create new compliance duties for platforms that handle user-generated video or audio.
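To make the active-liveness recommendation above more concrete, the Python sketch below shows the server-side bookkeeping for a randomized challenge: issue an unpredictable sequence of prompts tied to a one-time nonce, then accept a response only if it arrives in time, carries the same nonce, and matches the prompts in order. The prompt names, the 20-second lifetime, and the function names are assumptions for illustration. Recognizing the user's actions from video, and detecting an injected stream, are separate problems that this sketch does not attempt.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Optional, Tuple

# Hypothetical prompt vocabulary; a real IDV product defines its own.
PROMPTS = ("turn_left", "turn_right", "blink", "smile", "nod")


@dataclass(frozen=True)
class Challenge:
    nonce: str
    prompts: Tuple[str, ...]
    expires_at: float


def issue_challenge(n_prompts: int = 3, ttl_seconds: float = 20.0,
                    now: Optional[float] = None) -> Challenge:
    """Create a one-time challenge with an unpredictable prompt order."""
    now = time.time() if now is None else now
    rng = secrets.SystemRandom()  # OS-backed randomness, not a seeded PRNG
    prompts = tuple(rng.sample(PROMPTS, n_prompts))
    return Challenge(secrets.token_hex(16), prompts, now + ttl_seconds)


def verify_response(ch: Challenge, nonce: str, observed: Tuple[str, ...],
                    now: Optional[float] = None) -> bool:
    """Accept only a timely response with the right nonce and prompt order."""
    now = time.time() if now is None else now
    if now > ch.expires_at:
        return False
    if not secrets.compare_digest(nonce, ch.nonce):
        return False
    return tuple(observed) == ch.prompts


challenge = issue_challenge()
print(challenge.prompts)
print(verify_response(challenge, challenge.nonce, challenge.prompts))
```

Randomizing the prompts and expiring them quickly is what makes a pre-recorded stream less useful; a live face-swap can still follow prompts, which is why the recommendation pairs active liveness with injection-attack detection.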

FAQ

What is the most-cited deepfake statistic for 2026?

For volume growth, Sumsub's 4x year-over-year increase in detected deepfakes from 2023 to 2024, based on more than 3 million analyzed fraud attempts, is the most-cited. For fraud specifically, Pindrop's +1,300% rise in contact-center deepfake fraud attempts over the same window is the other anchor. For IDV attacks, iProov's 2,665% Native Virtual Camera growth and 300% face-swap growth carry the most weight in the identity-verification vendor space.

How has deepfake fraud changed year over year?

Growth rates vary by channel and measurement window: +300% for face-swap attacks and +2,665% for Native Virtual Camera attacks (iProov, 2024 vs 2023), +1,300% for contact-center deepfake attempts (Pindrop, 2024 YoY), and +442% for vishing (CrowdStrike, H1 to H2 2024). The common thread across 2024 and 2025 is that deepfakes moved from isolated experiments into mainstream fraud-as-a-service offerings, with iProov documenting roughly 24,000 dark-web users selling attack kits.

Which industries were hit hardest by deepfakes in 2024 and 2025?

Per Sumsub's 2024 data, iGaming saw the sharpest year-over-year deepfake case growth at +1,520%, followed by online marketplaces (+900%), fintech (+533%), crypto (+217%), consulting (+138%), and online media (+68%). Onfido and Entrust's 2025 Identity Fraud Report ranks cryptocurrency as the single most-targeted industry for IDV fraud in 2024 at 9.5% of attempts, almost double the next sector.

What was the largest deepfake fraud loss on record?

The single largest publicly documented deepfake fraud loss remains Arup's US$25.6M Hong Kong incident in February 2024, confirmed by Hong Kong Police and attributed in May 2024 when Arup publicly acknowledged being the victim (CNN, May 2024). The worker authorized 15 wire transfers to 5 accounts during and after a deepfake video call populated entirely by synthetic "colleagues."

How accurately can humans spot a deepfake?

iProov's study reported in its Threat Intelligence Report 2025 found just 0.1% of participants could reliably distinguish real from AI-generated content. Microsoft's Digital Defense Report 2025 corroborates that AI-driven identity forgeries are "convincing enough to defeat selfie checks and liveness tests." Detection has to sit in the system, not with the user.

What does Gartner say about deepfakes in 2026?

Gartner's February 2024 analyst forecast projects that by 2026, 30% of enterprises will consider identity verification unreliable in isolation because of deepfakes. Gartner's September 2025 survey of 302 cybersecurity leaders found 62% had already experienced a deepfake attack in the past 12 months.

What does the FBI say about deepfakes and voice cloning?

The FBI's December 2024 PSA on generative-AI-facilitated financial fraud warns that voice-cloning from a few seconds of public audio is now trivial and that victims should build family pass-phrases and authenticate via a separate channel. The 2024 Internet Crime Report logged US$16.6 billion in total cybercrime losses in 2024, up 33% YoY, including US$2.77 billion in BEC losses across 21,442 incidents and US$13 million in 2025 reported losses from deepfake job-interview scams.

How are deepfakes affecting elections?

WEF's Global Risks Report 2024 ranked AI-driven misinformation and disinformation as the most severe short-term global risk over a two-year window, with almost 3 billion people voting across the 2024 cycle. Sumsub's election-cycle data shows country-level deepfake-incident surges of +303% (US), +1,625% (South Korea), and +1,550% (Indonesia) ahead of 2024 votes. Microsoft's Digital Defense Report 2025 attributes much of this to nation-state influence operations.

What is the difference between a deepfake and a face swap?

"Deepfake" is an umbrella term for synthetic media generated by AI to mimic a real person's face, voice, or likeness. A face swap is a specific technique where an AI model superimposes one person's face onto another person's head in a video, typically in real time. Voice clones are a deepfake modality that uses text-to-speech or voice-conversion models to mimic a target's voice. Native Virtual Camera attacks are a delivery mechanism, not a deepfake type, where a face-swap or pre-recorded synthetic stream is piped into the operating system as if it came from the real webcam.

What can organizations do to defend against deepfake fraud?

Five practical steps that the data supports. First, add an out-of-band call-back or pre-agreed passphrase for every wire transfer, credential reset, and NDA signature. Second, upgrade IDV to active liveness plus injection-attack detection. Third, add voice-deepfake detection to contact centers (Pindrop, Microsoft Azure AI Content Safety, or an equivalent). Fourth, adopt C2PA or Content Credentials for any official media you publish so unlabeled clones are automatically suspicious. Fifth, rehearse the attack. Stingrai's social engineering and red team engagements cover deepfake video-conference scenarios and controlled voice-clone drills against test accounts.

Where can I get the latest deepfake data?

The primary publishers to track are Sumsub (annual Identity Fraud Report), iProov (annual Threat Intelligence Report), Pindrop (annual Voice Intelligence and Security Report), Onfido and Entrust (annual Identity Fraud Report), Regula (biennial Global Identity Fraud Report and Deepfake Trends Survey), CrowdStrike (annual Global Threat Report), Microsoft (annual Digital Defense Report), Chainalysis (annual Crypto Crime Report), Europol (annual IOCTA), and FBI IC3 (annual Internet Crime Report). The Stingrai research blog will mirror these updates as they are published.

References

Sumsub. Identity Fraud Report 2024. November 2024. https://sumsub.com/fraud-report-2024/. Platform telemetry spanning more than 3 million fraud attempts in 2023 and 2024.

Sumsub. "Global Deepfake Incidents Surge Tenfold from 2022 to 2023" press release. January 2024. https://sumsub.com/newsroom/sumsub-research-global-deepfake-incidents-surge-tenfold-from-2022-to-2023/. Internal Sumsub deepfake-detection telemetry.

Sumsub. "Deepfake Cases Surge in Countries Holding 2024 Elections" press release. May 2024. https://sumsub.com/newsroom/deepfake-cases-surge-in-countries-holding-2024-elections-sumsub-research-shows/. Country-level deepfake incident YoY data.

Sumsub. Top Identity Fraud Trends 2026. Early 2026. https://sumsub.com/blog/top-new-identity-fraud-trends/. Annual trends summary citing 2024-2025 telemetry.

iProov. Threat Intelligence Report 2025: Remote Identity Under Attack. February 2025. https://www.iproov.com/reports/threat-intelligence-report-2025-remote-identity-attack. iSOC detection telemetry plus dark-web monitoring and red-team engagements.

iProov. Annual Threat Intelligence Report 2026 press release. February 2026. https://www.iproov.com/press/annual-identity-verification-threat-intelligence-report. 2025 iSOC telemetry.

Pindrop. 2025 Voice Intelligence and Security Report. June 2025. https://www.pindrop.com/research/report/voice-intelligence-security-report/. Analysis of 1.2B+ calls across Pindrop's contact-center customer base, 2024.

Onfido and Entrust. 2025 Identity Fraud Report. November 2024. https://onfido.com/landing/identity-fraud-report/. Identity-verification platform telemetry 2024.

CrowdStrike. 2025 Global Threat Report. February 2025. https://www.crowdstrike.com/en-us/press-releases/crowdstrike-releases-2025-global-threat-report/. Falcon telemetry covering 2024 H1 vs H2.

FBI Internet Crime Complaint Center. 2024 Internet Crime Report. April 2025. https://www.ic3.gov/AnnualReport/Reports/2024_IC3Report.pdf. US victim complaint data.

FBI IC3. Criminals Use Generative Artificial Intelligence to Facilitate Financial Fraud (PSA I-120324-PSA). December 2024. https://www.ic3.gov/PSA/2024/PSA241203. FBI advisory on generative-AI fraud.

Microsoft. Digital Defense Report 2025. October 2025. https://www.microsoft.com/en-us/security/security-insider/threat-landscape/microsoft-digital-defense-report-2025. Microsoft threat telemetry.

Europol. Internet Organised Crime Threat Assessment 2025 (IOCTA). June 2025. https://www.europol.europa.eu/cms/sites/default/files/documents/Steal-deal-repeat-IOCTA_2025.pdf. EU law-enforcement case corpus.

Regula Forensics. 2025 Identity Fraud Report. September 2025. https://regulaforensics.com/blog/2025-identity-fraud-by-numbers/. Global enterprise survey.

Regula Forensics. Deepfake Trends 2024. October 2024. https://regulaforensics.com/resources/deepfake-trends-2024-report/. Global enterprise survey.

World Economic Forum. Global Risks Report 2024 press release. January 2024. https://www.weforum.org/press/2024/01/global-risks-report-2024-press-release/. Annual risk perception survey.

Gartner. "Why CIOs Can't Ignore the Rising Tide of Deepfake Attacks." September 2025. https://www.gartner.com/en/newsroom/press-releases/2025-09-02-why-cios-cannot-ignore-the-rising-tide-of-deepfake-attacks. Gartner 2025 cybersecurity leader survey (n=302).

Gartner. "Gartner Predicts 30% of Enterprises Will Consider Identity Verification and Authentication Solutions Unreliable in Isolation Due to AI-Generated Deepfakes by 2026." February 2024. https://www.gartner.com/en/newsroom/press-releases/2024-02-01-gartner-predicts-30-percent-of-enterprises-will-consider-identity-verification-and-authentication-solutions-unreliable-in-isolation-due-to-deepfakes-by-2026.

Chainalysis. 2026 Crypto Crime Report: Scams. January 2026. https://www.chainalysis.com/blog/crypto-scams-2026/. On-chain scam-wallet data 2024-2025.

Internet Watch Foundation. AI-CSAM Update, July 2024. https://www.iwf.org.uk/media/q4zll2ya/iwf-ai-csam-report_public-july2024_final.pdf. IWF hotline case data.

CNN. "Arup revealed as victim of $25 million deepfake scam involving Hong Kong employee." May 16, 2024. https://www.cnn.com/2024/05/16/tech/arup-deepfake-scam-loss-hong-kong-intl-hnk. Public record of Arup disclosure.

CNN. "Finance worker pays out $25 million after video call with deepfake chief financial officer." February 4, 2024. https://www.cnn.com/2024/02/04/asia/deepfake-cfo-scam-hong-kong-intl-hnk. Hong Kong Police briefing coverage.

Fortune. "Ferrari exec foils deepfake attempt by asking the scammer a question only CEO Benedetto Vigna could answer." July 27, 2024. https://fortune.com/2024/07/27/ferrari-deepfake-attempt-scammer-security-question-ceo-benedetto-vigna-cybersecurity-ai/. Corporate disclosure and case study.

MIT Sloan Management Review. "How Ferrari Hit the Brakes on a Deepfake CEO." 2024. https://sloanreview.mit.edu/article/how-ferrari-hit-the-brakes-on-a-deepfake-ceo/. Case study.

OECD.AI Incident Database. Incident 2024-05-10-e24d: WPP Executives Targeted by Deepfake Scam Using AI Voice Cloning. May 2024. https://oecd.ai/en/incidents/2024-05-10-e24d. Incident record.

Pindrop. Fortune 500 Insurer Detects 97% of Deepfakes and Stops Synthetic Voice Attacks case study. https://www.pindrop.com/research/case-study/insurer-detects-deepfakes-synthetic-voice-attacks-pindrop-pulse/. Vendor-published benchmark.

This is the Stingrai research team's 2026 deepfake statistics reference. Stingrai, founded in 2021, is an offensive security firm running penetration testing, red team, and social engineering engagements for global customers. If you want to see how your wire-transfer workflow or contact center handles a deepfake attempt, our services page lists what we run and how to start a scoping call.