The defining cyber-threat headline of 2025 is Anthropic's GTG-1002 disclosure, published November 13, 2025: a Chinese state-sponsored group used Claude Code in an MCP-connected agentic framework to autonomously execute roughly 80 to 90 percent of tactical work across approximately 30 organizations spanning technology, finance, government, and chemical manufacturing, issuing thousands of requests, often several per second, with human operators confined to 4 to 6 critical decision points per campaign. IBM's 2025 Cost of a Data Breach Report measured the population-scale equivalent: attacker AI was used in 1 in 6 (16 percent) of breaches in 2025, with AI-generated phishing (37 percent of attacker-AI cases) and deepfake impersonation (35 percent) the dominant playbooks. Mandiant's M-Trends 2026 recorded the operational consequence: median time from initial access to secondary threat-group handoff collapsed to 22 seconds in 2025, down from more than 8 hours in 2022, with three new AI-aware malware families (PROMPTFLUX, PROMPTSTEAL, QUIETVAULT) querying LLMs at runtime, in some cases to evade detection.

XBow named the moment well in its blog post "The Chaos Phase: How AI is Transforming Cybersecurity Threats". XBow's argument: 2025 and 2026 are an unprecedented period in which attackers gain advantages faster than traditional defenses can adapt, organizations face a critical 24-month window to evolve, and the long-term advantage will not go to the side that hires more people but to the side that operationalizes AI for defense most effectively. XBow's prescription is direction-correct. Continuous validation, security chaos engineering, automation at scale, and AI-augmented defense are all things Stingrai sees working on real engagements.

This post is the Stingrai research team's perspective on what XBow's framing gets right, and where the data suggests a different trajectory than "chaos phase resolving to stability." Stingrai is a Toronto-headquartered offensive-security firm founded in 2021, with team certifications including OSCE3, OSCP, OSWE, OSED, and CREST CRT, 18 published CVEs across the team, and a 5.0/5.0 average across 19 Clutch reviews. We run live pentests and red-team engagements augmented by our internal AI agent Snipe, trained on more than 6,000 HackerOne disclosures. The post is in conversation with XBow, not against it. XBow has done genuinely impressive work, including reaching the top of HackerOne's US leaderboard in 90 days, publishing transparent benchmarks against frontier models, and raising US$120M in March 2026 at a US$1B+ valuation. We are writing because the next 24 months of buyer, regulator, and underwriter decisions will turn on whether the field correctly understands the shape of the gap, not just the size of it.

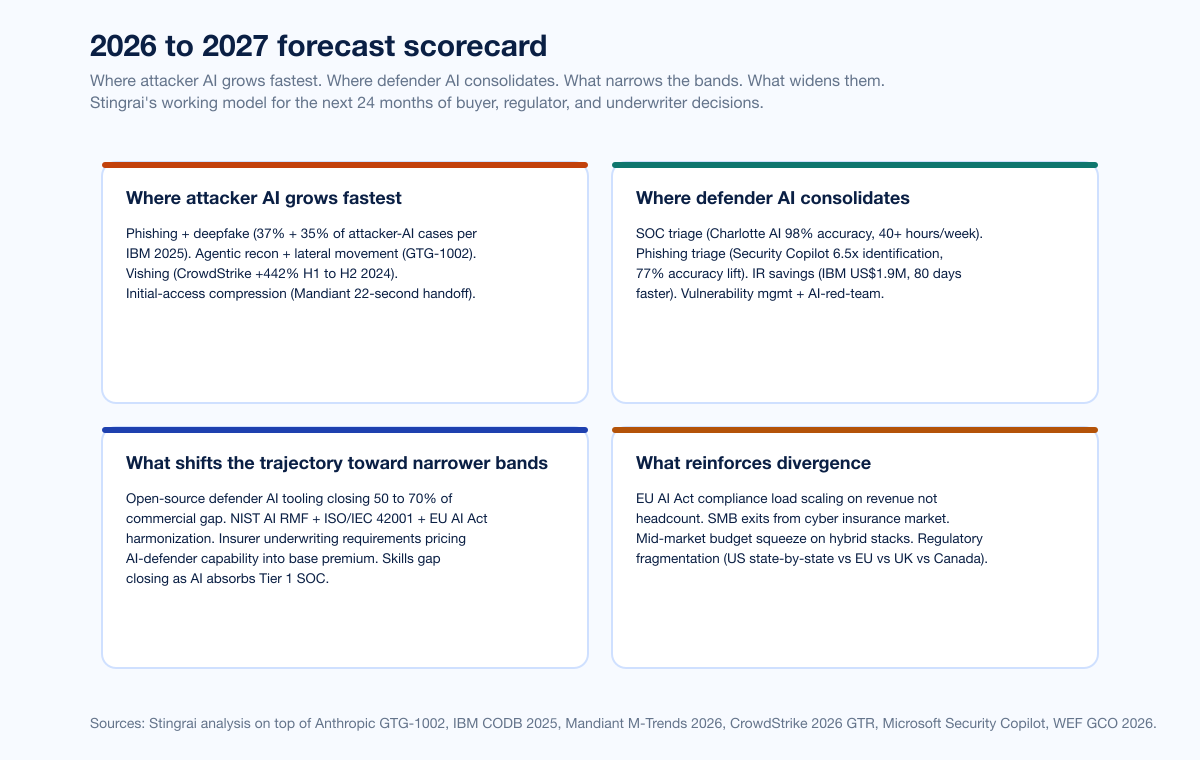

Stingrai's thesis: the chaos phase is real on the attacker side, but the defender side is not racing to a single stable equilibrium. The data shows divergence by organization size, sector, and geography, not convergence. The 2026 to 2027 outcome that matches the public evidence is uneven equilibrium across three bands: well-resourced enterprises with hybrid AI-defender stacks pulling ahead, mid-market struggling under adoption-plus-compliance load, and SMBs disproportionately exposed to AI-augmented attacks. Designing for the average misses the actual distribution.

TL;DR: 12 labeled claims

Anthropic GTG-1002 (November 13, 2025). First publicly documented AI-orchestrated cyber espionage campaign at scale. ~30 organizations targeted; 80 to 90 percent of tactical work autonomously executed by Claude Code; thousands of requests, often several per second; 4 to 6 critical human decision points per campaign; only "a handful" of targets actually compromised, and Anthropic noted Claude "frequently overstated findings" and "fabricated data" (Anthropic, November 2025).

IBM Cost of a Data Breach 2025. Attacker AI in 16 percent of breaches; AI-phishing 37 percent of attacker-AI cases; AI-deepfake 35 percent. Shadow AI added US$670K to average breach cost; 97 percent of organizations that experienced an AI-related incident lacked proper AI access controls (IBM, July 2025).

IBM defender-side AI economics. Organizations that used security AI and automation extensively saved nearly US$1.9M per breach and shortened the breach lifecycle by 80 days relative to peers (IBM, July 2025).

Mandiant M-Trends 2026. Median initial-access-to-secondary-handoff time 22 seconds in 2025 vs more than 8 hours in 2022. New AI-aware malware families: PROMPTFLUX (queries LLMs mid-execution), PROMPTSTEAL (also queries LLMs mid-execution), QUIETVAULT (credential stealer that hunts local AI CLI tools and runs predefined prompts). Mandiant's caveat: most successful 2025 intrusions still stem from "fundamental human and systemic failures," not direct AI causation (Mandiant M-Trends 2026).

CrowdStrike 2025 + 2026 GTRs. AI-generated phishing email click-through rate 54 percent vs 12 percent human-written; 89 percent year-over-year rise in AI-enabled adversary attacks 2024 to 2025; adversaries exploited legitimate GenAI tools at 90+ organizations via prompt injection (CrowdStrike 2026 GTR findings).

CrowdStrike Charlotte AI. 98 percent triage accuracy; 40+ analyst hours saved per week (CrowdStrike, February 2025).

Microsoft Security Copilot Phishing Triage Agent. 6.5x more malicious emails identified; 77 percent better verdict accuracy; 78 percent faster triage; one customer saved 200 hours per month (Microsoft Tech Community).

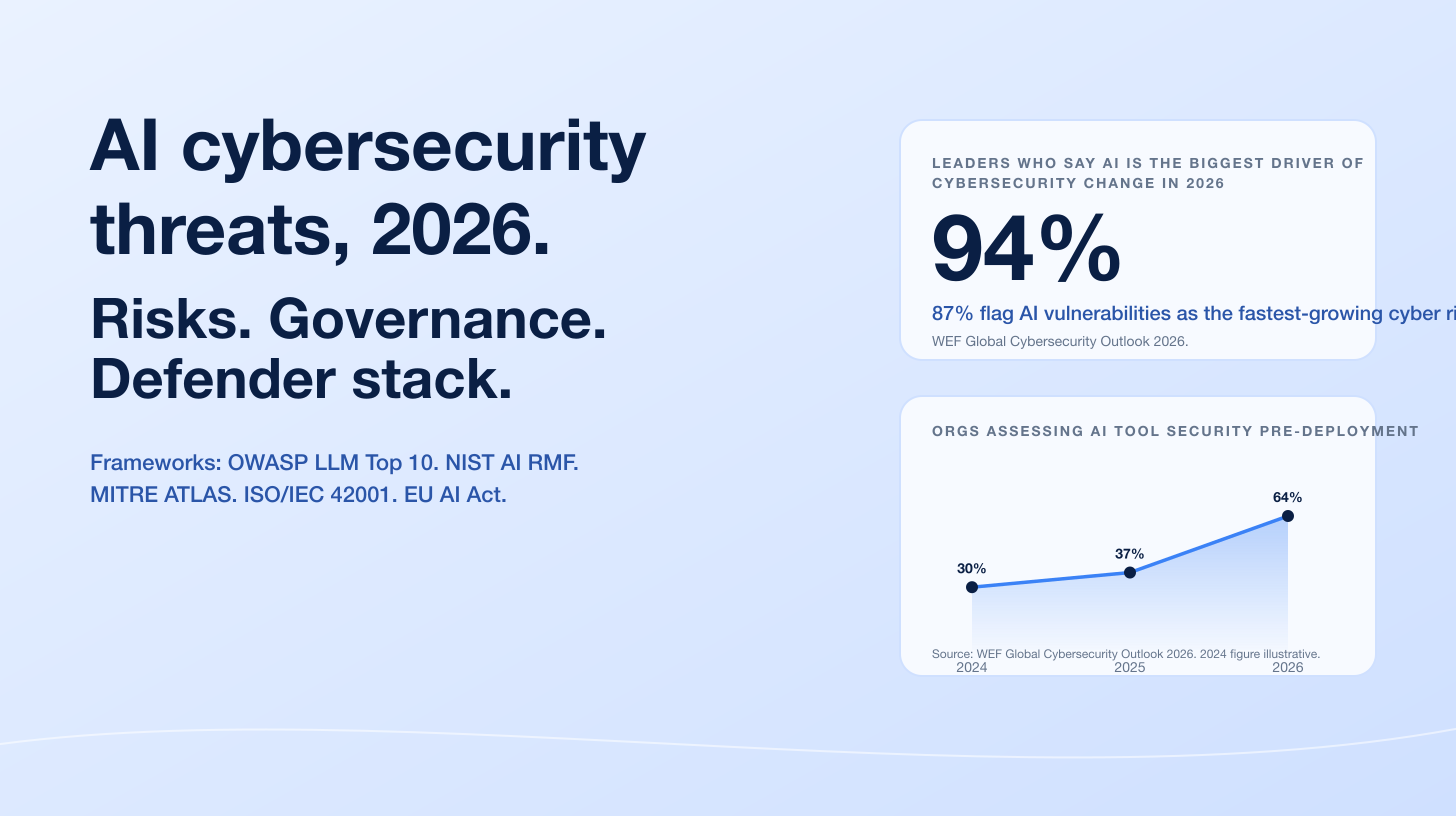

WEF Global Cybersecurity Outlook 2026. 94 percent of leaders agree AI is the single most significant driver of cybersecurity change; 87 percent flag AI vulnerabilities as the fastest-growing cyber risk; pre-deployment AI-tool security assessment nearly doubled year over year, from 37 percent to 64 percent (WEF GCO 2026).

WEF org-size resilience gap. 91 percent of the largest enterprises adjusted cybersecurity posture due to geopolitical factors; only 59 percent of small and medium businesses are doing the same. 46 percent of small organizations report insufficient cyber expertise vs 29 percent of large organizations (WEF GCO 2026).

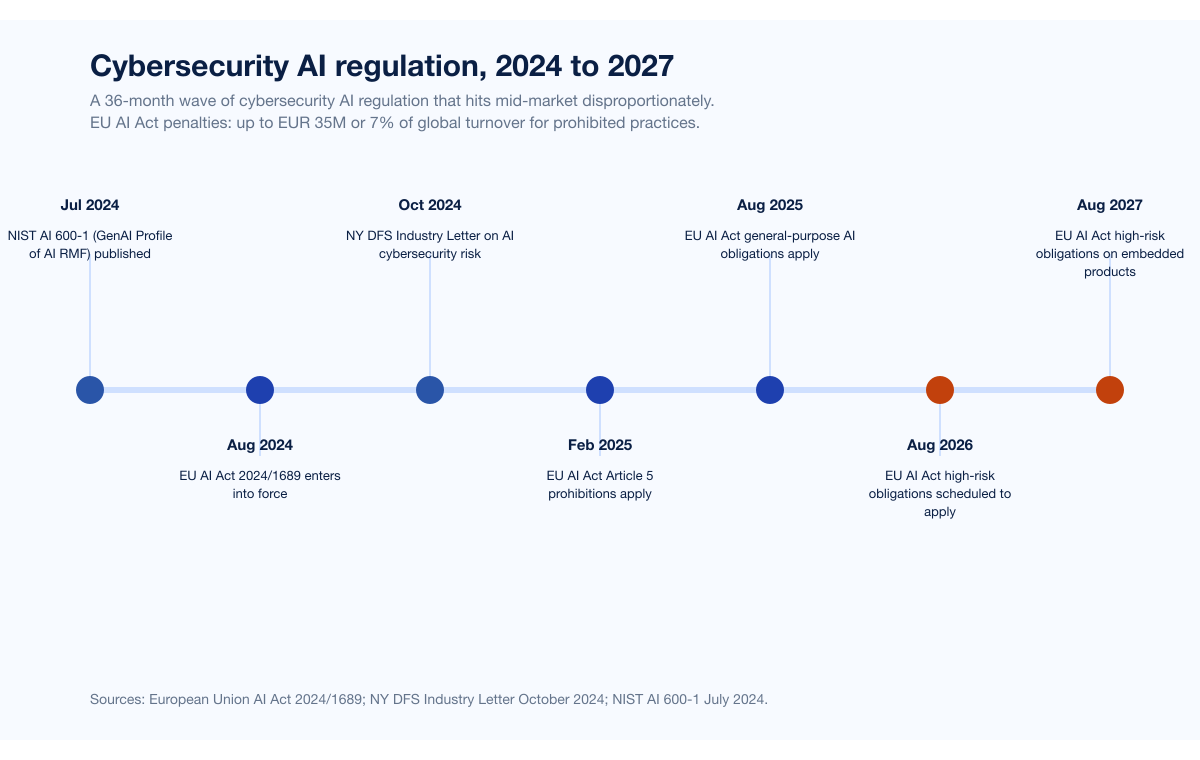

EU AI Act 2024/1689. Entered into force 1 August 2024; high-risk-system obligations scheduled to apply from 2 August 2026 (extended to 2 August 2027 for AI systems embedded in regulated products). Penalties up to EUR 35M or 7 percent of global annual turnover for prohibited practices, up to EUR 15M or 3 percent for high-risk-system non-compliance (European Union, 2024). The European Commission's November 2025 "Digital Omnibus on AI" proposes deferring high-risk compliance to 2 December 2027; the original timeline applies if the omnibus is not formally adopted in time.

NY DFS October 16, 2024 Industry Letter. Cybersecurity Risks Arising from AI: AI-enabled social engineering and deepfakes flagged as one of the most significant threats to the financial-services sector; covered entities directed to deploy authentication factors that withstand AI-manipulated deepfakes (digital certificates, physical security keys) instead of SMS, voice, and video, and to train personnel on secure AI development and on prompt-query hygiene (NY DFS, October 2024).

HackerOne 9th HPSR + Bugcrowd 2026. 70 percent of bug-bounty researchers use AI tools; 82 percent on Bugcrowd. Customer programs with AI in scope rose 270 percent year over year to 1,121 programs; valid prompt-injection reports rose 540 percent; 58 percent of researchers say AI misses business logic and chained exploits and only 12 percent believe AI could replace them (HackerOne, October 2025; Bugcrowd, January 2026).

Key takeaways

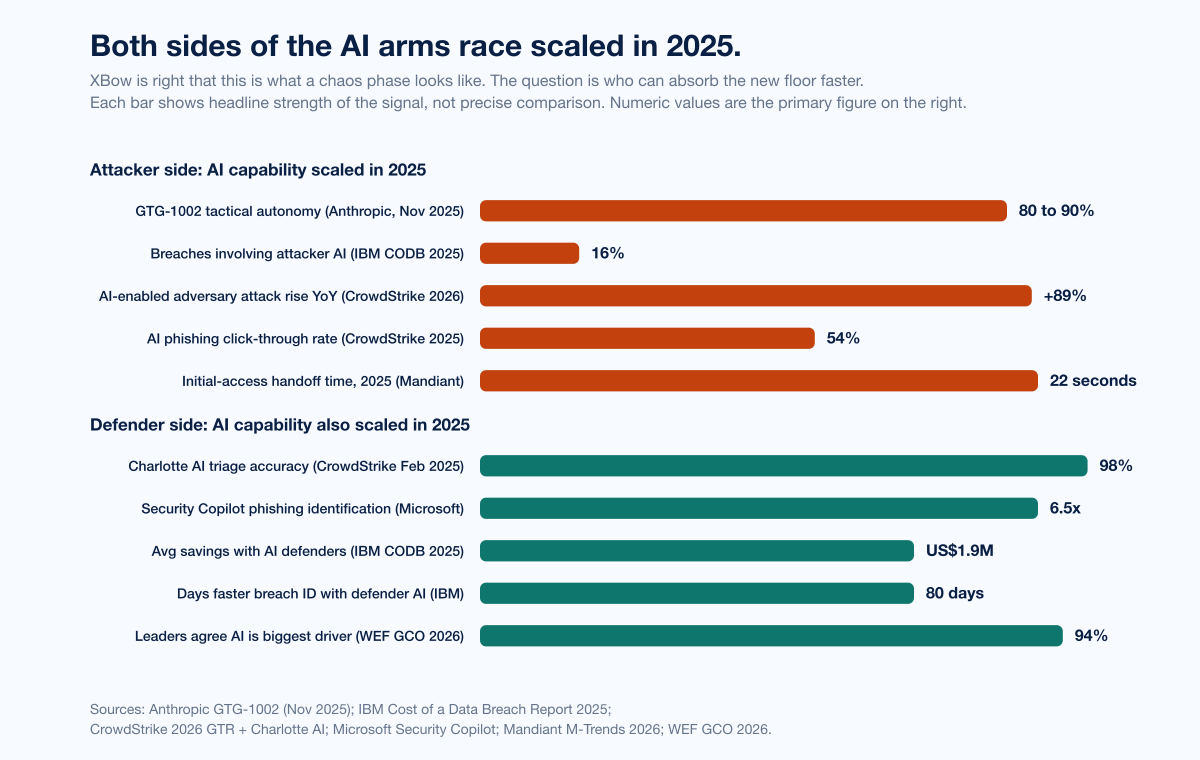

Chaos phase on the attacker side: confirmed. Anthropic GTG-1002, IBM 16 percent, Mandiant 22-second handoff, CrowdStrike 89 percent year-over-year AI-enabled attack rise, and named AI-aware malware families together establish that the attacker side has scaled AI capability into operational reality during 2025.

Defender-side AI scaled too, but unevenly. IBM's US$1.9M savings, CrowdStrike Charlotte AI 98 percent accuracy, and Microsoft Security Copilot 6.5x phishing identification show that well-resourced defenders are pulling AI tooling into production. WEF GCO 2026 data on posture adjustment and cyber expertise by organization size indicates that adoption is concentrated in the largest enterprises; mid-market and SMB are visibly behind.

The "phase ending in stability" framing oversimplifies the trajectory. XBow's framing implies a single chaos phase resolving to a single new stable equilibrium. The org-size, regulatory, and underwriting data points toward a multi-band steady state, not a single one.

Regulation does not stabilize the chaos. EU AI Act high-risk obligations (currently 2 August 2026, possibly deferred to 2 December 2027), NY DFS AI cybersecurity expectations, and SEC AI disclosure dynamics layer compliance complexity onto the AI-defender adoption curve. Mid-market organizations bear that load disproportionately because they cannot amortize compliance staff across the same revenue base as the largest enterprises.

Underwriter response is fragmenting too. Cyber insurers have already moved from "do you have MFA and EDR" to "do you assess AI-tool security pre-deployment." The carriers will further fragment the market by pricing AI-defender capability into renewals.

Stingrai's forecast: uneven equilibrium across three bands. Not "chaos resolving to stability" but divergence at different speeds: well-resourced enterprises pulling ahead, mid-market struggling, SMBs disproportionately exposed. Buyers, researchers, and underwriters who plan for uneven equilibrium will outperform those who plan for the average.

Methodology

Date cutoff: April 29, 2026. Lead data is full-year 2024 or full-year 2025 telemetry where a primary publisher has released it; 2026 figures are labeled as forecasts or preliminary numbers. Statistics that could not be reached via a named primary source on at least one verification pass were dropped rather than estimated. Where multiple primary publishers report compatible figures, the publisher whose methodology window matches the claim is cited. Secondary news aggregators are cited only where they constitute the public record of a corporate announcement, milestone, or disclosure.

The post engages with XBow's argument as published in the XBow blog, in particular "The Chaos Phase: How AI is Transforming Cybersecurity Threats", and in the follow-on post on Security in 2026. XBow is treated as a respected peer in the offensive-security category. No hit-piece framing applies. Where Stingrai's perspective differs from XBow's framing, it does so with named primary data, not vendor-vs-vendor mud.

Figure 1: Attacker-side and defender-side AI capability both scaled in 2025. The chaos phase is real on both sides; the question is who can absorb the new floor faster. Sources: Anthropic GTG-1002; IBM Cost of a Data Breach Report 2025; Mandiant M-Trends 2026; CrowdStrike 2026 GTR; Microsoft Security Copilot.

Where XBow is right

XBow's framing is data-anchored on three load-bearing claims, and the public evidence is clearly with them on each.

1. Attacker-side AI moved from research to operations in 2025

GTG-1002 is the single most consequential data point of the 2025 cyber year. An end-to-end agentic operation, instrumented through Claude Code with MCP-connected sub-agents, executed approximately 80 to 90 percent of tactical work across approximately 30 organizations, with humans confined to 4 to 6 critical decision points. Anthropic detected and disrupted the campaign within roughly 10 days of identification in mid-September 2025 (Anthropic, November 13, 2025). The qualitative footnote that Stingrai keeps coming back to is Anthropic's own observation that Claude "frequently overstated findings" and "fabricated data," with only a handful of approximately 30 targets actually compromised. The campaign proved end-to-end agentic feasibility; it also proved validation gaps remain non-trivial.

The population-level corollary is in IBM's 2025 numbers: attacker AI in 16 percent of breaches, AI-phishing 37 percent of attacker-AI cases, AI-deepfake 35 percent (IBM, July 2025). CrowdStrike's 2026 Global Threat Report adds the rate-of-change: an 89 percent year-over-year rise in AI-enabled adversary attacks (CrowdStrike, February 2026). Mandiant's 22-second median initial-access handoff and the appearance of PROMPTFLUX, PROMPTSTEAL, and QUIETVAULT in real intrusion data are the operational signal that AI-aware tooling has crossed from research to live operations (Mandiant M-Trends 2026).
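IBM reports the playbook shares as fractions of the attacker-AI subset, not of all breaches, so the population-level share needs one multiplication. A minimal sketch, assuming (as stated above) that the 37 and 35 percent figures are shares of the 16 percent of breaches that involved attacker AI; the constant and function names are illustrative only:

```python
# Convert IBM's nested percentages (share of attacker-AI cases) into shares of all breaches.
# Assumption: 37% and 35% are fractions of the 16% attacker-AI subset, as stated in the text.
ATTACKER_AI_SHARE = 0.16
PLAYBOOK_SHARE_OF_AI_CASES = {
    "ai_phishing": 0.37,
    "deepfake_impersonation": 0.35,
}


def share_of_all_breaches(playbook: str) -> float:
    """Fraction of all breaches in the study that involved the given AI playbook."""
    return ATTACKER_AI_SHARE * PLAYBOOK_SHARE_OF_AI_CASES[playbook]


for name in PLAYBOOK_SHARE_OF_AI_CASES:
    print(f"{name}: {share_of_all_breaches(name):.1%} of all breaches")
```

On these inputs, roughly 6 percent of all breaches involved AI-generated phishing and a similar share involved deepfake impersonation. The two shares are not summed here, since the categories are not necessarily mutually exclusive.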

XBow is right: this is what a chaos phase looks like on the attacker side.

2. Defender-side AI is genuinely catching up at the top

XBow's prescription that defenders must operationalize AI is data-supported. CrowdStrike's Charlotte AI Detection Triage, generally available in February 2025, triages detections at 98 percent accuracy and saves 40+ hours of analyst work per week. Microsoft's Security Copilot Phishing Triage Agent identifies 6.5x more malicious emails, lifts verdict accuracy 77 percent, and cuts triage time 78 percent in independent randomized controlled studies, with one customer saving 200 hours per month (Microsoft Tech Community, November 2025).

The single number that sets the stakes for buyers is from IBM: organizations that used security AI and automation extensively saved nearly US$1.9M per breach and shortened the breach lifecycle by 80 days relative to peers (IBM, July 2025). When AI defender economics produce a difference of that size, capable enterprises will deploy. We see them do it on engagements.

3. Continuous validation and security chaos engineering are the right posture

XBow's prescriptive framing of continuous validation, security chaos engineering, and stress-tested defenses is direction-correct. The attacker timing data backs this up: when the median initial-access-to-handoff time is 22 seconds, snapshot-based assurance ("we ran the annual pentest in March") is no longer load-bearing for the threat surface. Continuous, automated, AI-augmented validation, run between scoped human-led engagements, is the only posture that matches the cycle time.
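A back-of-envelope calculation helps put the 22-second figure in proportion. The sketch below treats Mandiant's 2022 figure ("more than 8 hours") as exactly 8 hours, so the ratio is a floor. The comparison with an annual test cycle is our illustrative assumption, not a Mandiant metric:

```python
# Back-of-envelope scale of the handoff-time compression reported in Mandiant M-Trends 2026.
# The 2022 median was "more than 8 hours", so using exactly 8 hours gives a lower bound.
SECONDS_PER_HOUR = 60 * 60
HANDOFF_2022_S = 8 * SECONDS_PER_HOUR  # 28,800 s (lower bound)
HANDOFF_2025_S = 22

# How many times shorter the 2025 median window is (at least).
compression = HANDOFF_2022_S / HANDOFF_2025_S

# Illustrative only: how many 22-second windows fit between two annual point-in-time tests.
SECONDS_PER_YEAR = 365 * 24 * SECONDS_PER_HOUR
windows_per_year = SECONDS_PER_YEAR // HANDOFF_2025_S

print(f"handoff window at least {compression:,.0f}x shorter than in 2022")
print(f"{windows_per_year:,} median handoff windows between annual tests")
```

The ratio comes out at roughly 1,300x. That gap is why a once-a-year snapshot cannot keep pace with the attacker's cycle time.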

Stingrai's PTaaS offering is itself a continuous-validation product, with named senior pentesters running ongoing scope coverage rather than a once-a-year point-in-time test. We agree with XBow on this prescription. The point of disagreement is not the prescription; it is who will actually execute on it across the population.

Where the chaos-phase framing leaves a gap

XBow's framing is a single curve: chaos phase, attacker advantage, then defenders catch up, then equilibrium. The public evidence on defender adoption shows a different shape.

The org-size cliff

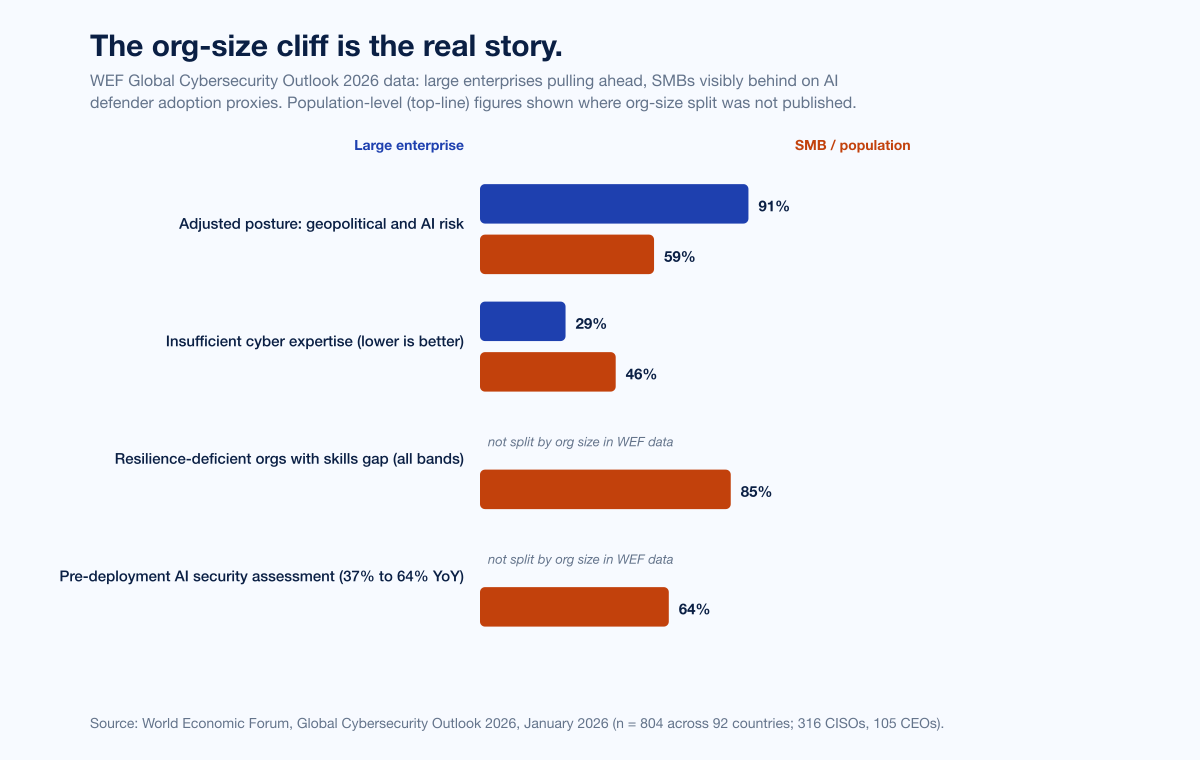

The cleanest evidence of divergence is from the WEF Global Cybersecurity Outlook 2026, drawing on 804 qualified respondents across 92 countries including 316 CISOs and 105 CEOs. Two figures from that report frame the divergence sharply:

91 percent of the largest enterprises adjusted their cybersecurity posture due to geopolitical factors. 59 percent of SMB organizations are doing the same. Posture-adjustment is a leading indicator of AI-defender investment because the same procurement cycles deliver the AI tooling.

46 percent of small organizations report insufficient cyber expertise. 29 percent of large organizations report the same. The gap on expertise widens the gap on AI tooling because AI defender tools require humans who can integrate, tune, and validate them.

Layer on a third number from the WEF dataset: 85 percent of resilience-deficient organizations also have a parallel critical cybersecurity skills gap (WEF Global Cybersecurity Outlook 2026). The skills gap is not orthogonal to the AI adoption gap; the two reinforce each other. Stingrai's companion post on the cybersecurity skills gap tracks this in detail across ISC2, ISACA, NIST CyberSeek, BLS, Fortinet, and Splunk data. The shorthand: the orgs that need AI-defender tooling most are the orgs least equipped to deploy it.

The XBow framing assumes the population behaves uniformly; the WEF data says it does not.

The regulatory load is asymmetric

The next 24 months of cybersecurity AI regulation hit mid-market organizations harder than they hit either Fortune 500 enterprises or sub-50-employee SMBs.

EU AI Act 2024/1689 entered into force 1 August 2024. Article 5 prohibitions applied from 2 February 2025; general-purpose AI obligations from 2 August 2025. High-risk-system obligations are scheduled to apply from 2 August 2026, with the extended deadline of 2 August 2027 for AI systems embedded in regulated products. The European Commission's "Digital Omnibus on AI," published 19 November 2025, has proposed deferring high-risk compliance to 2 December 2027; if the omnibus is not formally adopted in time, the original timeline applies (European Union; DLA Piper).

Penalty surface. Up to EUR 35M or 7 percent of global annual turnover, whichever is higher, for prohibited practices (Article 99); up to EUR 15M or 3 percent for high-risk-system non-compliance. For an EU-touching mid-market company with EUR 500M of revenue, 7 percent is EUR 35M, the same as the fixed cap; above that revenue, the percentage sets the ceiling. That is not absorbable.
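Under Article 99, the fixed amount and the turnover percentage are alternative caps. For undertakings the higher of the two applies; for SMEs and start-ups the lower one applies. A minimal sketch of the two tiers cited above. It computes statutory ceilings only, not likely fines, and the tier names and function are illustrative:

```python
# EU AI Act Article 99 fine ceilings for the two tiers discussed above.
# Each tier: (fixed cap in EUR, percent of total worldwide annual turnover).
FINE_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_noncompliance": (15_000_000, 3),
}


def max_fine_eur(turnover_eur: int, tier: str, is_sme: bool = False) -> int:
    """Upper bound of the administrative fine for a tier, not a prediction of the actual fine."""
    fixed_cap, pct = FINE_TIERS[tier]
    turnover_cap = turnover_eur * pct // 100  # integer math avoids float rounding
    # Undertakings face whichever cap is higher; SMEs and start-ups whichever is lower.
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)


print(max_fine_eur(500_000_000, "prohibited_practice"))      # 35000000
print(max_fine_eur(500_000_000, "high_risk_noncompliance"))  # 15000000
print(max_fine_eur(2_000_000_000, "prohibited_practice"))    # 140000000
```

At EUR 500M of turnover the two prohibited-practice caps coincide at EUR 35M. Beyond that point the percentage governs, so the ceiling grows with revenue.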

NY DFS Industry Letter, October 16, 2024. Covered entities (the financial-services firms regulated by NY DFS, arguably the most influential state-level financial-services regulator in the US) were directed to address AI-enabled social engineering, deepfakes, AI-enhanced cyberattacks, exposure of nonpublic information, and third-party AI risks, with specific guidance to use authentication that withstands AI-manipulated deepfakes (digital certificates or physical security keys rather than SMS, voice, or video) (NY DFS).

NIST AI 600-1 (Generative AI Profile of the AI RMF), published 26 July 2024, codifies risk-management actions across governance, content provenance, pre-deployment testing, and incident disclosure (NIST).

OWASP LLM Top 10 v2025 ranks Prompt Injection at LLM01 (OWASP). MITRE ATLAS's January 2026 update reflects 14 new agent-related techniques added during 2025, plus three case studies on MCP-related compromises (MITRE ATLAS). These are voluntary standards; they become de facto mandatory the moment a regulator or insurer cites them.

A US-listed Fortune 500 enterprise has compliance counsel, dedicated AI-governance staff, and the budget to embed legal review across the AI-defender stack. A 200-person fintech in the EU does not. The same regulation that reads as "manageable" at scale reads as "impossible without doubling the GRC team" at mid-market scale. The chaos-phase framing assumes the regulatory load is something every organization absorbs equally; it does not.

Figure 2: WEF Global Cybersecurity Outlook 2026 data on the org-size resilience gap. Larger enterprises are pulling ahead on posture adjustment and AI-defender adoption; SMB and mid-market are visibly behind. Source: WEF GCO 2026.

Cyber insurance fragments the population further

Cyber insurers have already moved AI capability from "nice to have" into underwriting. Coalition's 2026 Cyber Claims Report, drawing on more than 100,000 policy years across the US, Canada, UK, Australia, and Germany, recorded an 86 percent refusal rate on ransomware payments in 2025 (record high) even as average initial ransom demand surged 47 percent year-over-year to more than US$1M. At-Bay's 2026 InsurSec Report measured a 7 percent year-over-year rise in claim frequency; ransomware severity reached US$508K (+16 percent year-over-year); remote-access services were the entry vector for 87 percent of ransomware claims, with VPN compromise alone driving 73 percent of identified-vector intrusions.

Underwriting already enforces baseline controls: Marsh McLennan's 2024 cyber market data shows 41 percent of cyber applications denied on first submission, with missing MFA and inadequate endpoint protection the top reasons. Stingrai's companion post on cyber insurance statistics tracks this in detail. The 2026 trend is unmistakable: AI-defender capability (specifically pre-deployment AI security assessment, AI-aware EDR, and SOC AI tooling) is moving into the underwriting questionnaire as a baseline expectation. Carriers that price AI-defender adoption into renewals will further bifurcate the market: well-prepared enterprises will see flat or lower renewals, mid-market organizations will see rate increases, and the least-prepared SMBs will simply not be offered coverage.

WEF's GCO 2026 surveyed underwriters as part of its 804-respondent dataset. The carriers' message is consistent: AI risk is being priced into renewals, and the price differential between best-in-class and median-buyer is widening.

Stingrai's forecast: uneven equilibrium across three bands

The model that fits the public data is not one curve; it is three. We expect the next 24 months to deliver divergent outcomes by organization band. Each band has its own attacker exposure profile, its own defender adoption trajectory, and its own regulatory and underwriting load.

Band 1: well-resourced enterprises with hybrid AI-defender stacks

The largest enterprises and late-stage scale-ups have the resources, the talent density, and the procurement cycles to operationalize AI-defender tooling at speed. WEF: 91 percent of these organizations adjusted their cybersecurity posture due to geopolitical factors; only 29 percent report insufficient cyber expertise. CrowdStrike, Microsoft, and Google Cloud SOC AI tooling moved into production at this band first, and IBM's US$1.9M and 80-day savings figures are concentrated in this segment.

The Band 1 organization in 2026 to 2027 looks like this: AI tooling integrated across SOC, IR, vulnerability management, and identity layers; dedicated AI-governance and AI-red-team functions; named regulatory counsel for the EU AI Act and NY DFS expectations; insurance renewal at flat or down rates; pentest-and-validate cadence is continuous, with hybrid AI-and-human pentesting (XBow-class for continuous coverage; Stingrai-class for scoped depth) inside the same procurement.

These organizations are absorbing the chaos. They are not chaotic any more.

Band 2: mid-market struggling under adoption-plus-compliance load

Mid-market organizations (roughly US$100M to US$2B in revenue, 500 to 5,000 employees) face the worst of three squeezes simultaneously: attacker pressure that does not discriminate by org size, defender-AI adoption costs that scale poorly per-seat, and regulatory expectations that scale on revenue rather than employee count. WEF data shows mid-market organizations sitting between the 91 percent (Band 1) and 59 percent (SMB) adjustment rates, but their compliance load is closer to Band 1's.

The Band 2 organization in 2026 to 2027 looks like this: AI tooling adopted in spots (typically email security and EDR first, SOC and IR later); AI-governance is a part-time CISO responsibility, not a dedicated team; EU AI Act and NY DFS compliance is bolted onto existing GRC capacity; insurance renewals are getting tighter and conditional on AI-defender investments; pentest-and-validate cadence is a mix of continuous (where they can afford it) and annual point-in-time. Stingrai's PTaaS engagements are increasingly weighted to this band: scoped, senior-pentester depth, augmented by AI tooling, framed for boards that need real evidence of resilience.

These organizations are not chaotic; they are stretched. The risk is that "stretched" tips into "exposed" if regulatory deadlines compress faster than AI-defender adoption.

Band 3: SMBs disproportionately exposed

Sub-500-employee SMBs face the same attacker capability pressure as Band 1 enterprises, with materially less capacity to absorb defender-AI cost or regulatory load. WEF: only 59 percent of SMB organizations have factored geopolitically motivated attacks into risk strategy; 46 percent report insufficient cyber expertise; the 85 percent skills-gap-resilience overlap concentrates here.

The Band 3 organization in 2026 to 2027 looks like this: AI defender tooling deployed only as part of bundled MSP / MDR services, with the buyer essentially outsourcing the integration question; AI-governance is whatever the MSP says it is; EU AI Act and NY DFS compliance is contractual offload to vendors; insurance is increasingly a binary (insured or uninsurable) rather than a graduated renewal; pentest-and-validate cadence is annual at best, automated-only at worst.

These organizations bear the brunt of the chaos phase because they do not have the resources to absorb it. They are not catching up. They are going to need different solutions: bundled, opinionated, MSP-integrated AI-defender stacks that absorb the integration load on their behalf.

Figure 3: Stingrai's forecast that the chaos phase resolves not to a single equilibrium but to three bands diverging at different speeds. Each band has its own attacker exposure profile, defender adoption trajectory, and regulatory load. Source: Stingrai analysis on top of WEF GCO 2026, IBM CODB 2025, Coalition 2026 Cyber Claims Report, and the EU AI Act timeline.

What changes the trajectory

If the three-band uneven-equilibrium model is the base case, four levers can change the shape of the distribution.

1. Open-source defender-AI tooling

If AI-defender capability remains licensed and bundled into Microsoft, Google, CrowdStrike, and Palo Alto Networks SKUs, the per-seat cost makes Band 2 and Band 3 adoption slow. If high-quality open-source AI-defender tooling proliferates (think Wazuh- or Suricata-equivalent with AI-augmented detection), the floor rises faster across Band 2 and Band 3. The Microsoft Security Copilot 6.5x phishing identification figure and CrowdStrike Charlotte AI 98 percent triage accuracy figure are commercial benchmarks; we expect open-source equivalents to close 50 to 70 percent of that gap within 24 months.

2. Standards convergence

Three standards stacks (NIST AI RMF + AI 600-1, ISO/IEC 42001:2023, and the EU AI Act) are largely compatible at the principle level but differ at the assessment level. If they harmonize (or if the EU AI Act omnibus deferral lands), mid-market compliance load is materially lower. If they fragment further, mid-market load is materially higher. The next 12 months are the deciding window.

3. Insurer underwriting requirements

Cyber insurers have already moved AI-defender capability into renewal questionnaires. If the carriers price AI-defender capability into base premiums (the way they priced MFA in 2022 to 2024), Band 2 organizations get a price-driven push toward AI tooling. If carriers exit the SMB segment (Band 3), the bottom of the market becomes uninsurable; if they stay and price aggressively, the bottom of the market becomes coverage-gated by AI-defender adoption.

4. Skills gap closing

ISC2's 4.8 million workforce gap and the 85 percent WEF resilience-skills overlap are the binding constraint on defender-AI deployment. Splunk's 2025 State of Security found 33 percent of teams plan to fill skills gaps with AI and automation themselves; Gartner projects more than 50 percent of SOC Tier 1 analyst responsibilities will be handled by AI by 2028 (Gartner). If AI-defender tooling closes the skills gap by absorbing Tier 1 work, the bands narrow. If it does not, the bands widen.

Figure 4: The 2024 to 2027 cybersecurity AI regulatory load that hits mid-market disproportionately. Sources: European Union AI Act; NY DFS October 2024 Industry Letter; NIST AI 600-1; OWASP LLM Top 10 v2025; MITRE ATLAS.

Implications for security buyers

The right buyer-side response to the three-band model is not "maximize AI-defender investment" but "honestly identify which band you are in and build for that band's constraints."
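A rule-of-thumb classifier built from the band descriptions in this post is one way to make the self-identification step concrete. This is a hypothetical sketch. The cut-offs are the post's approximate mid-market range (roughly US$100M to US$2B revenue, 500 to 5,000 employees). The tie-break rule is our assumption, not a Stingrai or WEF methodology:

```python
from enum import Enum


class Band(Enum):
    WELL_RESOURCED = 1  # Band 1: largest enterprises and late-stage scale-ups
    MID_MARKET = 2      # Band 2: adoption-plus-compliance squeeze
    SMB = 3             # Band 3: disproportionately exposed


# Approximate mid-market range from the Band 2 description (hypothetical cut-offs).
MID_MIN_REVENUE_USD = 100_000_000
MID_MAX_REVENUE_USD = 2_000_000_000
MID_MIN_EMPLOYEES = 500
MID_MAX_EMPLOYEES = 5_000


def classify(revenue_usd: int, employees: int) -> Band:
    """Assumed tie-break: whichever signal points to the larger band wins."""
    if revenue_usd > MID_MAX_REVENUE_USD or employees > MID_MAX_EMPLOYEES:
        return Band.WELL_RESOURCED
    if revenue_usd >= MID_MIN_REVENUE_USD or employees >= MID_MIN_EMPLOYEES:
        return Band.MID_MARKET
    return Band.SMB


print(classify(150_000_000, 200))  # Band.MID_MARKET: high revenue, small headcount
```

Letting the larger signal win reflects the Band 2 observation that regulatory expectations scale on revenue rather than employee count. Under this rule, a lean company with mid-market revenue inherits mid-market compliance load even when its headcount looks like an SMB's.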

If you are Band 1

Operationalize AI defender tooling across the SOC, IR, vulnerability management, identity, and pentest layers. Stand up an AI red team and AI-governance function. Run continuous validation against frontier-model attacker scenarios (OWASP LLM Top 10 v2025, MITRE ATLAS January 2026 update). Treat XBow-class continuous AI pentest as a baseline expectation, layered with Stingrai-class human-led depth on hard categories. At renewal, demand AI-defender adoption discounts from your carrier; you are subsidizing the population's mean and you should be priced for it.

If you are Band 2

Do not chase the Band 1 stack. You will burn budget you do not have. Pick the two highest-ROI AI-defender tools (typically email security and SOC triage) and operationalize them deeply before adding more. Get your AI-governance posture documented (NIST AI RMF + EU AI Act applicability + NY DFS expectations as relevant) and treat it as a renewal-cycle differentiator. Pentest-and-validate on a hybrid AI-and-human cadence; Stingrai's PTaaS is built for this band. Insurance: be ready to demonstrate AI-defender adoption when the renewal questionnaire arrives; the carriers are coming for this fast.

If you are Band 3

Outsource the integration question. Pick an MSP / MDR partner whose AI-defender stack is bundled, opinionated, and contractually carries the integration load. Do not try to build the stack yourself; you will lose. Renew insurance early and accept that coverage may become harder over the next 24 months. Run an annual scoped pentest with a senior pentester (not just an automated scan) on the systems that hold your most sensitive data; Stingrai's web application penetration testing and network penetration testing services are scoped to fit Band 3 budgets when the alternative is no human review at all.

Stingrai's role in an uneven-equilibrium market

The Band 1 / Band 2 / Band 3 model is not a marketing frame for Stingrai. It is the operating reality of who we run engagements for. Our customer base spans all three bands, weighted toward Band 2.

Stingrai's hybrid AI-augmented PTaaS posture (see our best PTaaS providers 2026 ranking, where we benchmark Stingrai against the named US, Canadian, and global PTaaS providers) is built specifically for organizations that need depth on hard categories without the Band 1 budget. Snipe, our internal AI agent trained on more than 6,000 HackerOne disclosures, runs the AI-led recon and known-class detection; senior pentesters keep ownership of business-logic discovery, exploit chaining, impact framing, and remediation guidance. The mix is roughly 30 to 40 percent AI-led and 60 to 70 percent human-led across our typical web-app and PTaaS engagements, calibrated to scope and surface area.

The 2026 to 2027 period is going to be a buyer's market in which procurement gets sharper. The questions we expect to see more often:

"Show me the engagement where AI handed your senior pentester a chained business-logic finding that no autonomous agent would have shipped on its own."

"What AI tooling do you use, what guardrails apply, and how do you suppress false positives?"

"What is your AI-governance posture (OWASP LLM Top 10 v2025 awareness, MITRE ATLAS coverage, EU AI Act applicability)?"

"How many published CVEs has your team produced in the last 24 months?"

A 2026 buyer in any of the three bands should expect named senior pentesters, public CVE track record (Stingrai's team is at 18, with Ivan Spiridonov 10, Moaaz Taha 5, Victor Villar 3), and transparent AI tooling. The chaos phase does not change the buyer's checklist; it raises the floor on it.

Figure 5: Stingrai's scorecard for the next 24 months: where attacker AI grows fastest, where defender AI consolidates, what shifts the trajectory toward narrower bands, and what reinforces divergence. Sources: Stingrai analysis on top of Anthropic GTG-1002, IBM CODB 2025, Mandiant M-Trends 2026, WEF GCO 2026.

Frequently asked questions

Is XBow's "chaos phase" framing right?

Partly. XBow's named anchor data is solid: Anthropic's GTG-1002 disclosure (80 to 90 percent of tactical work autonomously executed across approximately 30 organizations), IBM's measurement of attacker AI in 1 in 6 (16 percent) of 2025 breaches, Mandiant's 22-second median initial-access-to-handoff time (down from more than 8 hours in 2022), and CrowdStrike's 89 percent year-over-year rise in AI-enabled adversary attacks together establish that the chaos phase is real on the attacker side. Stingrai's perspective diverges on the trajectory. The "phase ending in stability" framing implies a single curve resolving to a single equilibrium. The WEF Global Cybersecurity Outlook 2026 data shows a widening cyber resilience gap by organization size: 91 percent of the largest enterprises adjusted their cybersecurity posture due to geopolitical factors; only 59 percent of SMBs have done the same. Stingrai's forecast for 2026 to 2027 is uneven equilibrium across three bands, not convergence to a single new normal.

What did Anthropic's GTG-1002 disclosure prove?

Anthropic disclosed GTG-1002 on November 13, 2025, as the first publicly documented AI-orchestrated cyber espionage campaign at scale. A Chinese state-sponsored group used Claude Code in an MCP-connected agentic framework to autonomously execute roughly 80 to 90 percent of tactical work against approximately 30 organizations across technology, finance, government, and chemical manufacturing, at thousands of requests per second, with human input limited to 4 to 6 critical decision points per campaign. Anthropic detected the activity in mid-September 2025 and contained it within roughly 10 days. The qualitative footnote that often gets dropped: Anthropic explicitly noted that Claude "frequently overstated findings" and "fabricated data," with only "a handful" of approximately 30 targets actually compromised. End-to-end agentic operations are now operationally feasible; validation gaps remain non-trivial.

Where exactly is the chaos phase in 2026?

On the attacker side, it is real and operational: Anthropic's GTG-1002, IBM's 16 percent, Mandiant's 22-second handoff, CrowdStrike's 89 percent year-over-year rise in AI-enabled adversary activity, and named AI-aware malware (PROMPTFLUX, PROMPTSTEAL, QUIETVAULT). On the defender side, it is scaling unevenly. CrowdStrike Charlotte AI's 98 percent triage accuracy and Microsoft Security Copilot's 6.5x phishing-identification figure are real, but adoption is concentrated in well-resourced enterprises. WEF GCO 2026 found that 94 percent of leaders agree AI is the biggest driver of cybersecurity change, yet only 64 percent of organizations have a process to assess the security of AI tools before deployment (up from 37 percent a year earlier). The remaining 36 percent, roughly one in three, deploy without that governance step. The "phase" exists; the population is moving through it at three different speeds.

Will defenders catch up to attackers?

At the top of the population, yes. IBM's finding that organizations using AI defenses extensively saved nearly US$1.9M per breach and identified breaches 80 days faster is direct evidence. CrowdStrike Charlotte AI and the Microsoft Security Copilot Phishing Triage Agent are operationally deployed at thousands of customers, and bug-bounty research is now largely AI-augmented: 70 percent of researchers according to HackerOne, 82 percent according to Bugcrowd. The defender side is catching up. The trajectory diverges at mid-market and SMB scale: WEF GCO 2026 data shows that 46 percent of small organizations report insufficient cyber expertise, against 29 percent of large organizations, and that 85 percent of resilience-deficient organizations have a parallel skills gap. Defenders at the top of the population catch up; those at the bottom will not without different solutions.

How should mid-market CISOs prepare?

Three priorities. First, pick the two highest-ROI AI-defender tools (typically email security and SOC triage) and operationalize them deeply before adding more; do not chase the Band 1 stack with a Band 2 budget. Second, document your AI-governance posture against NIST AI 600-1, OWASP LLM Top 10 v2025, MITRE ATLAS, and (if you have EU exposure) the EU AI Act timeline; the 2 August 2026 high-risk obligation date is already shaping renewal cycles before it takes legal effect. Third, run continuous-validation pentests with hybrid AI-and-human coverage, and treat senior-pentester depth on business-logic and chained findings as a renewal-cycle differentiator with insurers. Stingrai's PTaaS is built for this band.

What does the EU AI Act require for cybersecurity?

The EU AI Act (Regulation (EU) 2024/1689) entered into force on 1 August 2024. Article 5 prohibitions applied from 2 February 2025 and general-purpose AI obligations from 2 August 2025; high-risk-system obligations are scheduled for 2 August 2026 (extended to 2 August 2027 for AI systems embedded in regulated products). The European Commission's "Digital Omnibus on AI" (19 November 2025) proposed deferring high-risk compliance to 2 December 2027; if the proposal is not formally adopted in time, the original timeline applies. Penalties run up to EUR 35M or 7 percent of global annual turnover, whichever is higher, for prohibited practices, and up to EUR 15M or 3 percent for high-risk-system non-compliance. For high-risk AI systems, cybersecurity-relevant obligations cover risk management systems, technical robustness, monitoring, incident reporting, and human oversight. NY DFS-regulated entities should also note the October 2024 industry letter, which provides guidance on AI-resistant authentication and AI-governance training for personnel.
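The timeline and the penalty arithmetic are easier to reason about side by side. The following minimal Python sketch makes several assumptions. It treats the original application dates as binding, meaning the Digital Omnibus deferral is modelled as not adopted. It encodes the Article 99 ceilings as "whichever is higher" for most undertakings and "whichever is lower" for SMEs. The names `obligations_in_force` and `fine_ceiling` are hypothetical helpers for illustration, not part of any official tool. The output is a statutory ceiling, not a predicted fine, because actual penalties are set case by case by national authorities.

```python
from datetime import date

# Application dates for Regulation (EU) 2024/1689 as listed above.
# Assumption: the original timeline applies (Digital Omnibus deferral not adopted).
MILESTONES = [
    (date(2024, 8, 1), "entry into force"),
    (date(2025, 2, 2), "Article 5 prohibitions"),
    (date(2025, 8, 2), "general-purpose AI obligations"),
    (date(2026, 8, 2), "high-risk obligations (standalone systems)"),
    (date(2027, 8, 2), "high-risk obligations (AI embedded in regulated products)"),
]

# Fine ceilings per tier: (fixed amount in EUR, percent of worldwide annual turnover).
CEILINGS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_noncompliance": (15_000_000, 3),
}


def obligations_in_force(on: date) -> list[str]:
    """Hypothetical helper: milestones whose application date is on or before `on`."""
    return [label for start, label in MILESTONES if start <= on]


def fine_ceiling(tier: str, annual_turnover_eur: int, is_sme: bool = False) -> float:
    """Hypothetical helper: maximum fine for a tier.

    Most undertakings face the higher of the fixed amount and the turnover share;
    SMEs (including start-ups) face the lower of the two.
    """
    fixed, percent = CEILINGS[tier]
    turnover_cap = annual_turnover_eur * percent / 100
    return min(fixed, turnover_cap) if is_sme else max(fixed, turnover_cap)


if __name__ == "__main__":
    print(obligations_in_force(date(2026, 1, 1)))
    print(fine_ceiling("prohibited_practice", 1_000_000_000))
```

For example, at EUR 1B turnover the prohibited-practice ceiling is EUR 70M, because 7 percent of turnover exceeds the EUR 35M fixed amount. At EUR 100M turnover, the fixed EUR 35M is the ceiling. For an SME with EUR 10M turnover, the lower figure of EUR 700K applies.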

How are cyber insurers responding to AI risk?

Cyber insurers are moving AI capability from "nice to have" toward underwriting criteria. Coalition's 2026 Cyber Claims Report, drawing on more than 100,000 policy years, found that a record 86 percent of insured businesses refused to pay ransoms in 2025, even as the average initial ransom demand rose 47 percent year-over-year to more than US$1M. At-Bay's 2026 InsurSec Report measured a 7 percent year-over-year rise in claim frequency; ransomware severity of US$508K (+16 percent year-over-year); remote-access services as the entry vector for 87 percent of ransomware claims; and VPN compromise behind 73 percent of intrusions with an identified vector. Marsh McLennan's 2024 cyber market data showed 41 percent of cyber applications denied on first submission, with missing MFA and inadequate endpoint protection the top reasons. The 2026 trend: AI-defender capability moves into the underwriting questionnaire, AI-defender adoption discounts open up at the top of the market, and SMBs face a binary insurable-or-uninsurable question.

What is Stingrai's role in this market?

Stingrai is an offensive-security firm founded in 2021, headquartered in Toronto with an office in London, UK. Team certifications include OSCE3, OSCP, OSWE, OSED, OSEP, CREST CRT, CISSP, CRTO, GCPN, CRTE, and eWPTX. The team has 18 published CVEs (Ivan Spiridonov 10, Moaaz Taha 5, Victor Villar 3) and presents research at DEF CON and BSides. Stingrai's 5.0/5.0 average across 19 Clutch reviews reflects its engagement reputation. Snipe, our internal AI agent trained on more than 6,000 HackerOne disclosures, runs AI-led recon and known-class detection; senior pentesters keep ownership of business-logic discovery, exploit chaining, impact framing, and remediation guidance. On typical engagements the mix is roughly 30 to 40 percent AI-led and 60 to 70 percent human-led, and hybrid AI-augmented PTaaS serves organizations that need depth without a Band 1 budget.

Why is Stingrai writing in response to XBow?

XBow is one of the most visible voices in the AI-offensive-security category and has done genuinely impressive work, including reaching the top of HackerOne's US leaderboard in 90 days, publishing transparent benchmarks against frontier models, and shipping Pentest On-Demand for self-service AI pentesting. The two firms occupy adjacent corners of the same market: XBow is AI-first autonomous offensive security; Stingrai is human-led offensive security with AI augmentation. The postures are complementary rather than oppositional. XBow's chaos-phase framing is direction-correct on the attacker side; Stingrai's contribution to the conversation is the trajectory analysis: not a single chaos phase resolving to stability, but uneven equilibrium across three bands. The next 24 months of buyer, regulator, and underwriter decisions will turn on which model the field uses to plan.

Where can I read more from Stingrai on AI security?

Three companion posts cover the broader picture. Stingrai's AI Cyber Attack Statistics 2026 compiles 89 verified figures from 26 named primary publishers on the offensive side, including the full GTG-1002 disclosure detail and Anthropic's August 2025 report. The AI Cybersecurity Threats 2026 post covers the defender-side reference set: NIST AI 100-1 and AI 600-1, MITRE ATLAS v5.4.0, OWASP LLM Top 10 v2025, ISO/IEC 42001:2023, the EU AI Act, UK AISI, ENISA, IBM CODB 2025 shadow-AI cost, HiddenLayer, Microsoft Security Copilot, and CrowdStrike Charlotte AI. The XBow Mythos response engages XBow's earlier "AI hacking, open to all" argument through the human-AI hybrid floor-vs-ceiling lens. Stingrai's PTaaS offering and web application penetration testing are how the human-AI hybrid posture shows up in actual engagements.

The bottom line

XBow has named the moment well. The chaos phase is real on the attacker side: GTG-1002 demonstrated end-to-end agentic feasibility, IBM put the population number at 1 in 6, Mandiant measured the operational tempo at 22 seconds, and CrowdStrike measured an 89 percent year-over-year rise in AI-enabled adversary activity. The defender side scaled too: IBM's US$1.9M and 80-day savings, Charlotte AI's 98 percent triage accuracy, and Microsoft Security Copilot's 6.5x phishing identification mean the arms race is bidirectional, not one-sided.

What the chaos-phase framing leaves implicit is that the defender adoption curve is not a single curve. WEF's Global Cybersecurity Outlook 2026 data shows a widening gap in cyber resilience between large and small organizations: 91 percent of the largest enterprises adjusted posture for geopolitical and AI risk, against 59 percent of SMBs. The EU AI Act high-risk timeline (currently 2 August 2026, possibly deferred to 2 December 2027), NY DFS expectations, and underwriter response add a regulatory and economic load that hits the mid-market disproportionately. The 2026 to 2027 outcome that fits the public data is uneven equilibrium across three bands, not convergence to a single new normal.

For Stingrai, the practical conclusion is the same one we have been operating on for the last 12 months. Help Band 1 organizations operationalize hybrid AI-defender stacks with senior-pentester depth on hard categories. Help Band 2 organizations pick the two highest-ROI AI defender tools and prove resilience to insurers and regulators. Help Band 3 organizations outsource the integration question and run a single annual scoped pentest with a real human in the loop. Treat the chaos phase as a population-level event that hits each band differently, rather than a market-wide event that resolves uniformly.

XBow's argument deserves engagement. The chaos phase is real. The next 24 months will not deliver a single stable equilibrium; they will deliver three. The buyers, researchers, vendors, and underwriters who plan for divergence will outperform the ones who plan for the average.

References

XBow. "The Chaos Phase: How AI is Transforming Cybersecurity Threats". 2025-2026.

XBow. "Security in 2026: What Breaks, What Scales, and What Survives". 2026.

XBow. "The road to Top 1: How XBOW did it". July 2025.

XBow. "XBOW Raises $120M to Scale its Autonomous Hacker". March 18, 2026.

Anthropic. "Disrupting the first reported AI-orchestrated cyber espionage campaign". November 13, 2025.

Anthropic. "Detecting and countering misuse of AI: August 2025". August 27, 2025.

OpenAI. "Disrupting malicious uses of AI: October 2025". October 2025.

OpenAI. "Disrupting malicious uses of AI: June 2025". June 2025.

IBM. "Cost of a Data Breach Report 2025" press release. July 30, 2025.

IBM. "2025 Cost of a Data Breach Report: Navigating the AI rush". July 2025.

CrowdStrike. "2025 Global Threat Report". February 2025.

CrowdStrike. "2026 Global Threat Report findings". February 2026.

CrowdStrike. "Charlotte AI Detection Triage". February 2025.

Mandiant / Google Cloud. "M-Trends 2026". March 2026.

Microsoft. "Cyber Signals Issue 9: AI-Powered Deception". April 2025.

Microsoft. "Security Copilot for SOC: bringing agentic AI to every defender". November 2025.

Microsoft. "Microsoft Digital Defense Report 2025". October 2025.

World Economic Forum. "Global Cybersecurity Outlook 2026". January 2026.

World Economic Forum. "Global Cybersecurity Outlook 2026 (PDF)". January 2026.

HackerOne. "9th Annual Hacker-Powered Security Report". October 2025.

HackerOne. "210% Spike in AI Vulnerability Reports" press release. October 2025.

Bugcrowd. "Inside the Mind of a Hacker 2026". January 2026.

European Union. "AI Act 2024/1689 regulatory framework". Entered into force August 1, 2024.

European Union AI Act. "Article 99: Penalties".

DLA Piper. "Latest wave of obligations under the EU AI Act take effect". August 2025.

NY Department of Financial Services. "Industry Letter: Cybersecurity Risks Arising from AI". October 16, 2024.

NIST. "AI Risk Management Framework: Generative AI Profile (NIST AI 600-1)". July 26, 2024.

OWASP. "Top 10 for LLM Applications v2025". 2025.

MITRE. "ATLAS adversarial AI knowledge base". January 2026 update.

UK AI Security Institute. "Frontier AI cyber capability research". 2025-2026.

Coalition. "2026 Cyber Claims Report". April 2026.

At-Bay. "2026 InsurSec Report (Help Net Security summary)". April 2026.

Howden. "2025 Cyber Report: Rebooting Growth". September 2025.

Gartner. "AI Applications Will Drive 50 Percent of Cybersecurity Incident Response Efforts by 2028". March 2026.

CyberScoop. "Is XBOW's success the beginning of the end of human-led bug hunting? Not yet." 2025.

Stingrai. "AI Cyber Attack Statistics 2026".

Stingrai. "AI Cybersecurity Threats 2026".

Stingrai. "Cybersecurity Skills Gap Statistics 2026".

Stingrai. "Cyber Insurance Statistics 2026".

Stingrai. "AI Hacking Goes Mainstream: Stingrai's response to XBow's Mythos argument".

Stingrai. "Best PTaaS Providers 2026".