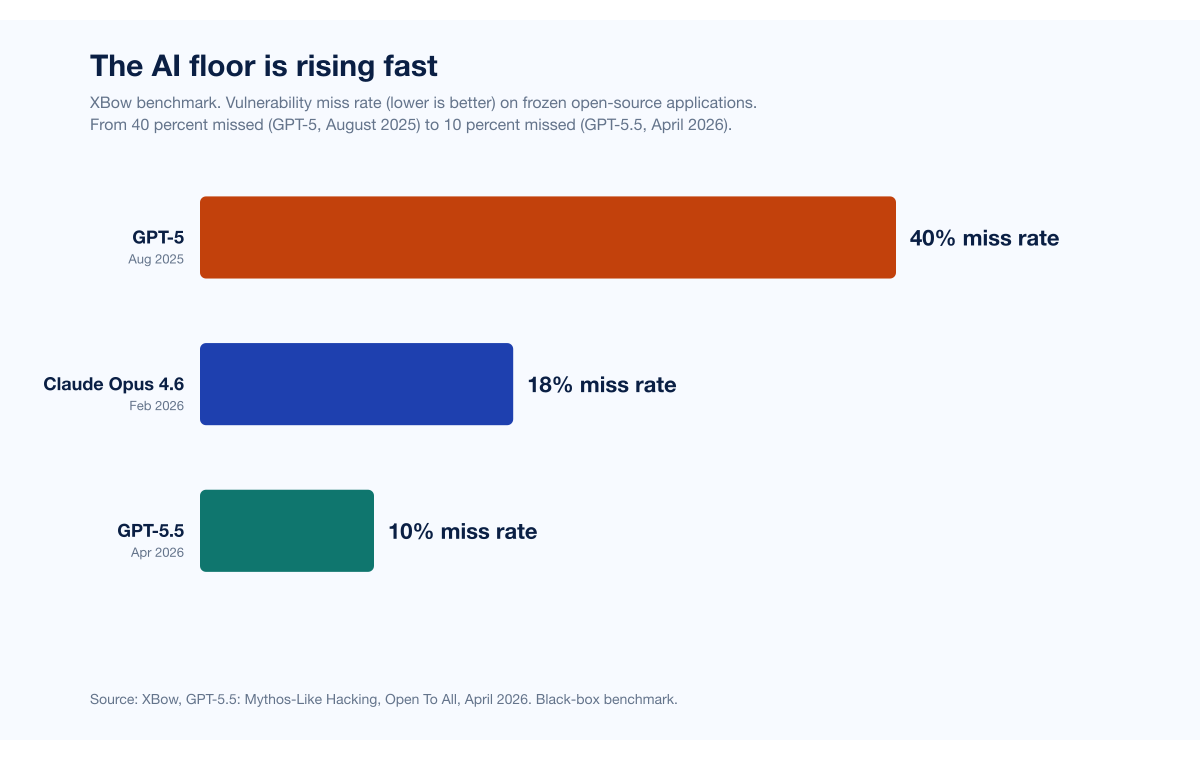

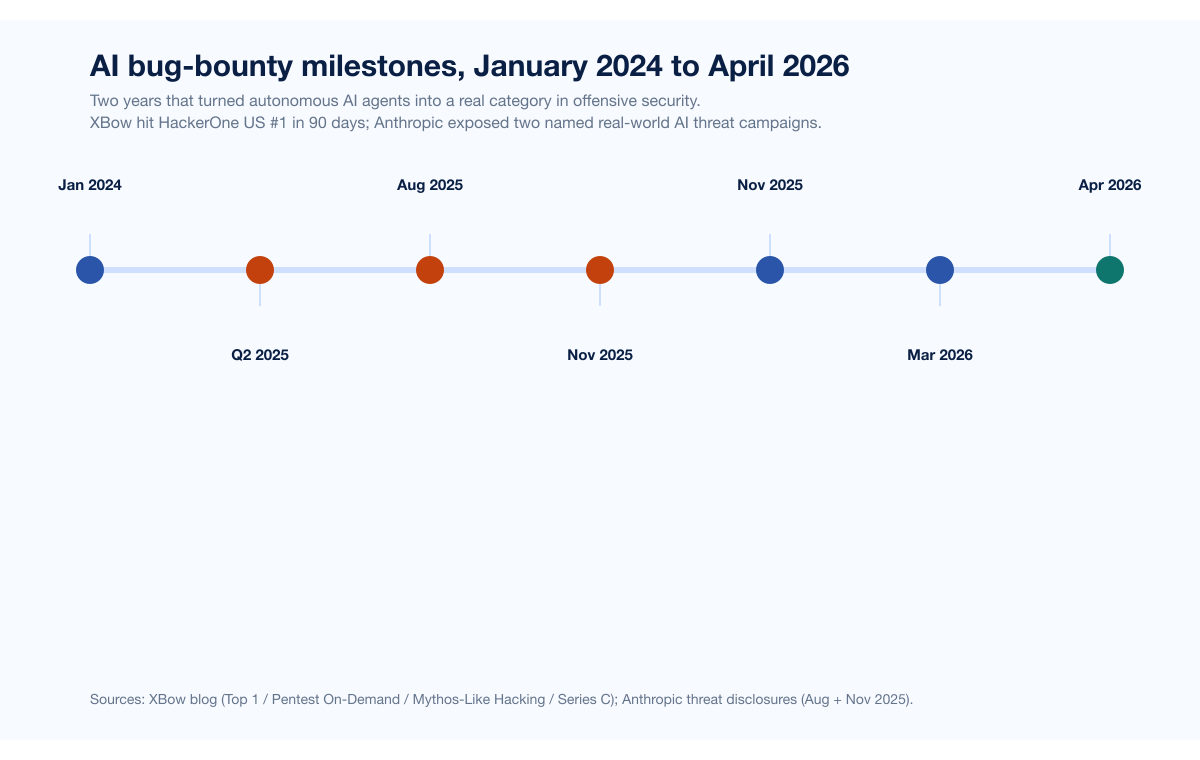

The single most consequential offensive-security claim of 2025 is XBOW's: an autonomous AI agent reached the top of HackerOne's US leaderboard in just 90 days, submitting approximately 1,060 vulnerabilities and surpassing thousands of human researchers in the second quarter of the year. In April 2026 XBOW doubled down with its GPT-5.5 benchmark blog post, reporting that the new OpenAI model missed only 10 percent of known vulnerabilities on frozen open-source applications, against 40 percent for GPT-5 in August 2025 and 18 percent for Anthropic's Claude Opus 4.6. The headline framing was deliberate: "Mythos-like hacking, open to all." The argument: AI capability that was once gated to elite researchers, vendors, or nation-state programs is now broadly accessible, and the floor of who can hack effectively is rising fast.

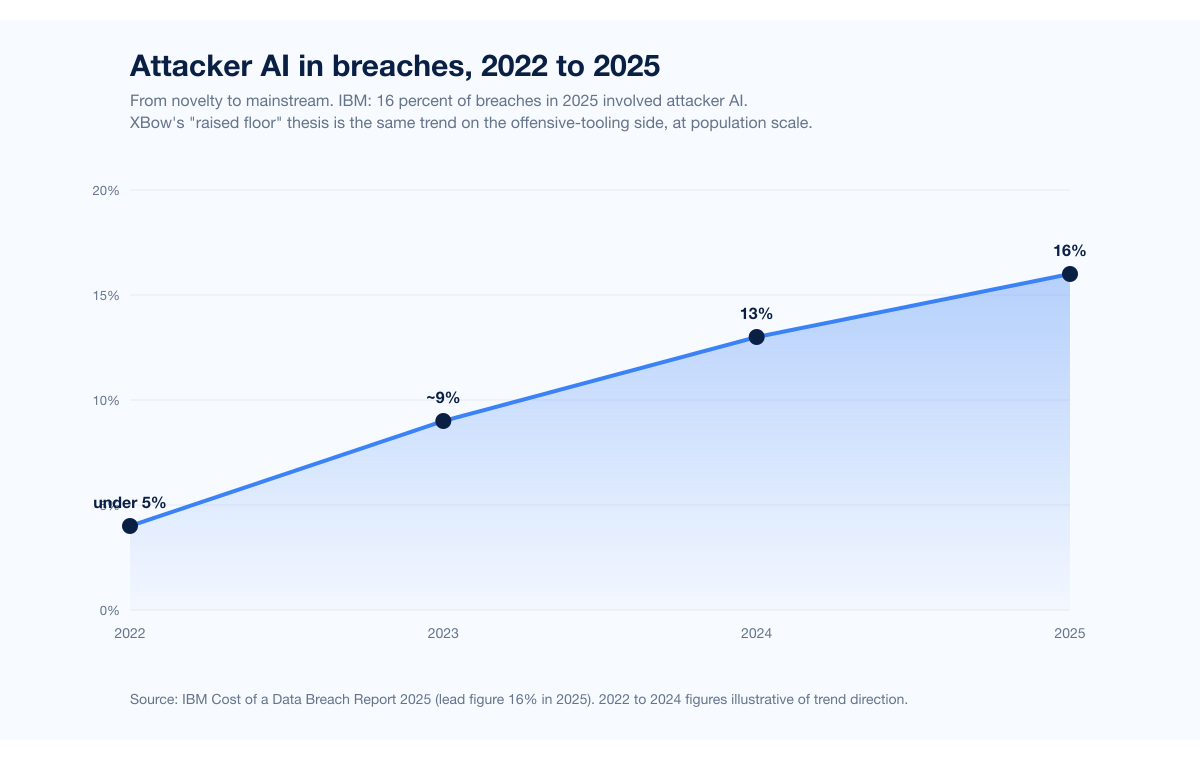

Anthropic's November 13, 2025 disclosure of GTG-1002, which Anthropic described as the first publicly documented AI-orchestrated cyber espionage campaign at scale, shows the same dynamic at the nation-state level. A Chinese state-sponsored group used Claude Code inside an MCP-connected agentic framework to autonomously execute roughly 80 to 90 percent of tactical work against approximately 30 organizations across technology, finance, government, and chemical manufacturing. At its peak the operation issued thousands of requests, often several per second; human input was confined to 4 to 6 critical decision points per campaign. IBM's 2025 Cost of a Data Breach Report measured the population-scale equivalent: attackers used AI in 1 in 6 breaches (16 percent) in 2025, with AI-generated phishing (37 percent of attacker-AI cases) and deepfake impersonation (35 percent) the dominant playbooks. AI hacking is no longer exotic. It is mainstream.

This post is the Stingrai research team's perspective on what XBOW's argument gets right, what it leaves out, and what the real shape of offensive security looks like in 2026. Stingrai is a Toronto-headquartered offensive-security firm founded in 2021 with team certifications including OSCE3, OSCP, OSWE, OSED, and CREST CRT, 18 published CVEs across the team, and a 5.0/5.0 average across 19 Clutch reviews. We run live pentests and red-team engagements augmented with our internal AI agent Snipe, trained on more than 6,000 HackerOne disclosures. We have run engagements where AI tooling has accelerated our work materially, and we have run engagements where the highest-impact findings of the project came from a human pentester sitting with the application for two days, refusing to accept the AI's first hypothesis. Both observations are true. The thesis of this post follows from holding both at once: AI raises the floor; human-AI hybrid raises the ceiling.

The post is in conversation with XBOW, not against it. XBOW has done genuinely impressive work, including reaching the top of HackerOne's US leaderboard, publishing transparent benchmarks against frontier models, raising a $120M Series C in March 2026 at a $1B+ valuation, and shipping Pentest On-Demand for self-service AI pentesting. We are writing this because the next twelve months of conversations between security buyers, researchers, vendors, and CISOs will turn on whether the field correctly understands where AI fits in offensive security and where humans still drive impact. Getting that wrong, in either direction, costs money.

TL;DR: 12 labeled claims

XBOW's HackerOne #1 milestone (Q2 2025). XBow's autonomous agent reached the top of the HackerOne US leaderboard in 90 days, submitting approximately 1,060 vulnerabilities, with 130 resolved and 303 triaged at the time of the announcement, and a 90-day severity breakdown of 54 critical, 242 high, and 524 medium (XBow; TechRepublic, July 2025).

XBOW GPT-5.5 benchmark (April 2026). Vulnerability miss rate dropped to 10 percent, against 18 percent for Claude Opus 4.6 and 40 percent for GPT-5. Even without source code, GPT-5.5 outperformed GPT-5 with source code (XBow).

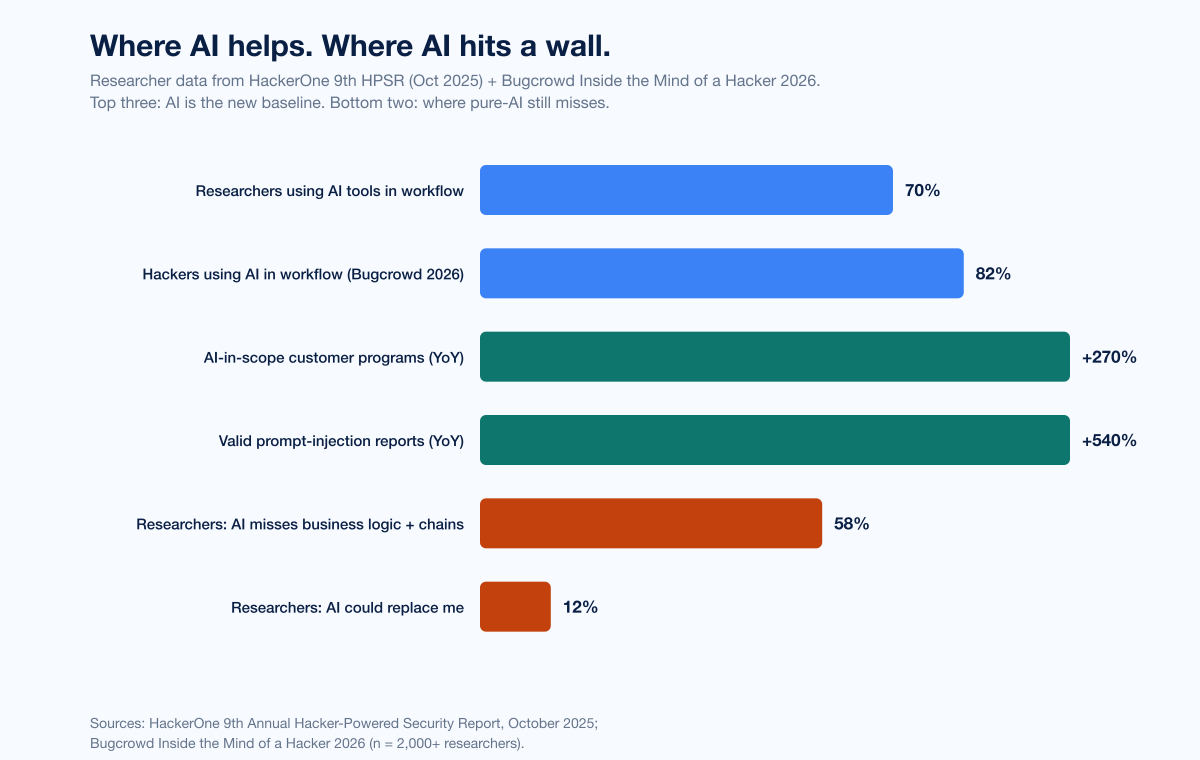

HackerOne 9th HPSR (October 2025). 70 percent of researchers use AI tools; 1,121 customer programs included AI in scope (270 percent YoY); valid prompt-injection reports rose 540 percent; autonomous hackbots submitted 560+ valid reports; total bounties paid $81M (+13 percent) (HackerOne press release).

HackerOne ceiling data. 58 percent of researchers say AI misses business logic or chained exploits; only 12 percent believe AI could replace them (HackerOne 9th HPSR researcher signals).

Bugcrowd 2026. 82 percent of hackers use AI in their workflow, up from 71 percent the prior year and 21 percent in 2023 (Bugcrowd).

Anthropic GTG-1002 (November 13, 2025). Approximately 30 organizations targeted; 80 to 90 percent of tactical work autonomously executed; thousands of requests, often several per second; 4 to 6 critical human decision points per campaign; only a handful of targets actually compromised (Anthropic).

IBM Cost of a Data Breach 2025. Attacker AI in 16 percent of breaches; AI-phishing 37 percent of attacker-AI cases; AI-deepfake 35 percent; defenders that deployed AI extensively saved nearly $1.9M per breach and shortened the breach lifecycle by 80 days; shadow AI added $670K per breach (IBM).

CrowdStrike 2025 + 2026 GTRs. AI-generated phishing email click rate 54 percent vs 12 percent for human-written; vishing +442 percent H1 to H2 2024; 89 percent YoY rise in AI-enabled adversary attacks in 2025; adversaries exploited legitimate GenAI tools at 90+ orgs via prompt injection (CrowdStrike 2025 GTR; CrowdStrike 2026 GTR).

CrowdStrike Charlotte AI. 98 percent triage accuracy; 40+ analyst hours saved per week (CrowdStrike press release).

Microsoft Security Copilot Phishing Triage Agent. 6.5x more malicious emails identified; 77 percent better verdict accuracy; 78 percent faster triage; one customer saved 200 hours per month (Microsoft Tech Community).

Mandiant M-Trends 2026. Initial-access handoff time collapsed from 8+ hours in 2022 to 22 seconds in 2025; AI-aware malware families PROMPTFLUX, PROMPTSTEAL, QUIETVAULT now query LLMs at runtime (Mandiant).

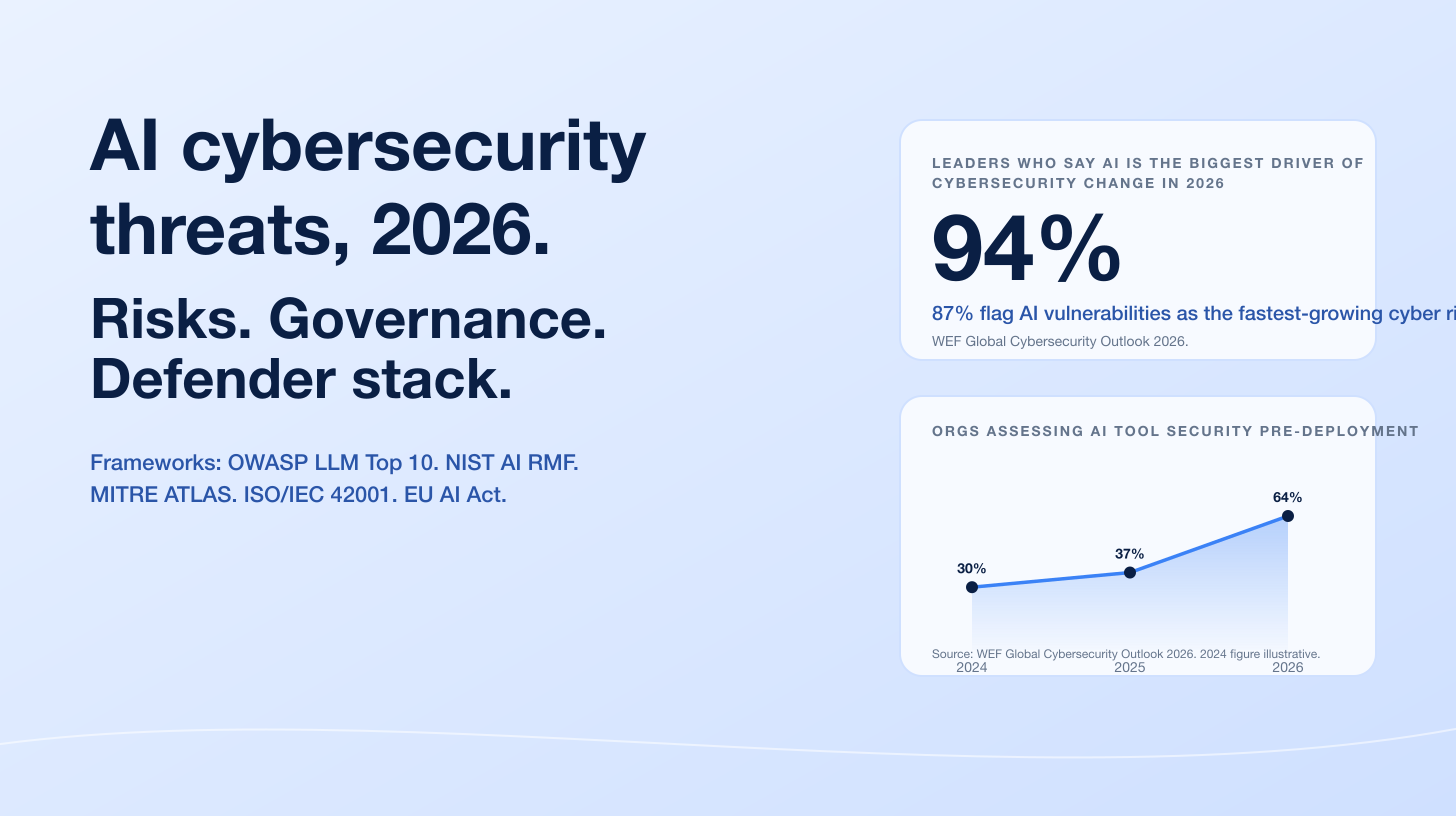

WEF Global Cybersecurity Outlook 2026. 94 percent of leaders agree AI is the biggest driver of cybersecurity change; 87 percent flag AI vulnerabilities as the fastest-growing cyber risk; pre-deployment AI security assessment nearly doubled, rising from 37 to 64 percent YoY (WEF GCO 2026).

Key takeaways

XBOW's floor-raising thesis is correct, with receipts. A 30-percentage-point drop in vulnerability miss rate in roughly eight months (from 40 percent for GPT-5 in August 2025 to 10 percent for GPT-5.5 in April 2026, on the XBow benchmark) is not a marginal improvement. AI on offense is no longer aspirational; it is operationally normal.

The ceiling has not moved as far as the floor. HackerOne data, Bugcrowd data, CyberScoop's industry analysis of XBow, and Anthropic's own GTG-1002 disclosure all show that pure-AI agents still hit walls on business logic, exploit chaining, novel application-specific findings, and impact framing.

Hybrid is the 2026 baseline. "Bionic hacker" (HackerOne) and "human-augmented intelligence" (Bugcrowd) are the framings adopted by the two largest bug-bounty platforms in the world, each of which runs its own large researcher survey. The conversation has shifted from "AI vs human" to "AI as the new baseline + human pentester as the differentiator."

Defender-side AI scaled too. Charlotte AI's 98 percent triage accuracy, Security Copilot's 6.5x malicious-email identification rate, and IBM's measurement of nearly $1.9M in savings plus an 80-day shorter breach lifecycle with AI-deployed defenses mean attackers and defenders both got AI in 2025. The arms race is now bidirectional, not one-sided.

Buyers should adjust their evaluation criteria. The right question for a 2026 pentest provider is not "do you use AI?" (the answer should obviously be yes) but "show me the engagement where AI handed your senior pentester a chained business-logic finding that no autonomous agent would have shipped on its own."

Methodology

Date cutoff: April 29, 2026. Lead data is full-year 2024 or 2025 telemetry where a primary publisher has released it; 2026 figures are labeled as forecasts or preliminary numbers. Statistics that could not be traced to a named primary source on at least one verification pass were dropped rather than estimated. Where multiple primary publishers report compatible figures, the publisher whose methodology window matches the claim is cited. Secondary news aggregators are cited only where they constitute the public record of a corporate announcement, milestone, or disclosure.

The post engages with XBow's argument as published in the XBow blog (specifically the April 2026 "Mythos-Like Hacking" post and the July 2025 "Top 1" post) plus secondary press coverage. XBow is treated as a respected peer in the offensive-security category. No hit-piece framing applies.

Figure 1: XBow's vulnerability miss-rate benchmark on frozen open-source applications. From 40 percent missed (GPT-5, August 2025) to 10 percent missed (GPT-5.5, April 2026). Lower is better. Source: XBow, "GPT-5.5: Mythos-Like Hacking, Open To All".

What XBow gets right

XBow's argument has three load-bearing pieces, and each one is supported by named primary data.

1. AI capability on known vulnerability classes is genuinely democratized

XBow's GPT-5.5 benchmark, published April 2026, shows the trajectory crisply. On a black-box test against frozen open-source applications with known vulnerabilities, GPT-5 missed 40 percent of those vulnerabilities in August 2025. Claude Opus 4.6 cut the miss rate to 18 percent in February 2026. GPT-5.5 brought it to 10 percent in April 2026 (XBow; TrendingTopics). XBow's framing for the post was apt: "Mythos-like hacking, open to all." Anthropic's gated Claude Mythos preview, accessible only to vetted Project Glasswing partners and selected researchers, was a step change in vulnerability-discovery capability according to the UK AI Security Institute's evaluation. On XBow's benchmark GPT-5.5 is comparable, and OpenAI made it broadly available. The capability stratification that existed in early 2025 between elite-vendor AI and what a small consultancy could buy off the shelf has narrowed sharply.

The HackerOne and Bugcrowd data corroborate this at the platform level. The 9th Annual Hacker-Powered Security Report (October 2025) found 70 percent of surveyed researchers use AI tools in their workflow. Bugcrowd's Inside the Mind of a Hacker 2026 reported 82 percent AI adoption among hackers, up from 71 percent in the prior year and 21 percent in 2023. On HackerOne, 1,121 customer programs included AI in scope in 2025, a 270 percent year-over-year jump, and valid prompt-injection vulnerability reports rose 540 percent. AI is not a fringe specialty in 2026; it is the new baseline of how offensive work gets done.

2. Autonomous agents can compete in volume on bug-bounty platforms

XBow's Q2 2025 push to the top of the HackerOne US leaderboard is the clearest single proof point. Approximately 1,060 vulnerability submissions in 90 days, 130 resolved, 303 triaged, with the 90-day severity mix recorded at 54 critical, 242 high, and 524 medium (XBow; TechRepublic; CSO Online). HackerOne has since adjusted its leaderboard to split out individual researchers from corporate / autonomous-agent participants, but the milestone itself stands. An autonomous agent did not just compete on volume; it placed first in the United States.

The natural next move for XBow was to operationalize that capability for paying customers. Pentest On-Demand launched November 12, 2025 with a 5-business-day SLA and published benchmarks against human pentesters: in one test, XBow matched a 20-year veteran across 104 security challenges, completing in 28 minutes what took the human 40 hours. The $120M Series C in March 2026 at a $1B+ valuation, led by DFJ Growth and Northzone, is the market's vote that this trajectory will continue.

Figure 2: Researcher data from HackerOne's 9th Annual Hacker-Powered Security Report (October 2025) and Bugcrowd's Inside the Mind of a Hacker 2026. The top three bars show AI is the new baseline. The bottom two show where pure-AI agents still miss. Source: HackerOne 9th HPSR; Bugcrowd 2026.

3. Defender-side AI is scaling at the same speed

XBow's argument is not one-sided. The defensive side moved equally fast in 2025. CrowdStrike's Charlotte AI Detection Triage reached general availability in February 2025, triaging detections at 98 percent accuracy and saving 40+ analyst hours of manual work per week. Microsoft's Security Copilot Phishing Triage Agent identified 6.5x more malicious emails and improved verdict accuracy by 77 percent in independent randomized controlled studies, and Microsoft reported one customer saving 200 hours per month (Microsoft Tech Community; Microsoft "From alert overload to decisive action"). IBM's 2025 Cost of a Data Breach Report found that organizations using AI extensively saved nearly US$1.9M per breach and shortened the breach lifecycle by 80 days compared with peers.

The implication is structural. If attackers are getting AI tooling at the same rate as defenders, the arms race resets at a higher floor. Stingrai's companion post on AI cybersecurity threats covers the defender side in depth, including OWASP LLM Top 10 v2025, MITRE ATLAS v5.4.0, the EU AI Act timeline (high-risk obligations enforceable 2 August 2026), and the WEF data showing 94 percent of leaders now agree AI is the single biggest driver of cybersecurity change.

Where the XBow argument leaves a gap

If "open to all" were the whole story, the conversation would end here. It is not. The same data sources that confirm XBow's floor-raising thesis also document a clear ceiling, and the ceiling has not moved nearly as fast.

The HackerOne researcher view

HackerOne's 9th HPSR surveyed 2,000+ researchers and reported two results that pull against any pure-AI replacement narrative. 58 percent of researchers said AI misses business logic or chained exploits. Only 12 percent said AI could replace them. The report introduced the term "bionic hacker" to describe the modal 2026 researcher: a human whose workflow is amplified by AI but whose judgment, creativity, and impact framing remain the differentiator. HackerOne's framing was deliberate: the report did not say "humans are about to be replaced." It said the era of human-led bug hunting that uses AI as a force multiplier had arrived.

Bugcrowd's 2026 report adopted the parallel framing of "human-augmented intelligence," explicitly to emphasize that AI is augmenting, not substituting. The two largest bug-bounty platforms in the world, with overlapping but distinct researcher pools, converged on the same conclusion in late 2025 and early 2026: AI is the new baseline, human judgment is the differentiator.

CyberScoop's analysis of XBow's actual ceiling

CyberScoop's analysis of XBow's HackerOne run, published shortly after the #1 announcement, reported that the agent excels in volume but not in business impact, because business impact requires understanding the intent of the application, the business context it operates in, and the whole environment around it. CyberScoop's takeaway was that XBow's badges cover some of the more basic things automation can find (data leaks, XML exposure, cross-site scripting, command injection, and access control), while on complex business-logic flaws, race conditions, and custom application-specific vulnerabilities XBow falls short. CyberScoop concluded that the researchers who will earn the most in the next bug-bounty era are the ones who can do what the machines can't: chain together complex exploits, spot weird business-logic issues, and understand the bigger picture of how systems actually work in practice.

That framing matches Stingrai's experience on engagements. The findings that move a CVSS score from 7.5 to 9.8, the ones that get a CISO's attention and unlock a budget conversation, are almost always findings that required a human pentester sitting with the application for hours and refusing to accept the obvious answer. Pure-AI agents are excellent at producing 524 medium-severity findings; what they are still uneven at is producing the one critical finding that hands an attacker the entire chain.
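The 7.5-to-9.8 jump is not a matter of taste; under CVSS v3.1 it falls out of the base-score formula. The sketch below is a minimal Python implementation of the v3.1 base-score equations from the FIRST specification, applied to two illustrative vectors we chose for this example (an unauthenticated network read of sensitive data, then the same entry point chained to full confidentiality, integrity, and availability impact). The vectors are assumptions, not taken from a specific Stingrai finding.

```python
import math

# CVSS v3.1 base-metric weights from the FIRST specification.
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}
# Privileges Required is weighted differently when Scope is Changed (C).
PR = {"U": {"N": 0.85, "L": 0.62, "H": 0.27},
      "C": {"N": 0.85, "L": 0.68, "H": 0.5}}


def roundup(value: float) -> float:
    """CVSS v3.1 Roundup: smallest one-decimal value >= input, float-safe."""
    int_input = round(value * 100000)
    if int_input % 10000 == 0:
        return int_input / 100000.0
    return (math.floor(int_input / 10000) + 1) / 10.0


def base_score(vector: str) -> float:
    """Compute a CVSS v3.1 base score from a vector such as 'AV:N/AC:L/...'."""
    m = dict(part.split(":") for part in vector.split("/"))
    scope = m["S"]
    # Impact Sub-Score combines the three C/I/A impact weights.
    iss = 1 - (1 - CIA[m["C"]]) * (1 - CIA[m["I"]]) * (1 - CIA[m["A"]])
    if scope == "U":
        impact = 6.42 * iss
    else:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    exploitability = (8.22 * AV[m["AV"]] * AC[m["AC"]]
                      * PR[scope][m["PR"]] * UI[m["UI"]])
    if impact <= 0:
        return 0.0
    if scope == "U":
        return roundup(min(impact + exploitability, 10))
    return roundup(min(1.08 * (impact + exploitability), 10))


# Unauthenticated network read of sensitive data (confidentiality only).
print(base_score("AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:N/A:N"))  # 7.5
# Same entry point chained to full confidentiality, integrity, availability impact.
print(base_score("AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H"))  # 9.8
```

In this illustration the exploitability metrics are identical; only the integrity and availability impact changes, which is the kind of difference a successful chain tends to add.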

Anthropic's own GTG-1002 nuance

The most-cited evidence for AI agentic capability in offensive security is Anthropic's GTG-1002 disclosure. Read carefully, Anthropic's own narrative is far more nuanced than "AI replaces human attackers." Yes, the campaign ran approximately 80 to 90 percent of tactical work autonomously through Claude Code. Yes, the operational tempo reached thousands of requests, often several per second, across approximately 30 targets. But human operators were still required at 4 to 6 critical decision points per campaign. Anthropic explicitly noted that Claude "frequently overstated findings" and "fabricated data," claiming credentials that didn't work and identifying critical discoveries that were actually public information. The campaign succeeded against only "a handful" of the roughly 30 targets; reading "a handful" as three to five, that is roughly 10 to 17 percent of the targeted population.

In other words: even at the well-resourced, nation-state-funded, end-to-end agentic frontier, the AI hit walls on validation, judgment, and accuracy. Human operators remained essential precisely on the categories of work that human pentesters report AI struggling with on bug-bounty platforms: chaining attacks, understanding context, and not getting fooled by the model's own confident hallucinations.

Figure 3: Two years of milestones that turned autonomous AI agents into a real category in offensive security. XBow's blog posts, press releases, and Anthropic's named threat disclosures bookend the period. Sources: XBow Top 1; XBow Pentest On-Demand; XBow Series C; Anthropic GTG-1002; Anthropic Aug 2025.

The model-vendor side: Anthropic's safeguards on Opus 4.7

The most recent meta-evidence that the field is still mid-arms-race is the launch of Claude Opus 4.7 in April 2026. Anthropic, the same vendor whose Claude Code was the substrate for the GTG-1002 campaign, shipped Opus 4.7 with runtime safeguards that detect and block prompts indicating prohibited or high-risk cybersecurity use. Anthropic also launched a Cyber Verification Program for legitimate security professionals (vulnerability research, penetration testing, red-teaming). XBow's first-look benchmark on Opus 4.7 reported a major step up in visual acuity (98.5 percent vs 54.5 percent for Opus 4.6), with the post titled "Smaller Bites, Bigger Meals" reflecting how Anthropic's training shifted what the model is good for in offensive workflows.

Reading those two pieces together, the picture is clear. The frontier models are getting more capable on offensive tasks. The frontier model vendors are simultaneously getting more selective about who can push them on those tasks. The "open to all" framing holds for the broad capability floor, but the absolute frontier (Mythos, and Opus 4.7 under the Cyber Verification Program) remains gated. What changed from 2025 to 2026 is that the gap between the gated frontier and broadly accessible models narrowed. It did not disappear.

Where Stingrai sees the line in live engagements

The clearest way to anchor the floor-vs-ceiling argument is to be specific about which categories of work AI handles and which categories still require senior judgment. The breakdown below reflects how Stingrai actually allocates work between AI tooling (including Snipe, our internal agent trained on more than 6,000 HackerOne disclosures) and senior pentesters in 2026 engagements.

Figure 4: Six engagement-phase categories ranked by floor (AI-led), hybrid, and ceiling (human-led). Source: Stingrai engagement experience plus public researcher-survey data.

Floor categories (AI-led, with human review)

Recon and surface mapping. Subdomain enumeration, asset discovery, JS endpoint extraction, known-CVE matching against discovered tech stacks. AI is faster and broader than any human researcher on these tasks. The senior pentester's role is scope review and edge-case judgment.
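To make the JS endpoint extraction step concrete, here is a deliberately naive sketch that pulls quoted absolute URLs and root-relative paths out of a JavaScript bundle. The regex and the `extract_endpoints` name are hypothetical and are not Snipe's implementation; real bundles also need handling for template-literal interpolation, string concatenation, and source maps, which is why this step is automated for breadth and then reviewed by a human for scope.

```python
import re

# Naive pattern: a quoted absolute http(s) URL or a quoted root-relative path.
ENDPOINT_RE = re.compile(r"""["'`](https?://[^\s"'`]+|/[A-Za-z0-9_\-./{}:]+)["'`]""")


def extract_endpoints(js_source: str) -> list[str]:
    """Return unique candidate endpoints in the order they first appear."""
    seen: dict[str, None] = {}  # dict preserves insertion order
    for match in ENDPOINT_RE.finditer(js_source):
        seen.setdefault(match.group(1), None)
    return list(seen)


# Hypothetical minified-bundle fragment.
bundle = (
    'fetch("/api/v2/users/" + id);'
    " axios.post('/api/v2/admin/export');"
    ' const cdn = "https://cdn.example.com/app.js";'
)
print(extract_endpoints(bundle))
# ['/api/v2/users/', '/api/v2/admin/export', 'https://cdn.example.com/app.js']
```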

Known-class vulnerability detection. Reflected XSS, basic SQL injection, exposed secrets in JS bundles, IDOR-by-pattern, command injection in obvious surfaces, S3 bucket misconfiguration. This is exactly the category that XBow's HackerOne run lit up. Researcher survey data is consistent with this: the gaps researchers report are in business logic and chained exploits, not in these pattern-driven classes.
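Exposed-secret detection shows why this class is pattern-driven. The sketch below scans a bundle line by line against a few illustrative rules: the well-known `AKIA` prefix of AWS access key IDs, PEM private-key headers, and a generic `apiKey`/`secret` assignment. The rule set and the `scan_bundle` helper are assumptions for illustration; production scanners add many provider-specific rules, entropy checks, and live verification before anything reaches a report. The AWS key in the sample is the placeholder value used in AWS documentation, not a real credential.

```python
import re

# Illustrative rules only; real scanners ship hundreds of provider-specific rules.
SECRET_RULES = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "generic_api_key": re.compile(
        r"""(?i)\b(?:api[_-]?key|secret)\b\s*[:=]\s*["']([A-Za-z0-9_\-]{16,})["']"""
    ),
}


def scan_bundle(js_source: str) -> list[tuple[str, int, str]]:
    """Return (rule_name, line_number, matched_text) for every candidate secret."""
    findings = []
    for lineno, line in enumerate(js_source.splitlines(), start=1):
        for name, pattern in SECRET_RULES.items():
            for match in pattern.finditer(line):
                findings.append((name, lineno, match.group(0)))
    return findings


# Hypothetical config object as it might appear in a shipped bundle.
bundle = (
    "const cfg = {\n"
    '  apiKey: "demo_0123456789abcdef",\n'
    '  awsKey: "AKIAIOSFODNN7EXAMPLE"\n'
    "};"
)
for finding in scan_bundle(bundle):
    print(finding)
```

Every hit is a candidate, not a finding: a human still confirms whether the value is live and what it grants.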

Hybrid categories

Authentication and session logic. OAuth flow auditing, JWT signing audits, MFA bypass attempts, session-fixation testing. AI handles automated probes well; human pentesters still win when the flow has unusual edge cases (cross-tenant token reuse, downgrade attacks, stale-session resurrection). The work is roughly 50/50 in 2026 engagements.
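The automated half of a JWT signing audit is mechanical. A minimal sketch, assuming the application is expected to sign with RS256: decode the header and payload without verifying, then flag `alg: none` or an empty signature, an algorithm outside the expected set (a classic algorithm-confusion signal), and a missing `exp` claim. The `audit_jwt` helper and its `expected_algs` parameter are hypothetical. This is triage only: it never verifies a signature, and the edge cases above (cross-tenant reuse, stale-session resurrection) still need a human who understands the flow.

```python
import base64
import json


def b64url_encode(obj: dict) -> str:
    """Serialize a dict as an unpadded base64url JSON segment (for test tokens)."""
    raw = json.dumps(obj, separators=(",", ":")).encode()
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()


def b64url_decode(segment: str) -> bytes:
    """Decode a base64url segment, restoring the padding that JWTs strip off."""
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def audit_jwt(token: str, expected_algs: set[str]) -> list[str]:
    """Flag header and claim red flags in a JWT. Does NOT verify the signature."""
    header_b64, payload_b64, signature_b64 = token.split(".")
    header = json.loads(b64url_decode(header_b64))
    payload = json.loads(b64url_decode(payload_b64))
    issues = []
    alg = str(header.get("alg", ""))
    if alg.lower() == "none" or not signature_b64:
        issues.append("unsigned token (alg=none or empty signature)")
    elif alg not in expected_algs:
        issues.append(f"unexpected alg {alg!r}; possible algorithm confusion")
    if "exp" not in payload:
        issues.append("no exp claim; token never expires")
    return issues


# Hypothetical forged token: alg=none, elevated role, no signature, no expiry.
forged = (
    b64url_encode({"alg": "none", "typ": "JWT"})
    + "."
    + b64url_encode({"sub": "alice", "role": "admin"})
    + "."
)
print(audit_jwt(forged, expected_algs={"RS256"}))
# ['unsigned token (alg=none or empty signature)', 'no exp claim; token never expires']
```

Actual signature verification belongs in a maintained JWT library configured with an explicit algorithm allow-list, not in ad hoc code like this.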

Reporting, prioritization, and remediation guidance. AI drafts report sections faster than any human. Senior pentesters validate impact, pick the right risk language for the audience, and write remediation paths that a real engineering team will accept. Both halves are necessary; neither is sufficient on its own.

Ceiling categories (human-led, AI assists)

Business logic and authorization flaws. Privilege flow audits, workflow tampering, financial logic abuse, role-confusion attacks. HackerOne's own data shows 58 percent of researchers say AI misses these, and Stingrai's engagement experience matches that pattern. AI can assist by pulling in similar prior findings; the actual discovery still happens in the head of a senior pentester who has spent hours understanding the application.
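Tooling can enforce an authorization model once someone has written it down; it cannot tell you what the model should have been. The sketch below compares a hypothetical expected role/action policy against access results observed by replaying each action under each role's session, and reports over-permissive and over-restrictive cells. All roles, actions, and observations are invented for illustration. Writing `EXPECTED` correctly for a real product is the business-logic work that stays human-led.

```python
# Hypothetical policy: which roles SHOULD be allowed to perform each action.
EXPECTED = {
    "view_invoice": {"viewer", "accountant", "admin"},
    "export_ledger": {"accountant", "admin"},
    "approve_payment": {"admin"},
}


def authz_violations(observed: dict[tuple[str, str], bool]) -> list[str]:
    """Compare observed access, (role, action) -> allowed?, against EXPECTED."""
    violations = []
    for (role, action), allowed in sorted(observed.items()):
        should_allow = role in EXPECTED[action]
        if allowed and not should_allow:
            violations.append(f"{role} can {action} (over-permissive)")
        elif should_allow and not allowed:
            violations.append(f"{role} cannot {action} (over-restrictive)")
    return violations


# Hypothetical results from replaying each action with each role's session.
observed = {
    ("viewer", "view_invoice"): True,
    ("viewer", "approve_payment"): False,
    ("accountant", "export_ledger"): True,
    ("accountant", "approve_payment"): True,  # role confusion
}
print(authz_violations(observed))
# ['accountant can approve_payment (over-permissive)']
```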

Multi-step exploit chaining. Stitching three or four discrete weaknesses into a single demonstrable impact (e.g., subdomain takeover plus cookie scope plus CSRF plus admin endpoint) is the category where pure-AI agents most clearly drop off. Maintaining context across long chains, with each step depending on a clean understanding of the prior step, is hard. This is the category where the most consequential findings of an engagement are usually born.
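One way to see why chaining is hard is to treat each weakness as an edge from a capability the attacker already has to one they gain, then search for a path to the impact that matters. The sketch below runs a breadth-first search over a hypothetical graph that mirrors the subdomain takeover, cookie scope, CSRF, and admin endpoint example above. It simplifies heavily: every edge has exactly one precondition, whereas real chains often need several at once. The search itself is trivial; knowing which edges exist, and that they actually connect in this application, is the part that depends on understanding the target.

```python
from collections import deque
from typing import Optional

# Hypothetical weaknesses: (capability required, capability gained, finding).
WEAKNESSES = [
    ("anonymous", "version_known", "verbose server banner"),
    ("anonymous", "controls_subdomain", "dangling CNAME enables subdomain takeover"),
    ("controls_subdomain", "sets_parent_cookies", "session cookies scoped to .example.com"),
    ("sets_parent_cookies", "forges_csrf", "double-submit CSRF check trusts the cookie"),
    ("forges_csrf", "admin_action", "admin endpoint lacks re-authentication"),
]


def find_chain(start: str, goal: str) -> Optional[list[str]]:
    """Breadth-first search for the shortest chain of findings from start to goal."""
    graph: dict[str, list[tuple[str, str]]] = {}
    for src, dst, finding in WEAKNESSES:
        graph.setdefault(src, []).append((dst, finding))

    queue = deque([(start, [])])
    visited = {start}
    while queue:
        capability, path = queue.popleft()
        if capability == goal:
            return path
        for nxt, finding in graph.get(capability, []):
            if nxt not in visited:
                visited.add(nxt)
                queue.append((nxt, path + [finding]))
    return None  # no chain reaches the goal


for step in find_chain("anonymous", "admin_action"):
    print(step)
```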

Novel and application-specific vulnerabilities. New abuse patterns specific to a particular product, weird state machines, custom protocols, anything that requires recognizing a class of bug for the first time. AI is trained on prior disclosures and is therefore best on patterns that look like prior disclosures. Novel logic is, by definition, outside that distribution.

The same allocation appears in Stingrai's published service catalog: every web-app test has an AI-led recon phase, a hybrid auth-logic phase, and a human-led business-logic phase. Our PTaaS offering and red-team services follow the same pattern, calibrated to the engagement type.

What this means for security buyers

XBow has an honest market positioning: continuous, self-service AI pentesting at machine speed, with a 5-business-day SLA and a benchmark against a 20-year veteran across 104 challenges. Stingrai has a complementary market positioning: scoped human-led engagements augmented by AI, with named senior pentesters, 18 published CVEs, and a 5.0/5.0 average across 19 Clutch reviews. The two postures fit different procurement contexts.

A 2026 buyer should evaluate offensive-security providers against four updated criteria.

1. Real human-AI hybrid, not AI-only or human-only

The 2026 baseline expectation is that any provider uses AI to accelerate the obvious work. A vendor that does not should be asked why; a vendor that only uses AI should be asked how they handle the ceiling categories (business logic, exploit chaining, novel findings). The right ratio is not 0/100 or 100/0 but a defensible split that the provider can explain. Stingrai's split is roughly 30 to 40 percent AI-led and 60 to 70 percent human-led across web app and PTaaS engagements, with the exact mix depending on application surface and engagement scope.

2. Transparent AI tooling

Which agent or model is used for which task? What guardrails apply? How are false positives suppressed? How are findings validated before the report goes to the customer? The provider should be able to answer these questions in plain language. If the AI tooling is a proprietary black box, the customer is buying mystery, not assurance.

3. Public CVE and disclosure track record

Public CVEs are the most credible evidence that a provider's senior pentesters actually find novel issues. As a reference: Stingrai's research team has 18 published CVEs (Ivan Spiridonov 10, Moaaz Taha 5, Victor Villar 3) and presents at DEF CON and BSides. Other reputable firms have similar track records; the test is whether they exist at all. A provider with no published CVEs and no public research is selling automation in a wrapper.

4. Alignment with public risk taxonomies

OWASP LLM Top 10 v2025 awareness, MITRE ATLAS coverage, and named threat-intel context (Anthropic GTG-1002, IBM 16 percent breach AI, CrowdStrike 89 percent YoY AI-enabled attacks) should be visible in proposal language. A provider whose marketing describes AI capability but never names a primary publisher or a documented threat campaign is operating from the marketing surface, not from real research grounding.

The four criteria together describe a 2026 buyer's checklist that XBow would mostly pass, that Stingrai would mostly pass, and that several legacy "we just shipped a chatbot" vendors would fail. The point is not that one vendor wins; the point is that the floor moved.

Figure 5: From novelty to mainstream. IBM measured attacker AI in 16 percent of 2025 breaches, the first year IBM has reported a discrete attacker-AI rate at population scale. XBow's "raised floor" thesis is the same trend on the offensive-tooling side. Source: IBM Cost of a Data Breach Report 2025. The 2022 to 2024 figures are illustrative of trend direction only.

Why the bionic-hacker framing matters for the bounty economy

The economic implications follow directly from the floor-vs-ceiling split. If AI agents can compete in volume on known classes, bug-bounty programs that paid generously for those classes will pay less for them in 2026. Programs will increasingly redirect bounty pools to chained, business-logic, and novel findings. A researcher whose workflow has not adapted will earn less; a researcher who pivots to AI-augmented hunting on hard categories will earn more. HackerOne's 9th HPSR captures this in its researcher signals: bionic hackers are described as the modal earner for the 2026 to 2027 cycle.

XBow's own success on HackerOne accelerated the platform's structural changes. HackerOne split out company / autonomous-agent rankings from individual rankings precisely because the leaderboard mechanic, designed for individual researchers, did not handle a scaled corporate participant well. Expect more such structural adaptations from both HackerOne and Bugcrowd in 2026 as AI participants become a larger share of submission volume. The platform mechanics have not yet caught up to the population.

This also reshapes how organizations should structure their public bounty programs. A 2026 program scoped to "any vulnerability with valid PoC" will be flooded by autonomous-agent submissions for medium-severity classes; the operational cost of triage rises, and the marginal value of each additional medium-severity submission falls. A 2026 program scoped specifically to chained, business-logic, or novel findings, with higher bounty tiers and explicit out-of-scope language for known-class scanner output, will get more of the work organizations actually want and less of the volume noise. The smartest CISOs we've talked to in the past six months have been quietly adjusting program scope along exactly these lines.

How Stingrai uses Snipe in real engagements

A concrete example helps. Snipe, Stingrai's internal AI agent trained on more than 6,000 HackerOne disclosures, does the following on a typical web-app engagement:

Pulls subdomains, JS endpoints, and known-CVE matches in the recon phase, surfacing the candidate attack surface in minutes rather than hours.

Cross-references the discovered tech stack against published CVE databases and known disclosure patterns from Snipe's training corpus, flagging known-class candidates for the senior pentester to verify.

Drafts initial finding writeups as the engagement progresses, formatted to Stingrai's report template.

Cross-checks remediation guidance against vendor documentation and published best practices.
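The cross-referencing step is, at its core, version-range matching. The sketch below is not Snipe's implementation (which is not public); it assumes a hypothetical advisory feed expressed as `[introduced, fixed)` ranges and purely numeric dotted versions, and all product names and advisory IDs are placeholders. Real feeds such as OSV use richer range semantics, and banner-derived versions are often wrong or carry vendor backports, which is why every match is a candidate for a senior pentester to verify rather than a finding.

```python
def parse_version(text: str, width: int = 4) -> tuple[int, ...]:
    """'2.4.49' -> (2, 4, 49, 0). Assumes purely numeric dotted versions."""
    parts = [int(p) for p in text.split(".")]
    return tuple(parts + [0] * (width - len(parts)))  # pad so '5.0' == '5.0.0'


# Hypothetical advisory feed: affected if introduced <= version < fixed.
ADVISORIES = [
    {"id": "HYPOTHETICAL-ADV-001", "product": "examplehttpd", "introduced": "2.4.0", "fixed": "2.4.52"},
    {"id": "HYPOTHETICAL-ADV-002", "product": "examplehttpd", "introduced": "2.2.0", "fixed": "2.2.34"},
    {"id": "HYPOTHETICAL-ADV-003", "product": "examplecms", "introduced": "5.0", "fixed": "5.8.3"},
]


def match_advisories(stack: dict[str, str]) -> list[str]:
    """Return candidate advisories for a discovered {product: version} stack."""
    candidates = []
    for adv in ADVISORIES:
        version = stack.get(adv["product"])
        if version is None:
            continue  # product not observed on this target
        v = parse_version(version)
        if parse_version(adv["introduced"]) <= v < parse_version(adv["fixed"]):
            candidates.append(f'{adv["id"]}: {adv["product"]} {version}')
    return candidates


# Hypothetical fingerprint from the recon phase.
discovered = {"examplehttpd": "2.4.49", "examplecms": "5.9.1"}
print(match_advisories(discovered))
# ['HYPOTHETICAL-ADV-001: examplehttpd 2.4.49']
```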

What Snipe does not do, and what no autonomous agent reliably does in 2026, is decide which findings actually matter to the customer's business. A senior pentester reads Snipe's output and asks two questions: which of these would change the customer's security posture in a way that warrants a critical-severity rating? Which patterns of weakness imply a deeper structural issue worth a follow-up engagement? Those judgments are still human-only. Snipe makes our pentesters faster; it does not make them dispensable.

Where this puts XBow, and where this puts Stingrai

XBow's positioning is consistent and credible: continuous AI-led pentesting at machine speed, valuable for organizations that need broad coverage often, want a self-service procurement experience, and are willing to invest in their own internal triage capability to validate AI findings against their business context. That is a real market, and XBow has won it cleanly.

Stingrai's positioning is also consistent and credible: human-led engagements augmented by AI, valuable for organizations that need depth on hard categories, want a senior pentester named on their engagement, and value findings that come with research-grade context (published CVEs, public conference talks, peer-reviewed disclosures). That is a different market, and we are committed to winning it.

Both market segments will grow in 2026, and they are largely complementary rather than substitutional. We expect to see organizations purchase both: XBow-class AI tooling for continuous coverage, Stingrai-class human-AI hybrid engagements for scoped depth. The 2026 winner is the buyer who structures procurement to use each for what it does best.

Frequently asked questions

Is XBow right that AI is making hacking open to all?

Partly. The XBow benchmark showing GPT-5.5 missing only 10 percent of known vulnerabilities, against 40 percent for GPT-5 in August 2025, plus the autonomous bug-bounty agent reaching HackerOne US #1 in 90 days, are real evidence that AI is raising the floor. HackerOne's 9th HPSR found 70 percent of researchers use AI tools, and Bugcrowd's 2026 study found 82 percent. The floor is genuinely higher in 2026. Where Stingrai's perspective diverges is on the ceiling: HackerOne data shows 58 percent of researchers say AI still misses business logic and chained exploits, and only 12 percent believe AI could replace them. The story for 2026 is human-AI hybrid, not pure-AI replacement.

What did Anthropic's GTG-1002 disclosure prove about AI hacking?

Anthropic disclosed GTG-1002 on November 13, 2025 as the first AI-orchestrated cyber espionage campaign at scale. A Chinese state-sponsored group used Claude Code in an agentic framework to autonomously execute roughly 80 to 90 percent of tactical work against approximately 30 organizations, issuing thousands of requests (often several per second), with only 4 to 6 human decision points per campaign. The disclosure showed that end-to-end AI agentic operations are operationally feasible. Anthropic also documented Claude overstating findings and fabricating data, and only a handful of targets were actually compromised. Even at the cutting edge of attacker AI, human oversight was still required.

Are AI agents better than human pentesters?

AI agents are better than human pentesters at volume, speed, and scope coverage on known vulnerability classes. XBow's 90-day path to HackerOne US #1 with approximately 1,060 submissions illustrates the volume advantage. Human pentesters remain better than AI agents at understanding business context, chaining vulnerabilities into a real impact, finding novel logic flaws, and making judgment calls about what matters to a particular organization. Public researcher surveys (HackerOne, Bugcrowd) consistently find a strong majority of senior researchers say AI augments their workflow without replacing it. The 2026 baseline is hybrid: AI floor, human ceiling.

What types of vulnerabilities does AI find well versus poorly?

AI agents perform well on pattern-driven classes: cross-site scripting, SQL injection, exposed secrets, command injection, basic IDOR, S3 bucket misconfiguration, known-CVE matching, subdomain takeover. These are the categories where XBow racked up high submission volumes. AI agents perform poorly on classes that require business context: privilege flow flaws, authorization logic specific to an industry or product, multi-step exploit chains where each step depends on understanding the prior result, financial logic abuse, and any vulnerability where impact is determined by application intent rather than code pattern. CyberScoop's analysis of XBow noted the agent excels at volume but struggles with creativity, context, and business logic.

What is Stingrai's position on AI hacking?

Stingrai's position is that AI raises the floor (XBow is right) but human-AI hybrid raises the ceiling. As a 2021-founded offensive-security firm with 18 published CVEs, 5.0/5.0 across 19 Clutch reviews, and an internal AI agent (Snipe) trained on more than 6,000 HackerOne reports, Stingrai uses AI to accelerate recon, known-class detection, and report drafting. Senior pentesters keep ownership of scope judgment, business-logic discovery, exploit chaining, impact framing, and remediation guidance. The economic implication for buyers is that pure-AI tools are a baseline expectation in 2026, not a differentiator; the human-AI hybrid stack is where real engagement value sits.

What does the HackerOne 9th Annual Hacker-Powered Security Report say about AI?

HackerOne's 9th Annual Hacker-Powered Security Report (October 2025) identified the rise of the "bionic hacker": researchers who augment their workflow with AI rather than being replaced by it. Key findings:
- 70 percent of researchers use AI tools.
- 1,121 customer programs included AI in scope, up 270 percent year over year.
- Valid prompt-injection reports rose 540 percent.
- Autonomous hackbots submitted 560+ valid reports.
- HackerOne paid 81 million dollars in bounties, up 13 percent.
- By HackerOne's Return on Mitigation methodology, 3 billion dollars in breach losses were avoided.

The report also found that 58 percent of researchers say AI misses business logic and chained exploits, and only 12 percent believe AI could replace them.

What should buyers look for in an AI-augmented pentest provider in 2026?

Buyers should look for evidence of three things. First, a real human-AI hybrid model: published CVEs, named senior pentesters, and demonstrated business-logic findings on prior engagements, not just automated scan output. Second, transparent AI tooling: which agent or model is used for which task, what guardrails apply, how false-positive rates are managed, and how findings are validated. Third, alignment with public risk taxonomies and current threat intelligence. That means awareness of the OWASP LLM Top 10 v2025, MITRE ATLAS coverage, and named threat-intel context. Examples include Anthropic's GTG-1002 disclosure, IBM's finding that AI was used in 16 percent of breaches, and CrowdStrike's 89 percent year-over-year increase in AI-enabled attacks. A provider that can only describe its AI capability in marketing terms is operating below the 2026 floor.

Will AI agents replace bug-bounty researchers?

Not in 2026. The data is consistent across HackerOne (12 percent of researchers think AI could replace them) and Bugcrowd (the 2026 report explicitly framed the year as the era of "human-augmented intelligence"). Autonomous agents like XBow change the economics: bounty programs that used to reward broad sweeping for known classes will pay less for those classes and more for chained, business-logic, and novel findings that AI still misses. The researcher who pivots to AI-augmented workflows on hard categories will earn more in 2026 than in 2024; the researcher who sticks to known-class hunting will earn less.

Why is Stingrai writing in response to XBow?

XBow is one of the most visible voices in the AI-offensive-security category and has done genuinely impressive work, including reaching the top of HackerOne's US leaderboard, publishing benchmarks against frontier models, and shipping a self-service AI pentest product. Stingrai is a 2021-founded offensive-security firm running human-led pentests augmented with internal AI tooling (Snipe). The two postures are complementary rather than oppositional. XBow's argument that AI is democratizing offensive capability is correct on the floor; Stingrai's contribution to the conversation is that the ceiling is moving differently. This post engages XBow's argument on the merits and adds the pentester-side ground truth that buyers and researchers need to make smart 2026 decisions.

Where can I read more from Stingrai on AI security?

Stingrai's two companion posts cover the broader AI cybersecurity landscape. The AI Cyber Attack Statistics 2026 post covers the offensive side. It compiles 89 verified figures from 26 named primary publishers, including the full GTG-1002 disclosure detail and Anthropic's August 2025 report. The AI Cybersecurity Threats 2026 post is the defender-side reference set. It covers the OWASP LLM Top 10 v2025, MITRE ATLAS v5.4.0, the NIST AI RMF, the EU AI Act timeline, and named defender-stack tooling. Stingrai's PTaaS and web application penetration testing services are where the human-AI hybrid posture shows up in actual engagements.

The bottom line

XBow is correct that AI is genuinely raising the floor for offensive security, and the data backs that up emphatically. The evidence:
- GPT-5.5 missed only 10 percent of known vulnerabilities on the XBow benchmark.
- 70 percent of HackerOne researchers use AI tools, as do 82 percent of Bugcrowd researchers.
- Anthropic's GTG-1002 disclosure demonstrated end-to-end agentic AI capability at the nation-state level.

The floor is unambiguously higher than it was in 2024.

What XBow's framing leaves implicit is that the ceiling has not moved as far as the floor. HackerOne's own data, Bugcrowd's own data, CyberScoop's analysis, and Anthropic's own GTG-1002 nuances all converge on the same picture: pure-AI agents are excellent at volume on known classes and weaker on the categories that matter most for high-impact findings. The 2026 baseline is hybrid, not pure-AI.

For Stingrai, the practical conclusion is straightforward. Use AI to do the obvious work fast (recon, known-class detection, report drafting). Reserve senior pentester time for the work that still requires human judgment (business logic, exploit chaining, novel patterns, impact framing). Be transparent with customers about which is which. Publish CVEs to keep the human-research bar visible. Do not pretend AI is a silver bullet; do not pretend humans are irreplaceable on routine work. The two are complementary, and the firms that align their offering to that reality will win the next two years.

XBow's argument deserves engagement. "Open to all" holds true on the floor. The ceiling is still where humans live. The real story of 2026 is not AI versus humans. It is the bionic-hacker baseline rising to meet a moving threat landscape, with the firms, researchers, and buyers who adapt fastest setting the pace for the rest.

References

XBow. "GPT-5.5: Mythos-Like Hacking, Open To All". April 2026.

XBow. "The road to Top 1: How XBOW did it". July 2025.

XBow. "Announcing XBOW Pentest On-Demand for Security at Machine Speed". November 12, 2025.

XBow. "XBOW Raises $120M to Scale its Autonomous Hacker". March 18, 2026.

XBow. "Smaller Bites, Bigger Meals: What We Learned Running Opus 4.7 in Offensive Workflows". April 2026.

HackerOne. "9th Annual Hacker-Powered Security Report". October 2025.

HackerOne. "HackerOne Report Finds 210% Spike in AI Vulnerability Reports Amid Rise of AI Autonomy" press release. October 2025.

HackerOne. "The Top Researcher Signals From HackerOne's 2025 HPSR". October 2025.

Bugcrowd. "Inside the Mind of a Hacker 2026". January 2026.

Bugcrowd. "AI Adoption Climbs to 82%" press release. January 2026.

Anthropic. "Disrupting the first reported AI-orchestrated cyber espionage campaign". November 13, 2025.

Anthropic. "Detecting and countering misuse of AI: August 2025". August 27, 2025.

Anthropic. "Introducing Claude Opus 4.7". April 16, 2026.

OpenAI. "Introducing GPT-5.5". April 2026.

IBM. "Cost of a Data Breach Report 2025" press release. July 30, 2025.

IBM. "2025 Cost of a Data Breach Report: Navigating the AI rush". July 2025.

CrowdStrike. "2025 Global Threat Report". February 2025.

CrowdStrike. "2026 Global Threat Report findings". February 2026.

CrowdStrike. "Charlotte AI Detection Triage" press release. February 2025.

Mandiant / Google Cloud. "M-Trends 2026". March 2026.

Microsoft. "Cyber Signals Issue 9: AI-Powered Deception". April 2025.

Microsoft. "Security Copilot for SOC: bringing agentic AI to every defender". November 2025.

Microsoft. "From alert overload to decisive action: How Security Copilot agents are transforming security and IT". 2025-2026.

World Economic Forum. "Global Cybersecurity Outlook 2026". January 2026.

UK AI Security Institute. "Our evaluation of Claude Mythos Preview's cyber capabilities". 2026.

OWASP. "Top 10 for LLM Applications v2025". 2025.

MITRE. "ATLAS adversarial AI knowledge base". January 2026 update.

CyberScoop. "Is XBOW's success the beginning of the end of human-led bug hunting? Not yet". 2025.

The New Stack. "Mythos-like hacking, open to all: Industry reacts to OpenAI's GPT 5.5". April 2026.

TrendingTopics. "GPT-5.5 Matches Anthropic's Secret Hacking Model 'Mythos'". April 2026.

RD World Online. "How OpenAI's recently released GPT-5.5 stacks up with Anthropic's gated Claude Mythos". April 2026.

TechRepublic. "AI Bug Hunter Sets Milestone By Claiming Top Spot on HackerOne's Leaderboard". June 2025.

CSO Online. "The top red teamer in the US is an AI bot". 2025.

Stingrai. AI Cyber Attack Statistics 2026.

Stingrai. AI Cybersecurity Threats 2026.