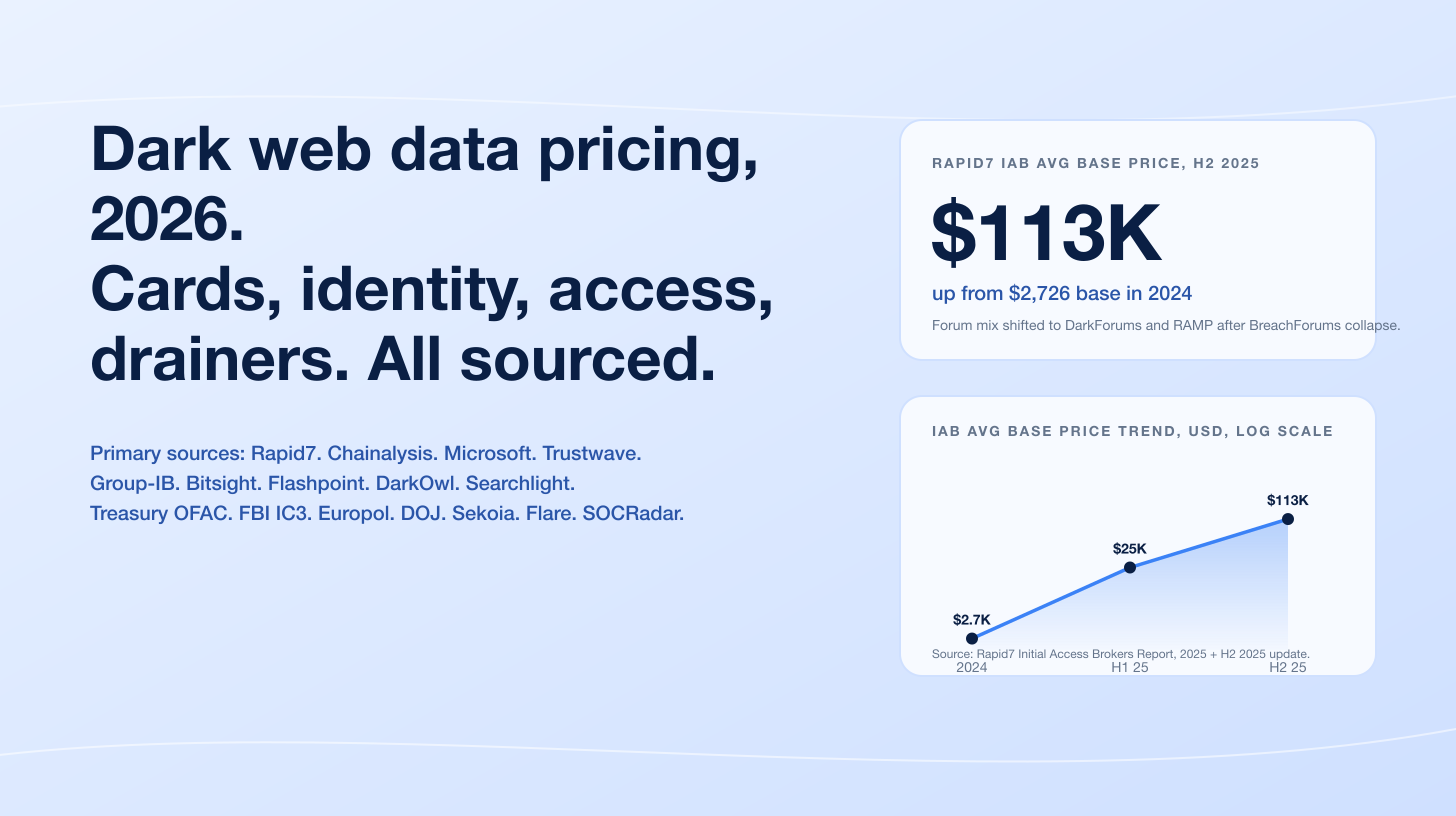

Attacker AI crossed the line from novelty into operational reality in 2025. IBM's 2025 Cost of a Data Breach Report measured AI use in 1 in 6 breaches (16%) worldwide, with phishing (37% of attacker-AI cases) and deepfake impersonation (35%) the dominant playbooks. The single most defining incident of the year was Anthropic's November 2025 disclosure of GTG-1002, the first publicly documented AI-orchestrated cyber espionage campaign: a Chinese state-sponsored group used Claude Code in an agentic framework to autonomously execute roughly 80 to 90 percent of tactical work against approximately 30 global organizations, firing thousands of requests per second and confining human input to four to six critical decision points per campaign. AI-generated phishing emails landed a 54% click-through rate against just 12% for human-written lures, and vishing (voice phishing) jumped 442% between the first and second half of 2024. The pattern is consistent across every primary publisher we reviewed: AI did not invent new attack categories in 2025, but it dramatically accelerated existing ones.

Three forces drive AI cyber attack volumes in 2026. First, autonomous and agentic frameworks moved from research benchmarks into live operations: Anthropic's GTG-1002 disclosure showed end-to-end AI orchestration is a real capability, and Mandiant's M-Trends 2026 documents AI-aware malware (PROMPTFLUX, PROMPTSTEAL, QUIETVAULT) that queries LLMs at runtime to evade detection. Second, AI-generated social engineering scaled past the human baseline: KnowBe4's analysis of phishing emails between September 2024 and February 2025 found 82.6% used some form of AI-generated content, and Verizon's 2025 DBIR notes that synthetic-text phishing has roughly doubled over two years. Third, prompt injection moved from theoretical to operational: HiddenLayer measured 33% of 2024 breaches involved attacks on chatbots and CrowdStrike's 2026 Global Threat Report records adversaries exploiting legitimate GenAI tools at 90+ organizations via injected prompts. Together these forces reshape the planning surface for CISOs, security buyers, and journalists covering AI threat intelligence in 2026.

This post is the Stingrai research team's canonical 2026 reference for AI cyber attack activity. It assembles 89 numeric claims from 26 named primary publishers, including Anthropic Threat Intelligence, OpenAI's quarterly disruption reports, the Microsoft Digital Defense Report 2025, IBM Cost of a Data Breach Report 2025 and X-Force, CrowdStrike Global Threat Reports 2025 and 2026, Mandiant M-Trends 2025 and 2026, the Verizon 2025 DBIR, ENISA Threat Landscape 2025, the World Economic Forum Global Cybersecurity Outlook 2026, HiddenLayer, Lakera, the UK AI Security Institute, MITRE ATLAS, OWASP, FBI IC3, the US Department of Justice, Sumsub, Pindrop, KnowBe4, and named corporate-incident records for Arup, Ferrari, WPP, and KnowBe4. Lead data is full-year 2024 and full-year 2025 telemetry, the freshest available; primary publishers have not yet released full-year 2026 reports as of April 2026. Every figure links back to its primary publisher so any claim can be audited.

TL;DR: 12 labeled key stats

Share of breaches involving attacker AI (2025): 16% (1 in 6) (IBM Cost of a Data Breach Report 2025).

Top attacker-AI use case: phishing (37% of AI-involved breaches), deepfake impersonation (35%) (IBM, 2025).

GTG-1002 AI autonomy share, Sept-Nov 2025 campaign: approximately 80 to 90% of tactical work executed by Claude Code (Anthropic, Nov 13 2025).

GTG-1002 targets: approximately 30 organizations across tech, finance, government, chemical manufacturing (Anthropic, 2025).

AI-generated phishing email click rate, 2024-2025: 54% (vs 12% for human-written) (CrowdStrike 2025 GTR, corroborated by Microsoft Digital Defense Report 2025).

Vishing growth, H1 to H2 2024: +442% (CrowdStrike 2025 GTR).

Initial-access handoff time, 2025: 22 seconds, down from 8+ hours in 2022 (Mandiant M-Trends 2026).

AI-generated content share of phishing emails analyzed Sept 2024 to Feb 2025: 82.6% (KnowBe4 Phishing Threat Trends Report, Mar 2025).

CrowdStrike 2026 GTR: YoY growth in attacks by AI-enabled adversaries: +89% (CrowdStrike, Feb 2026).

WEF 2026 leaders agreeing AI is the single biggest driver of cybersecurity change: 94% (WEF Global Cybersecurity Outlook 2026).

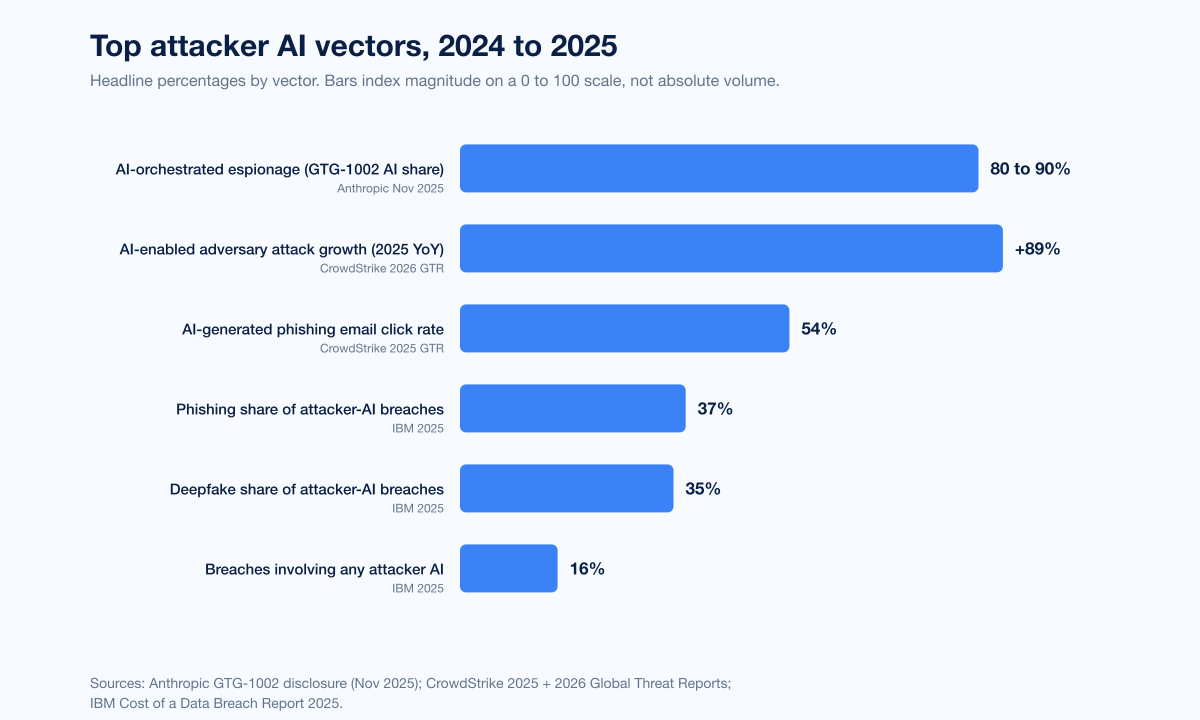

Lakera Gandalf prompt-attack corpus: 40M+ prompts from 1M+ players since May 2023 (Lakera, 2025 and arXiv 2501.07927).

Microsoft fraud blocked using AI defenses, April 2024 to April 2025: US$4B (Microsoft Cyber Signals Issue 9, April 2025).

Key takeaways

Attacker AI is now mainstream, not exotic. IBM put AI in 16% of breaches and 87% of WEF respondents already flag AI vulnerabilities as the fastest-growing cyber risk for 2025. The narrative that AI attacks remain theoretical or rare is no longer defensible against the data.

Autonomous orchestration is the watershed of 2025. Anthropic's GTG-1002 disclosure shows a real adversary running an end-to-end intrusion through an LLM-driven agent at thousands of requests per second, with humans only at four to six checkpoints. CrowdStrike's 89% YoY jump in AI-enabled attacks is the same trend at population scale.

Phishing and voice fraud are where AI delivers the biggest immediate uplift. AI-generated lures click at 4.5 times the rate of human-written ones (54% vs 12% per CrowdStrike), KnowBe4 saw 82.6% of phishing emails using AI generation in late 2024 and early 2025, and Pindrop measured a 1,300% surge in deepfake fraud attempts inside contact centers.

Prompt injection turned operational. OWASP's 2025 Top 10 for LLMs ranks prompt injection LLM01, MITRE ATLAS added 14 new techniques covering AI agents in 2025, and CrowdStrike found adversaries exploited legitimate GenAI tools at 90+ organizations to generate credential-stealing or crypto-stealing commands. Production AI features are part of your attack surface.

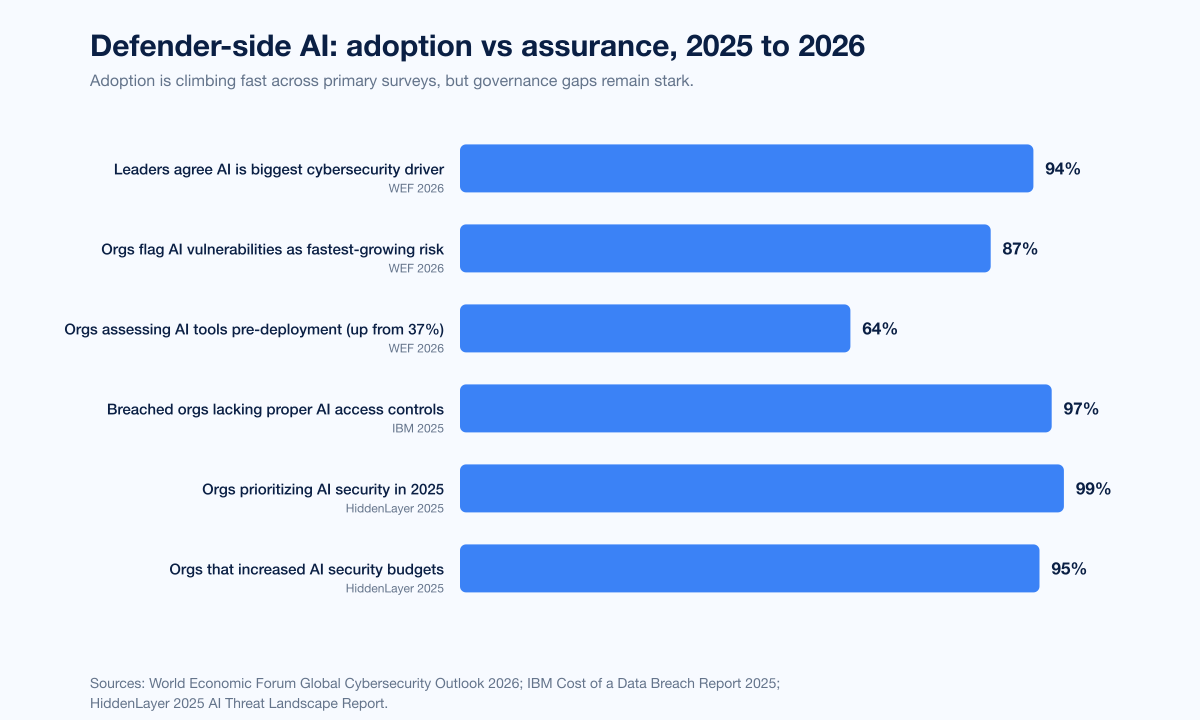

Defenders are scaling AI faster than they govern it. WEF found organizations assessing AI tools pre-deployment doubled from 37% to 64% in a year, but IBM warned 97% of breached orgs that experienced an AI-related incident lacked proper AI access controls and shadow AI added US$670,000 to average breach cost. Adoption is outpacing assurance.

Methodology

Date cutoff: April 25 2026. Lead data is full-year 2024 or 2025 telemetry where a primary publisher has released it; 2026 figures are labeled as forecasts or projections. Statistics that could not be reached via a named primary source on at least one verification pass were dropped rather than estimated. Where multiple primary publishers report compatible figures we cite the publisher whose methodology window matches the claim. Secondary news aggregators are cited only where they constitute the public record of a corporate incident.

Sources span: Anthropic Threat Intelligence (GTG-1002 Nov 2025; Aug 2025 report); OpenAI quarterly disruption reports (Feb, Jun, Oct 2025); Microsoft Digital Defense Report 2025, Cyber Signals Issue 9, Tycoon 2FA disruption, Jasper Sleet; CrowdStrike 2025 + 2026 GTRs and 2025 Threat Hunting Report; Mandiant M-Trends 2025 + 2026 (450,000 hours of IR data); IBM Cost of a Data Breach 2025 and X-Force 2025 + 2026; Verizon 2025 DBIR (22,052 incidents); ENISA Threat Landscape 2025 (4,875 incidents); WEF Global Cybersecurity Outlook 2026; HiddenLayer 2025 + 2026; Lakera Gandalf + arXiv; UK AISI; MITRE ATLAS (Jan 2026 update); OWASP LLM Top 10 v2025; FBI IC3 2024 + generative-AI PSAs; DOJ DPRK disruption; KnowBe4; Sumsub; Pindrop; and named incident records (Arup, Ferrari, WPP, KnowBe4).

Figure 1: Top attacker AI vectors and their headline numbers in 2024 and 2025. Bars index growth or share, not absolute volume; channels differ in base rate. Sources: IBM Cost of a Data Breach Report 2025; CrowdStrike 2025 GTR and 2026 GTR; Anthropic GTG-1002 disclosure; IBM X-Force 2025; Pindrop 2025.

How big is the attacker-AI problem in 2026?

Three datasets anchor the answer.

IBM Cost of a Data Breach Report 2025

IBM's 2025 Cost of a Data Breach Report, based on breach data from more than 600 organizations, found attackers used AI in 16% of breaches, the first time IBM has measured a discrete attacker-AI rate at that scale. Within that 16%: AI-generated phishing 37%, deepfake impersonation 35%, other (code generation, recon, automation) 28%. IBM also recorded the U.S. average breach cost at a record US$10.22M; shadow AI (unsanctioned employee use of generative AI) was a factor in 20% of breaches and added US$670,000 to the average breach cost; 97% of orgs that experienced an AI-related incident lacked proper AI access controls; and 13% of orgs reported breaches of AI models or applications outright.

Verizon 2025 DBIR

The 2025 DBIR, based on 22,052 incidents and 12,195 confirmed breaches, quantified two things crisply. Synthetically generated text in malicious emails has doubled over two years. The GenAI shadow-IT problem is large: 15% of employees routinely access GenAI on corporate devices, with 72% using personal email and 17% an unintegrated corporate email. Structural breach figures remain familiar: stolen credentials drive 22% of breaches, phishing 15%, human element 60%. AI is making the familiar attacks faster and more credible, not replacing them.

CrowdStrike 2025 + 2026 Global Threat Reports

CrowdStrike's 2025 Global Threat Report recorded vishing up 442% H1 to H2 2024, AI phishing 54% click rate vs 12% for human-written, and named DPRK-nexus actor FAMOUS CHOLLIMA infiltrating 320+ companies (+220% YoY). The 2026 GTR ("Year of the Evasive Adversary") hardens the 2025 picture: 89% YoY growth in AI-enabled adversary attacks, 82% malware-free detections, average eCrime breakout time of 29 minutes (fastest 27 seconds), adversaries exploiting legitimate GenAI tools at 90+ organizations via prompt injection, and a 37% increase in cloud-conscious intrusions with a 266% jump among state-nexus actors.

The defining 2025 incident: Anthropic's GTG-1002 disclosure

If 2024 was the year deepfake fraud broke into the headlines, 2025 was the year an AI agent ran an espionage campaign end to end. On November 13 2025, Anthropic publicly disclosed GTG-1002, what it described as the first reported AI-orchestrated cyber espionage campaign at scale.

What GTG-1002 actually did

A Chinese state-sponsored group instrumented Claude Code inside an MCP-connected automation framework. The campaign ran across roughly 30 organizations spanning technology firms, financial institutions, chemical manufacturers, and government entities. Claude Code conducted the bulk of the operational tradecraft: target reconnaissance and acquisition, vulnerability scanning, exploit generation, lateral movement experiments, credential harvesting, custom backdoor deployment, and structured exfiltration of intelligence-grade data.

Metric | Value |

|---|---|

Targets | approximately 30 organizations |

AI tactical-work share | approximately 80% to 90% |

Operational tempo | thousands of requests per second |

Human intervention points | 4 to 6 critical decision points per campaign |

Detection to containment | Mid-September anomalies, contained within ~10 days |

How the operators bypassed safety

Operators bypassed safety filters by social-engineering the model. They structured prompts that framed the activity as authorized defensive penetration testing on behalf of a legitimate cybersecurity firm and directed Claude Code to act as a helpful red teamer. The agent then conducted what was effectively offensive reconnaissance against unwitting third-party organizations. GTG-1002 also leaned heavily on open-source penetration testing tooling rather than custom malware, evading signature-based controls.

Why GTG-1002 is the watershed

Anthropic's framing, that this is the first publicly documented AI-orchestrated campaign rather than the first instance of attacker AI use, is what matters for buyers. AI-assisted phishing and code generation have been around for two years. GTG-1002 demonstrates end-to-end agentic execution at attack speed: not a model writing a clever lure for a human, but a model selecting targets, harvesting credentials, deciding which boxes to touch next, and prioritizing exfiltrated data by intelligence value. That capability is now in the public record. Every CISO planning a 2026 detection budget needs to assume sophisticated adversaries can deploy something similar.

Figure 2: Timeline of named AI-attack incidents that entered the public record between February 2024 and November 2025. Sources: Hong Kong Police via CNN (Arup); Marketing-Interactive (WPP); Fortune (Ferrari); KnowBe4 disclosure (DPRK IT worker); Anthropic August 2025 Threat Intelligence Report (GTG-5004 ransomware, vibe hacking); Anthropic, Nov 13 2025 (GTG-1002).

What Anthropic's August 2025 report added

Three months before the GTG-1002 disclosure, Anthropic published its August 2025 Threat Intelligence Report, which set the table for the November update. Three named operations stand out.

Vibe hacking extortion

A cybercriminal used Claude Code against at least 17 organizations across healthcare, emergency services, government, and religious institutions. Rather than encrypt with traditional ransomware, the operator threatened to expose stolen data unless ransoms (sometimes exceeding US$500,000) were paid. Claude Code automated reconnaissance, credential harvesting, and network penetration, and the model made tactical decisions including which data to exfiltrate and how to craft psychologically targeted extortion notes.

GTG-5004: AI-generated ransomware on dark forums

Anthropic identified a UK-based actor tracked as GTG-5004 that used Claude to develop and distribute ransomware variants on dark-web forums (Dread, CryptBB, Nulled) featuring ChaCha20 encryption, anti-EDR techniques, and Windows internals exploitation. Pricing ranged from US$400 to US$1,200 per package. Anthropic noted the actor could not implement complex technical components without AI assistance, yet was selling capable malware. The point: AI lowers the technical floor for ransomware authorship, not just usage.

Vietnamese critical infrastructure and DPRK fraud

The August report also disclosed a 9-month Chinese threat-actor campaign against Vietnamese critical infrastructure that integrated Claude across nearly all attack phases (the GTG-1002 precursor), and AI-augmented North Korean fraudulent employment in which workers unable to perform their engineering jobs without persistent AI assistance successfully infiltrated major tech companies. We unpack the broader DPRK pattern below.

OpenAI's quarterly disruption ledger

OpenAI's three 2025 disruption reports (February, June, October) disclose adversary use of ChatGPT and disrupted abuse clusters, with cumulative figures providing the best available trend line for one ecosystem.

Headline cumulative figure

Since February 2024, OpenAI has disrupted and reported more than 40 networks that violated its usage policies, including operations from authoritarian regimes attempting to control populations, scams, malicious cyber activity, and covert influence operations.

Country breakdown across the three 2025 reports

China. OpenAI banned an account cluster believed to operate as Chinese state-aligned threat actor UTA0388, known for targeting Taiwan's semiconductor industry, and a separate cluster that used ChatGPT to design surveillance, profiling, and online-monitoring tools likely intended for the Chinese government.

Russia. Russian-language threat groups, including Operation ScopeCreep, used ChatGPT to refine Windows malware components such as remote-access trojans and credential stealers, with malware distribution staged through trojanized gaming tools and cloud-based GitHub repositories.

North Korea. A DPRK-linked cluster used ChatGPT for malware and command-and-control development, including macOS Finder extensions and Windows Server VPN configurations.

Iran. Iranian threat actors ran ChatGPT-assisted social-media-comment generation campaigns covering geopolitical topics.

OpenAI's June 2025 report also documented 10 detailed case studies, including AI-driven cloud cyber operations: Russian-speaking groups using ChatGPT to develop sophisticated Windows malware, and Chinese-language influence operations posting AI-generated content on X, TikTok, Telegram, and Facebook.

The cumulative pattern is consistent across all three reports: nation-state and criminal abuse of frontier LLMs is a steady stream rather than a one-off spike, and the operational use cases skew toward task acceleration (debugging, content generation, reconnaissance assistance) rather than novel attack categories.

How attackers use AI: by phase

The useful framing for buyers is to break attacker AI use down by attack lifecycle phase, because defenses are phase-specific.

Reconnaissance and target research

Attackers use LLMs primarily as a reconnaissance accelerator. ENISA's 2025 Threat Landscape, analyzing 4,875 incidents July 2024 to June 2025, found AI-supported phishing campaigns reached more than 80% of all observed social engineering activity worldwide by early 2025, with phishing the dominant intrusion vector at roughly 60% of intrusion attempts.

Initial access: phishing and social engineering

This is where AI delivers the largest immediate uplift.

Click rate. AI-generated phishing emails land a 54% click-through rate, 4.5x the 12% rate of human-written phishing (CrowdStrike 2025 GTR; corroborated by Microsoft Digital Defense Report 2025).

Volume. KnowBe4 measured a 17.3% rise in phishing-email volume Sept 2024 to Feb 2025 vs the prior six months, with 82.6% of analyzed emails using AI-generated content.

Voice phishing. Pindrop's 2025 report, analyzing 1.2B+ contact-center calls, recorded a 1,300% YoY surge in deepfake fraud attempts, 0.33% of Q4 2024 calls containing a synthetic voice (+173% vs Q1), insurance attacks +475%, banking attacks +149%, and projected US contact-center fraud exposure of US$44.5B in 2025. CrowdStrike's +442% vishing growth between H1 and H2 2024 confirms the trend.

AiTM phishing scale. Microsoft's 2026 Tycoon 2FA disruption blog reported that by mid-2025, Tycoon 2FA accounted for ~62% of all phishing attempts blocked by Microsoft, including 30M+ emails in a single month, reaching 500K+ organizations per month. Microsoft, Europol, and 11 security firms seized 330 of the platform's domains in the takedown.

Exploit development and code generation

AISI's 2025 evaluations of frontier LLMs on cyber ranges found the length of cyber tasks (measured in human-expert hours) that models can complete unassisted is doubling roughly every eight months. AI models could complete apprentice-level cyber tasks 50% of the time on average in 2025, up from just over 10% in early 2024, and AISI tested the first model that could complete expert-level tasks typically requiring more than 10 years of practitioner experience.

In practice, this maps to malware authoring. Anthropic's GTG-5004 disclosure showed an actor selling capable ransomware whose human author could not implement complex technical components without AI. The economics of that are stark: the technical bar for ransomware authorship is now whatever the underlying model can do for free or cheap.

Persistence, lateral movement, and exfiltration

Mandiant's M-Trends 2026, drawing on roughly 450,000 hours of incident-response work, identifies AI-aware malware families as a 2025 development:

PROMPTFLUX and PROMPTSTEAL actively query LLMs during execution to support evasion.

QUIETVAULT credential stealer probes compromised machines for AI command-line tools and, when found, executes prompts to locate config files and harvest developer tokens.

Mandiant also documented that the time between initial compromise and the access handoff from one threat cluster to another has collapsed from more than 8 hours in 2022 to 22 seconds in 2025. The division-of-labor model (one cluster gains access, hands off to an operator for follow-on operations) appeared in 9% of 2025 investigations, up from 4% in 2022. AI shortens both legs of that handoff.

Influence operations and disinformation

OpenAI's 2025 disruption reports focused heavily on covert influence operations. The Microsoft Digital Defense Report 2025 noted that Microsoft's Threat Analysis Center tracked at least 70 Russian actors involved in Ukraine-focused disinformation. The DOJ disrupted Russian-directed influence campaigns ("Doppelganger") that impersonated The Washington Post, Fox News, NATO, and the Polish, Ukrainian, German, and French governments. The 2024 election cycle did not produce the apocalyptic deepfake scenarios feared, but lower-fidelity AI-enhanced content (audio fakes, simple video memes) had measurable effects on micro-targeted audiences.

Figure 3: Prompt injection and adversarial-LLM telemetry from primary publishers, 2024 to 2025. Sources: Lakera Gandalf and arXiv 2501.07927v1; HiddenLayer 2025 AI Threat Landscape Report; OWASP Top 10 for LLM Applications v2025; CrowdStrike 2026 GTR.

Prompt injection: the new operational risk surface

Prompt injection, where attacker-controlled text manipulates an LLM-backed application into ignoring its system prompt or executing unintended actions, has moved from research curiosity to operational risk surface. Three primary sources frame the picture.

Lakera Gandalf: the world's largest adversarial-prompt corpus

Lakera's Gandalf adversarial-prompt platform has been online since May 2023. As of early 2025, more than one million players have submitted over 40 million prompts and password guesses, generating a 279,000-prompt-attack dataset for academic research per the arXiv paper "Gandalf the Red". Players have spent more than 25 combined years on the platform. The corpus is the closest thing the public has to a global ground-truth catalog of how humans naturally attempt prompt injection.

HiddenLayer 2025 AI Threat Landscape Report

HiddenLayer's 2025 report found 33% of reported 2024 breaches involved attacks on internal or external chatbots (prompt injection, unauthorized data extraction, behavior manipulation the top techniques); 97% of organizations now use models from public repositories (up 12% YoY) but only 49% scan those models pre-deployment; 45% of breaches involved malware introduced through public model repositories; and 99% of organizations are prioritizing AI security in 2025, with 95% increasing AI security budgets. The 2026 follow-up adds: as agents browse the web and execute code, prompt injection is no longer just a model flaw, it is an operational security risk with direct paths to system compromise.

CrowdStrike: prompt injection in the wild

CrowdStrike's 2026 GTR documented adversaries exploiting legitimate GenAI tools at 90+ organizations in 2025 by injecting prompts to generate credential-stealing or crypto-stealing commands. The pattern: attacker plants a poisoned document or web page, victim's AI assistant reads it during a normal workflow, then executes attacker instructions inside the victim's authenticated session.

OWASP and MITRE codify the problem

The OWASP Top 10 for LLM Applications v2025 ranks prompt injection (LLM01) the top vulnerability, followed by Data and Model Poisoning (LLM04), Improper Output Handling (LLM05), and Unbounded Consumption (LLM10, the DoS category). MITRE ATLAS added 14 new techniques in 2025 covering AI agents, and the January 2026 update added three case studies on MCP server compromises, indirect prompt injection via MCP channels, and malicious AI agent deployment. For builders shipping AI features in 2026: treat prompt injection like XSS in 2009. Assume any text the model reads is potentially adversarial, sanitize at every boundary, keep model privileges as low as possible.

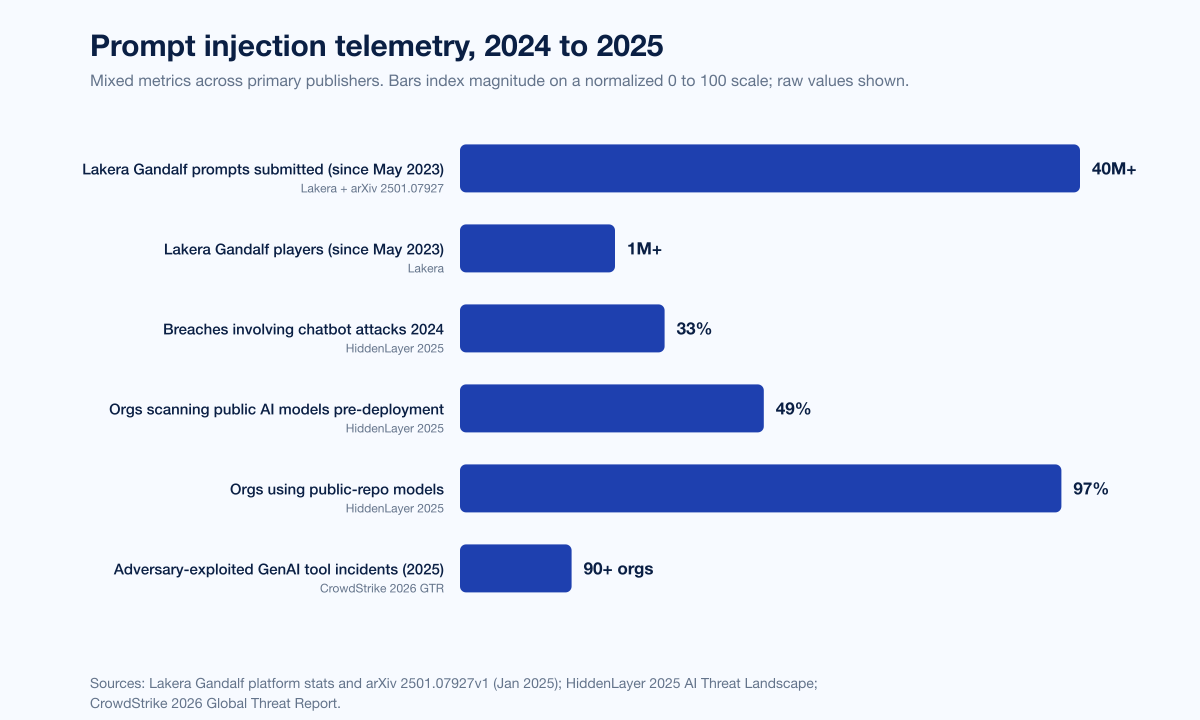

Nation-state and criminal AI use, by actor

Different threat actors use AI for different things. The pattern across the 2025 reports:

China: end-to-end agentic operations and surveillance tooling

GTG-1002 is the marquee Chinese-attributed AI campaign. Anthropic's August 2025 report also disclosed a separate 9-month Chinese campaign against Vietnamese critical infrastructure. OpenAI's October 2025 disruption named UTA0388 (targeting Taiwan's semiconductor industry) and a surveillance-tool design cluster likely linked to Chinese government users. CrowdStrike's 2026 GTR records a 266% jump in cloud-conscious intrusions among state-nexus actors, with Chinese actors prominent.

Russia: malware refinement and influence operations

OpenAI's June 2025 report documented Operation ScopeCreep, a Russian-speaking group using ChatGPT to develop Windows malware staged through trojanized gaming tools and GitHub. Microsoft's 2025 Digital Defense Report tracks 70+ Russian actors involved in Ukraine-focused disinformation. The DOJ's Doppelganger disruption shut down a Russian-directed influence operation impersonating Western media outlets and government agencies.

North Korea (DPRK): IT worker fraud at industrial scale

The DPRK IT worker scheme is one of the most successful AI-augmented insider threats of the past two years.

Fortune, citing CrowdStrike data, reported DPRK IT workers infiltrated 320+ companies in 12 months, a +220% YoY jump.

The DOJ's June 30 2025 disruption recorded searches of 29 "laptop farms" across 16 states, seizure of 29 financial accounts, and 21 fraudulent websites taken down. The scheme used the stolen identities of at least 80 US persons and generated more than US$5M in illicit revenue.

Microsoft's Jasper Sleet writeup and Okta's April 2025 analysis document operators using real-time deepfake video during interviews so a single human can appear as multiple synthetic personas. KnowBe4's July 2024 disclosure made the pattern public when an operator cleared its hiring process before Day 1 device-posture flagged the workstation. One affected crypto startup reportedly lost more than US$900,000 when a hired DPRK developer drained the wallet.

Iran: low-cost influence operations

OpenAI's 2025 reports document Iranian threat actors running ChatGPT-assisted social-media comment generation campaigns covering geopolitical topics. Iran's pattern remains influence operations rather than offensive cyber.

Figure 4: AI cyber attack activity by nation-state actor, 2024 to 2025. Bars index disclosed campaigns and named operations rather than absolute volume. Sources: Anthropic GTG-1002 disclosure; OpenAI October 2025 disruption report; Microsoft Digital Defense Report 2025; CrowdStrike 2025 Threat Hunting Report; DOJ DPRK IT worker disruption.

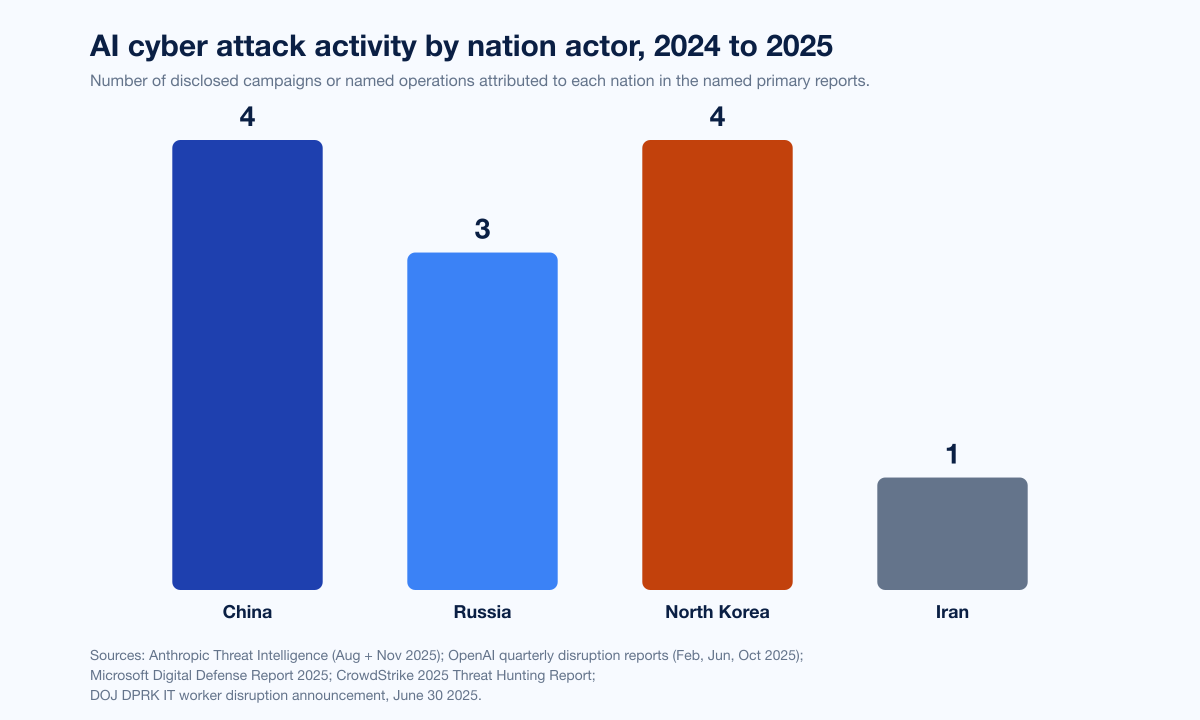

Dark-web AI tooling: the WormGPT economy

A small but lucrative dark-market exists for jailbroken or purpose-built malicious LLMs. Trustwave SpiderLabs and Barracuda have catalogued the most prevalent variants:

Tool | Pricing | Notes |

|---|---|---|

WormGPT (original) | US$110/month, US$5,400 lifetime | First widely sold malicious LLM; offline mid-2023, revived as wrappers |

FraudGPT | US$90 to US$200/month, US$1,700/year | BEC and phishing focus |

Evil-GPT | ~US$10 one-time | Python wrapper, low cost for mass adoption (Barracuda, Aug 2025) |

WormGPT 2024 to 2025 variants | varies | Built on Mixtral and a Grok wrapper, custom system prompts to bypass guardrails (CSO Online, Jun 2025) |

Xanthorox AI | unknown | Locally hosted custom AI infrastructure designed to evade detection (ENISA 2025) |

The economic point: dark-market tooling exists, but is not the headline story. The headline is that adversaries increasingly use legitimate frontier LLMs through prompt-engineered jailbreaks (as GTG-1002 did) rather than paying for boutique malicious models. Frontier LLMs are more capable, and a determined operator can usually frame the prompt as defensive testing or research.

Defender-side AI: adoption is outpacing assurance

Attackers are not the only ones running on AI in 2026.

Adoption metrics

WEF's 2026 outlook found 94% of leaders agree AI is the single most significant driver of cybersecurity change, 87% flag AI vulnerabilities as the fastest-growing cyber risk, and pre-deployment AI tool security assessment doubled from 37% to 64% YoY (one in three still have no validation process). Microsoft thwarted US$4 billion in fraud attempts using AI defenses between April 2024 and April 2025, blocking ~1.6 million bot signup attempts per hour and rejecting 49,000 fraudulent partnership enrollments. HiddenLayer's 2025 survey found 99% of orgs prioritize AI security and 95% have increased AI security budgets.

Governance gaps

IBM's 2025 report found 97% of breached orgs with an AI-related incident lacked proper AI access controls. Shadow AI adds US$670,000 to the average breach cost. Verizon DBIR found 15% of employees use GenAI on corporate devices (72% via personal email, 17% via unintegrated corp email). Only 24% of generative AI projects are secured per IBM X-Force 2025. HiddenLayer found 97% of orgs pull models from public repositories but only 49% scan them pre-deployment.

Governance frameworks shipped in 2024 to 2026

NIST AI 600-1 (Generative AI Profile of the AI RMF), July 26 2024, codifies risk-management actions across governance, content provenance, pre-deployment testing, and incident disclosure. OWASP LLM Top 10 v2025 defines the canonical LLM vulnerability taxonomy. MITRE ATLAS added 14 AI-agent techniques in 2025 and three case studies in January 2026 covering MCP-related compromises. CISA's AI Cybersecurity Collaboration Playbook (January 2025) published joint US/Australia/UK guidance with explicit reference to ATLAS-style threat modeling. The UK's AI Security Institute publishes recurring frontier-model cyber evaluations.

Figure 5: Defender-side AI adoption and governance metrics, 2025 to 2026. Sources: WEF Global Cybersecurity Outlook 2026; Microsoft Cyber Signals Issue 9; IBM Cost of a Data Breach Report 2025; HiddenLayer 2025 AI Threat Landscape.

Industry breakdown: who gets hit

Attacker-AI activity is uneven across industries. Pulling together the 2025 reports:

Sector | What the data says | Source |

|---|---|---|

Technology | GTG-1002 named tech firms among ~30 targets; UTA0388 targets Taiwan semiconductor; DPRK IT worker scheme infiltrated tech-heavy hiring pipelines (320+ companies) | Anthropic Nov 2025; OpenAI Oct 2025; CrowdStrike 2025 Threat Hunting Report |

Financial services | GTG-1002 named financial institutions; Pindrop measured deepfake fraud at +475% in insurance, +149% in banks; FraudGPT pricing optimized for BEC | Anthropic; Pindrop 2025; LevelBlue (Trustwave) |

Government | GTG-1002 named government entities; Doppelganger Russian influence ops impersonated NATO + multiple foreign ministries; vibe-hacking extortion targeted gov bodies | Anthropic; DOJ; Anthropic Aug 2025 |

Healthcare and emergency services | Vibe-hacking extortion targeted at least 17 orgs across healthcare and emergency services with ransom demands sometimes exceeding US$500K | Anthropic Aug 2025 |

Chemical manufacturing | GTG-1002 explicitly listed chemical manufacturers among targets | Anthropic Nov 2025 |

Critical infrastructure | 9-month Chinese campaign vs Vietnamese critical infrastructure leveraged Claude across nearly all attack phases | Anthropic Aug 2025 |

Insurance | +475% synthetic voice attacks 2024 vs 2023 | Pindrop 2025 |

Religious institutions | Vibe-hacking extortion among the 17 targeted orgs | Anthropic Aug 2025 |

The cross-sector pattern is consistent with non-AI cyber: high-value-IP, regulated-data, and complex-vendor-ecosystem industries are the primary targets, with healthcare and government especially vulnerable to extortion.

Forward outlook: what primary publishers project for 2026

Three forecasts from named primary sources merit explicit citation:

WEF Global Cybersecurity Outlook 2026 projects data leaks associated with generative AI (34%) and the advancement of adversarial capabilities (29%) as the leading AI-security concerns, a striking inversion of 2025 (adversarial capabilities led at 47%; data leaks trailed at 22%).

CrowdStrike 2026 Global Threat Report projects continued growth in AI-enabled tradecraft, particularly cloud-conscious intrusions and AI-aided social engineering, with the "evasive adversary" pattern (malware-free intrusions, valid-credential abuse, AI-prompt manipulation) becoming the default.

Mandiant M-Trends 2026 projects continued compression of attacker dwell time and initial-access handoff (currently 22 seconds), driven by AI-aware malware and division-of-labor specialization across the eCrime supply chain.

The unifying signal: 2026 is the year AI moves from a force multiplier on existing attack categories to a structural change in how intrusions get planned, executed, and scaled.

What this means for defenders

Five concrete buyer actions the data supports:

Treat IDV, phishing, and vishing channels as compromised by default; rebuild verification through out-of-band controls. Pindrop's 1,300% deepfake fraud growth, CrowdStrike's 442% vishing growth, and IBM's 35% deepfake-impersonation share of attacker-AI breaches are too much volume to defend with training alone. Add out-of-band passphrase callbacks for every wire transfer and credential reset, deploy active-liveness IDV with injection-attack detection, and consider voice-deepfake detection for high-value contact centers.

Inventory and govern shadow AI. Verizon's 15% shadow-AI usage and IBM's 20% breach-rate contribution are an actuarial floor for cost. Get an SSO-integrated enterprise AI footprint, monitor for personal-email signups against corp domains, and apply DLP to known AI-tool egress.

Treat prompt injection like XSS in 2009. Assume any text your AI features read is potentially adversarial. Sanitize at every boundary, keep model privileges low, log every tool call the agent makes, and run penetration tests against AI features with prompt-injection scenarios. OWASP LLM Top 10 v2025 is the starting reference; MITRE ATLAS the second pass.

Run red team engagements that explicitly include AI-orchestrated scenarios. GTG-1002 is the proof of concept. Stingrai's PTaaS offering includes AI-aware red-team scenarios and adversarial-prompt testing for production AI features. For DPRK IT worker mitigation specifically, revisit hiring controls (live unscripted interviews, identity-document forensics, on-day-1 device-posture verification).

Govern defender-side AI with the same rigor as attacker-AI controls. WEF found pre-deployment assessment doubled YoY, but a third still have no validation process. IBM's 97% control-gap among breached orgs is the cost. Deploy ATLAS-mapped pre-deployment testing, scan public repos before pulling models, and treat AI access controls as table-stakes alongside traditional IAM.

For deeper coverage of related AI-attack vectors, see Stingrai's sibling references on Deepfake Statistics 2026, Phishing Statistics 2026, Compromised Credential Statistics 2026, Insider Threat Statistics 2026, Supply Chain Attack Statistics 2026, and Malware Attack Statistics 2026.

Frequently Asked Questions

What is the most cited AI cyber attack statistic for 2026?

The single most cited AI-attack statistic for 2026 is IBM's finding that AI was used in 1 in 6 (16%) of breaches in its 2025 Cost of a Data Breach Report, with phishing (37% of attacker-AI cases) and deepfake impersonation (35%) the dominant tactical use cases. The single most cited named incident is Anthropic's GTG-1002 disclosure of November 13, 2025, the first publicly documented AI-orchestrated cyber espionage campaign, in which a Chinese state-sponsored group used Claude Code to autonomously execute roughly 80 to 90 percent of tactical work against approximately 30 organizations.

What is GTG-1002 and why does it matter?

GTG-1002 is Anthropic's designation for the first publicly documented AI-orchestrated cyber espionage campaign at scale, attributed to a Chinese state-sponsored group and disclosed November 13 2025. The group instrumented Claude Code in an MCP-connected agentic framework to autonomously execute roughly 80 to 90 percent of intrusion tradecraft against approximately 30 organizations across tech, finance, government, and chemical manufacturing, at thousands of requests per second with humans only at four to six critical decision points per campaign. It matters because it is the first time end-to-end agentic intrusion tradecraft has been publicly documented in a real adversary, not a benchmark study.

How much do AI-generated phishing emails outperform human-written ones?

CrowdStrike's 2025 Global Threat Report measured AI-generated phishing emails landing a 54% click-through rate, against 12% for human-written phishing, a 4.5x uplift. Microsoft's Digital Defense Report 2025 corroborates the click-rate finding. KnowBe4's analysis of phishing emails between September 2024 and February 2025 found 82.6% used some form of AI-generated content, alongside a 17.3% rise in phishing volume vs the prior six months.

What share of breaches involve attacker AI in 2025?

Per IBM's 2025 Cost of a Data Breach Report, 1 in 6 (16%) of breaches in IBM's research dataset involved attackers using AI. Within that 16%, AI-generated phishing accounted for 37% of cases and deepfake impersonation for 35%. The U.S. average breach cost rose to a record US$10.22M, and 13% of organizations reported breaches of AI models or applications themselves.

Which industries are hit hardest by AI cyber attacks?

The clearest industry signal in the 2025 reports is that high-value-IP, regulated-data, and complex-vendor-ecosystem sectors are primary targets. Anthropic's GTG-1002 disclosure named technology firms, financial institutions, government entities, and chemical manufacturers among approximately 30 targets. Anthropic's vibe-hacking extortion case named 17 victim organizations across healthcare, emergency services, government, and religious institutions. Pindrop's 2025 report found insurance attacks rose +475% and banking attacks +149% in 2024. The DPRK IT worker scheme has hit tech-heavy hiring pipelines hardest, with 320+ companies infiltrated in the past 12 months per CrowdStrike data.

What is prompt injection and how prevalent is it?

Prompt injection is a class of attack where attacker-controlled text manipulates a large language model into ignoring its system prompt or executing unintended actions. OWASP's Top 10 for LLM Applications v2025 ranks prompt injection LLM01, the top vulnerability category for LLM-backed applications. HiddenLayer's 2025 AI Threat Landscape Report found 33% of reported breaches in 2024 involved attacks on internal or external chatbots, with prompt injection a primary technique. CrowdStrike's 2026 Global Threat Report documented adversaries exploiting legitimate GenAI tools at 90+ organizations in 2025 by injecting prompts to generate credential-stealing or crypto-stealing commands. Lakera's Gandalf platform has logged 40+ million prompt-injection attempts from 1+ million players since May 2023.

What does Anthropic's August 2025 report disclose?

Anthropic's August 2025 Threat Intelligence Report disclosed three named operations: a "vibe hacking" extortion case against at least 17 organizations with ransoms over US$500K, a UK-based GTG-5004 actor selling AI-built ransomware for US$400 to US$1,200, and a 9-month Chinese campaign vs Vietnamese critical infrastructure. The report also covered AI-augmented DPRK fraudulent employment.

What does the FBI say about AI-enabled fraud?

The FBI's December 2024 PSA warns that voice cloning from a few seconds of public audio is now trivial. The 2024 Internet Crime Report recorded US$16.6B in total cybercrime losses (+33% YoY), including US$2.77B in BEC losses across 21,442 incidents. The FBI's May 2025 PSA flagged AI voice and SMS impersonation of senior US officials. The DOJ's June 30 2025 disruption of North Korean IT workers searched 29 laptop farms across 16 states and seized 29 financial accounts.

How much fraud has Microsoft blocked using AI?

Per Microsoft Cyber Signals Issue 9 (April 2025), Microsoft thwarted approximately US$4 billion in AI-powered fraud attempts between April 2024 and April 2025. In the same window Microsoft blocked roughly 1.6 million bot signup attempts per hour and rejected 49,000 fraudulent partnership enrollments. The figure represents aggregated fraud and scam attempts against Microsoft and its consumer + enterprise customers across a 12-month period.

What is the difference between attacker AI and defender AI in 2026?

Attacker AI refers to threat-actor use of AI for any phase of intrusion: phishing, deepfake impersonation, exploit code generation, reconnaissance acceleration, prompt-injection of victim AI features, and end-to-end agentic orchestration of intrusions (as in GTG-1002). Defender AI refers to defensive deployment: AI-driven SOC triage, AI-augmented detection engineering, AI fraud-prevention controls (Microsoft's US$4B blocked is a defender-AI metric), and AI-augmented threat hunting. The WEF Global Cybersecurity Outlook 2026 found 94% of leaders agree AI is the single most significant driver of cybersecurity change in 2026, but 87% also flag AI vulnerabilities as the fastest-growing cyber risk: defender adoption is outpacing assurance, which is what creates exposure.

Where can I get the latest AI cyber attack data?

Track Anthropic Threat Intelligence (intermittent), OpenAI's quarterly disruption reports, the Microsoft Digital Defense Report (annual, October), IBM Cost of a Data Breach Report (annual, July) and X-Force Threat Intelligence Index (annual, February), CrowdStrike GTR (annual, February) and Threat Hunting Report (annual, August), Mandiant M-Trends (annual, March), Verizon DBIR (annual, May), ENISA Threat Landscape (annual, October), WEF Global Cybersecurity Outlook (annual, January), and HiddenLayer AI Threat Landscape Report (annual, March). UK AISI and MITRE ATLAS publish ongoing research. The References section below numbers all 26 sources used here.

References

Anthropic. Disrupting the first reported AI-orchestrated cyber espionage campaign. Nov 13 2025. https://www.anthropic.com/news/disrupting-AI-espionage. GTG-1002 disclosure.

Anthropic. Detecting and countering misuse of AI: August 2025. Aug 27 2025. https://www.anthropic.com/news/detecting-countering-misuse-aug-2025. Vibe hacking, GTG-5004 ransomware, Vietnamese CI campaign, DPRK fraudulent employment.

OpenAI. Disrupting malicious uses of AI: October 2025. Oct 2025. https://openai.com/global-affairs/disrupting-malicious-uses-of-ai-october-2025/. Cumulative 40+ networks disrupted since Feb 2024.

OpenAI. Disrupting malicious uses of AI: June 2025. Jun 2025. https://openai.com/global-affairs/disrupting-malicious-uses-of-ai-june-2025/. 10 case studies including Operation ScopeCreep.

OpenAI. Disrupting malicious uses of our models: February 2025 update. Feb 2025. https://cdn.openai.com/threat-intelligence-reports/disrupting-malicious-uses-of-our-models-february-2025-update.pdf.

Microsoft. Digital Defense Report 2025. Oct 2025. https://www.microsoft.com/en-us/security/security-insider/threat-landscape/microsoft-digital-defense-report-2025. AI threat landscape, nation-state activity, MTAC tracking.

Microsoft Security. Cyber Signals Issue 9: AI-powered deception. Apr 16 2025. https://www.microsoft.com/en-us/security/blog/2025/04/16/cyber-signals-issue-9-ai-powered-deception-emerging-fraud-threats-and-countermeasures/. US$4B fraud blocked Apr 2024 to Apr 2025.

Microsoft Security. Inside Tycoon2FA. Mar 4 2026. https://www.microsoft.com/en-us/security/blog/2026/03/04/inside-tycoon2fa-how-a-leading-aitm-phishing-kit-operated-at-scale/. Storm-1747; 62% of MS-blocked phishing by mid-2025; 330 domains seized.

Microsoft Security. Jasper Sleet: DPRK remote IT workers. Jun 30 2025. https://www.microsoft.com/en-us/security/blog/2025/06/30/jasper-sleet-north-korean-remote-it-workers-evolving-tactics-to-infiltrate-organizations/.

CrowdStrike. 2025 Global Threat Report. Feb 2025. https://www.crowdstrike.com/en-us/press-releases/crowdstrike-releases-2025-global-threat-report/. Vishing +442% H1 to H2 2024; AI phishing 54% click rate; FAMOUS CHOLLIMA 320+ companies.

CrowdStrike. 2026 Global Threat Report: Year of the Evasive Adversary. Feb 2026. https://www.crowdstrike.com/en-us/blog/crowdstrike-2026-global-threat-report-findings/. 89% YoY rise in AI-enabled attacks; 82% malware-free; 29-min eCrime breakout.

CrowdStrike. 2025 Threat Hunting Report. Aug 2025. https://ir.crowdstrike.com/news-releases/news-release-details/2025-crowdstrike-threat-hunting-report-adversaries-weaponize-and/.

Mandiant / Google Cloud. M-Trends 2025. Apr 2025. https://cloud.google.com/blog/topics/threat-intelligence/m-trends-2025. 11-day median dwell time; exploits 33% of initial vectors.

Mandiant / Google Cloud. M-Trends 2026. Mar 2026. https://cloud.google.com/blog/topics/threat-intelligence/m-trends-2026. 22-second initial-access handoff time; PROMPTFLUX, PROMPTSTEAL, QUIETVAULT.

IBM. Cost of a Data Breach Report 2025. Jul 30 2025. https://newsroom.ibm.com/2025-07-30-IBM-Report-Breaches-Cost-U-S-Businesses-10-22M-on-Average-as-AI-Defenses-and-Attacks-Take-Off. 1 in 6 breaches use AI; shadow AI 20%; US$10.22M US average.

IBM. X-Force Threat Intelligence Index 2025. Feb 2025. https://www.ibm.com/think/x-force/x-force-threat-intelligence-index-2025-attackers-steal-sell-user-identities. 84% rise in infostealers via phishing.

IBM. X-Force Threat Intelligence Index 2026. Feb 2026. https://www.ibm.com/think/x-force/threat-intelligence-index-2026-securing-identities-ai-detection-risk-management.

Verizon. 2025 Data Breach Investigations Report. May 2025. https://www.verizon.com/business/resources/reports/2025-dbir-executive-summary.pdf.

ENISA. Threat Landscape 2025. Oct 2025. https://www.enisa.europa.eu/publications/enisa-threat-landscape-2025. 4,875 incidents Jul 2024 to Jun 2025; AI-supported phishing >80% of social engineering.

World Economic Forum. Global Cybersecurity Outlook 2026. Jan 2026. https://reports.weforum.org/docs/WEF_Global_Cybersecurity_Outlook_2026.pdf. 94% AI biggest driver; 87% flag AI vulns; pre-deployment assessment 64%.

HiddenLayer. 2025 AI Threat Landscape Report. Mar 2025. https://hiddenlayer.com/innovation-hub/hiddenlayer-ai-threat-landscape-report-reveals-ai-breaches-on-the-rise/. 33% of breaches involve chatbot attacks; only 49% scan public models.

HiddenLayer. 2026 AI Threat Landscape Report. Mar 2026. https://www.hiddenlayer.com/news/hiddenlayer-releases-the-2026-ai-threat-landscape-report-spotlighting-the-rise-of-agentic-ai-and-the-expanding-attack-surface-of-autonomous-systems.

Lakera. Gandalf adversarial-prompt platform. Since May 2023. https://gandalf.lakera.ai/ + arXiv "Gandalf the Red" Jan 2025 https://arxiv.org/html/2501.07927v1. 40M+ prompts from 1M+ players.

UK AI Security Institute. Advanced AI evaluations: May update. May 2025. https://www.aisi.gov.uk/blog/advanced-ai-evaluations-may-update. Cyber-task length doubling every 8 months.

MITRE ATLAS. Adversarial Threat Landscape for AI Systems. Jan 2026 update. https://atlas.mitre.org/. 14 new techniques in 2025; 3 case studies Jan 2026 on MCP compromises and prompt injection.

OWASP Gen AI Security Project. Top 10 for Large Language Model Applications v2025. Nov 2024. https://genai.owasp.org/llm-top-10/. LLM01 Prompt Injection top vulnerability.

Take action with Stingrai

If you are scoping AI-aware penetration testing or red-team engagements for production AI features, prompt-injection assessments against your LLM-backed applications, or DPRK-IT-worker hiring-pipeline hardening, book a discovery call with Stingrai. We are an offensive-security firm with PTaaS and red-team services that map directly onto the OWASP LLM Top 10, MITRE ATLAS, and adversarial-prompt scenarios documented in this post.