The single most informative document about Tor's anonymity model is not a peer-reviewed paper or a Tor Project announcement. It is a 2013 NSA training slide deck, leaked by Edward Snowden and published by The Guardian, titled "Tor Stinks." The deck concedes, in agency vernacular, that the United States' most resourced signals intelligence apparatus could not deanonymize Tor users at scale. It then describes the workarounds: a Firefox type-confusion exploit codenamed EgotisticalGiraffe, delivered through a man-on-the-side packet-injection program called QUANTUM and a payload-server complex called FoxAcid. The exploit broke Tor users by breaking their browsers, not the Tor protocol. The slide deck is a clearer description of how Tor anonymity actually fails than most academic surveys.

The Tor protocol is real. The anonymity property it provides is also real. But that property is narrow, conditional, and adversary-relative, and a confident answer to "how anonymous is the dark web?" requires admitting all three of those qualifiers. Stingrai's research team has published 18 CVEs and routinely advises journalists, founders, and activists on the practical limits of network anonymity. This post is the user-side companion to our How Law Enforcement Tracks Dark Web Criminals explainer; where that post covers the operator side, this one covers the threat model from a user's perspective.

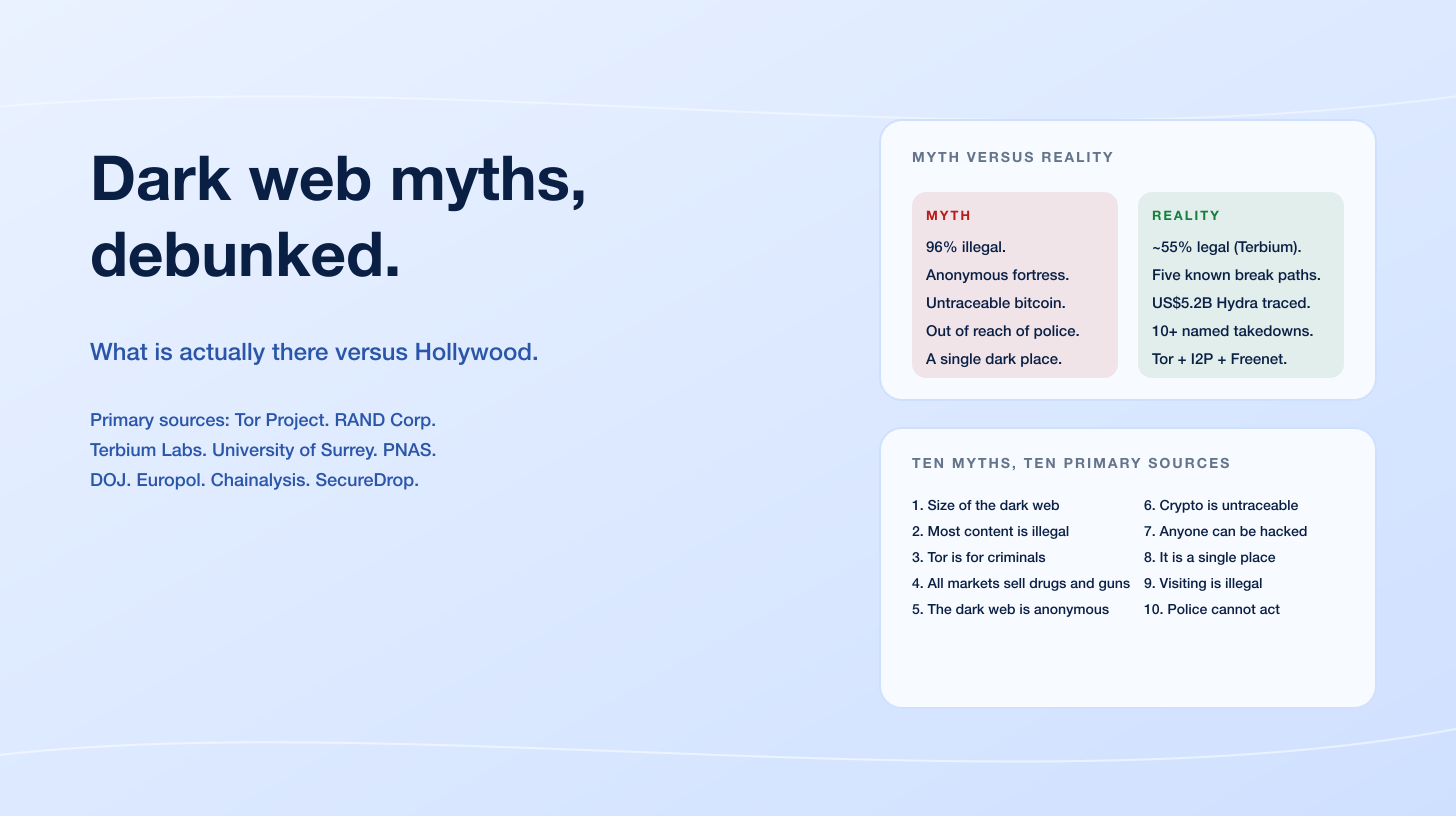

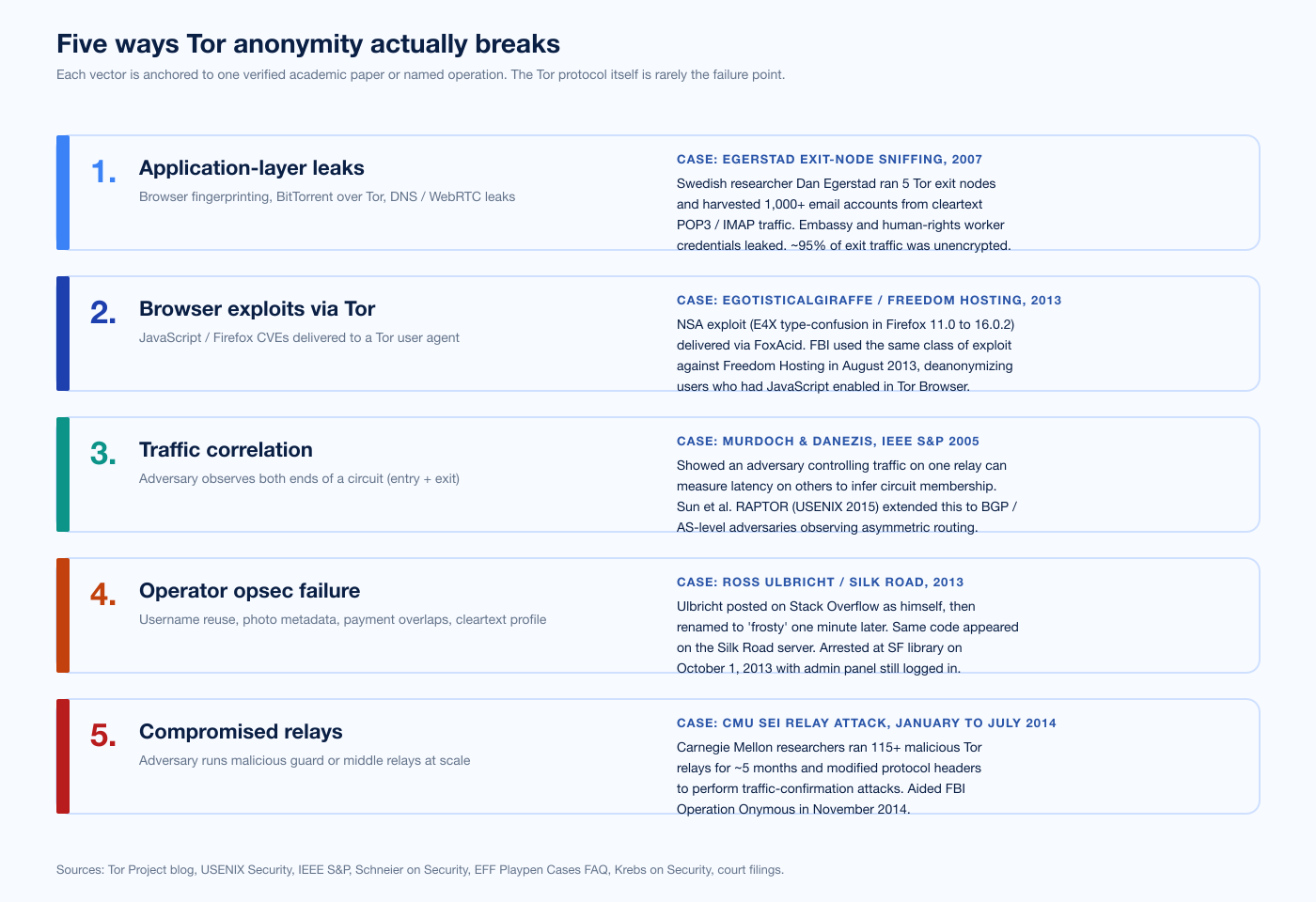

TL;DR: five ways Tor anonymity actually breaks

Application-layer leaks. Anything outside Tor Browser routes around the protocol. Dan Egerstad operated 5 Tor exit nodes in 2007 and harvested more than 1,000 cleartext POP3 / IMAP accounts, including embassy and human-rights worker credentials. BitTorrent over Tor leaks the real IP through tracker announces (INRIA, arXiv 1004.1461). DNS leaks happen if Tor is bolted onto a regular browser instead of using Tor Browser.

Browser exploits delivered through Tor. Tor Browser is a hardened Firefox build, and any Firefox CVE that survives the hardening is also a Tor Browser CVE. The NSA's EgotisticalGiraffe (Firefox E4X type confusion in versions 11.0 to 16.0.2) is the documented prototype, and the FBI used the same technique class against Freedom Hosting in August 2013. The FBI's Operation Pacifier deployed a Network Investigative Technique against Playpen for 13 days in 2015 and recovered real IP addresses for more than 1,300 visitors.

Traffic correlation. An adversary that can observe both ends of a circuit can correlate timing and packet sizes to infer the link. Murdoch and Danezis (IEEE S&P 2005) showed this with a partial-network adversary; Sun et al. (USENIX Security 2015) extended it to BGP-level routing manipulation; Kwon et al. (USENIX Security 2015) demonstrated 88% deanonymization of monitored hidden services through circuit fingerprinting.

Operator OPSEC failure. Most named operator arrests trace back to a username or email reuse predating the dark web service. Ross Ulbricht renamed his Stack Overflow account from his real name to "frosty" one minute after posting; the same Tor-via-PHP code appeared on the Silk Road server.

Compromised relays. Carnegie Mellon University's Software Engineering Institute operated more than 115 malicious Tor relays from January 30 through July 4, 2014, modifying relay-early protocol headers to perform traffic-confirmation attacks. The Tor Project published a security advisory. The data informed Operation Onymous in November 2014.

Figure 1: Five vectors that carry every documented Tor anonymity failure on record. The Tor protocol is rarely the weak link. The application layer above it, the browser inside it, an adversary outside it, the operator who runs a service on it, or the relays that carry it: that is where anonymity breaks. Sources: USENIX Security, IEEE S&P, ACM CCS, Tor Project security advisories, Schneier on Security, Krebs on Security, FBI court filings.

Key takeaways

Tor's anonymity is real, and it is narrow. The protocol does what its design paper promises: it hides the user's IP from the destination and the destination from the user's ISP, and it gives the user three layers of encryption so that no single relay sees both ends. That is a meaningful guarantee against an ordinary network observer. It is not a guarantee against a global passive adversary, against a powerful AS-level adversary that can observe both ends through routing manipulation, or against an attacker who delivers a browser exploit to the user.

Most documented anonymity failures are above the protocol. A user who runs an unmodified Tor Browser on a fully patched system, who never logs in to a real-name account, who uses HTTPS or onion services for end-to-end encryption, and who raises the security level to Safest (JavaScript disabled) for sensitive sessions is genuinely hard to deanonymize. A user who runs Tor outside Tor Browser, who logs in to anything tied to a real identity, who lets BitTorrent route over Tor, or who runs an outdated browser is not.

Operator anonymity is harder than user anonymity. Running a hidden service that interacts with thousands of users for years requires perfect operational discipline across the server configuration, payment infrastructure, forum cross-posting, and writing style. Real operators rarely satisfy all four. That asymmetry is the real reason most named takedowns of dark web markets are operator-side, not user-side.

Tails OS and Whonix VMs raise the floor. Tor Browser is the floor of Tor anonymity. Tails (an amnesic live-USB OS that routes everything through Tor and forgets the session at shutdown) and Whonix (a two-VM gateway and workstation isolation that enforces Tor at the network layer) raise the floor by blocking application-layer leaks at the operating-system level.

Anonymity is probabilistic, not absolute. A user is anonymous against a particular adversary with a particular budget for a particular session. The same user can be deanonymized by a different adversary with a different budget. Building a working mental model means thinking in adversaries, not in absolute statements.

What "the dark web" actually is

In ordinary usage the "dark web" almost always means Tor onion services (formerly called hidden services). I2P and Freenet exist; this post focuses on Tor because Tor accounts for the overwhelming majority of dark-web activity and because the academic and forensic literature on Tor is the deepest. The Tor Project metrics dashboard shows roughly 2.5M daily active relay users worldwide in 2025, varying from about 2.88M to 6.75M depending on censorship-related events. A PNAS paper by Jardine, Lindner, and Owenson measured that approximately 6.7% of daily Tor client engagement is with onion services, and approximately 85% is plain web browsing through Tor. The dark web is not the bulk of Tor traffic; it is a slice.

The Tor network in 2025 runs on roughly 8,000 active relays. Of those, about 2,500 are exit relays, about 5,300 are guard-eligible, and approximately 2,000 bridges provide entry to users in censored regions through pluggable transports like obfs4, Snowflake, Conjure, and WebTunnel. Tor's v2 onion services were retired on July 15, 2021; modern v3 addresses are 56 characters long, use SHA3 / ed25519 / curve25519 cryptography, and are widely considered cryptographically intact in 2026.
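The 56-character length of a v3 address follows from its layout: a 32-byte ed25519 public key, a 2-byte SHA3-256 checksum, and a 1-byte version, base32-encoded. The sketch below follows that layout as described in the Tor rendezvous specification. The function names are illustrative, and `placeholder_key` is an arbitrary 32-byte stand-in rather than a real ed25519 key, so the code checks only the encoding and checksum, not whether the key is a valid curve point.

```python
import base64
import hashlib

VERSION = b"\x03"  # version byte for v3 onion services


def _checksum(pubkey: bytes) -> bytes:
    # First two bytes of SHA3-256(".onion checksum" || pubkey || version).
    return hashlib.sha3_256(b".onion checksum" + pubkey + VERSION).digest()[:2]


def onion_address(pubkey: bytes) -> str:
    """Encode a 32-byte public key as a v3 onion label (without '.onion')."""
    if len(pubkey) != 32:
        raise ValueError("expected a 32-byte ed25519 public key")
    raw = pubkey + _checksum(pubkey) + VERSION  # 35 bytes -> 56 base32 chars
    return base64.b32encode(raw).decode("ascii").lower()


def is_valid_onion_address(label: str) -> bool:
    """Check length, version byte, and checksum of a v3 onion label."""
    if len(label) != 56:
        return False
    try:
        raw = base64.b32decode(label.upper())
    except ValueError:  # binascii.Error is a subclass of ValueError
        return False
    pubkey, checksum, version = raw[:32], raw[32:34], raw[34:]
    return version == VERSION and checksum == _checksum(pubkey)


# Assumption: arbitrary bytes standing in for a real service key.
placeholder_key = bytes(range(32))
address = onion_address(placeholder_key)
print(address + ".onion", is_valid_onion_address(address))
```

Because the final byte is always the version, every v3 label ends in the same base32 character, which makes a quick sanity check before the checksum is verified.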

The political geography matters. The United States accounts for roughly 18.12% of directly-connecting Tor browser users (Nov 2024 to Feb 2025 series), with Germany second and Finland third by share. Russia leads in mean daily Tor users among censored regions, often above 10,000. The Tor Project's 2025 anti-censorship blog documents heavy use of Snowflake and WebTunnel by users in Iran and Russia, two adversaries that have invested significantly in Tor blocking.

How Tor anonymity actually works (the part that holds)

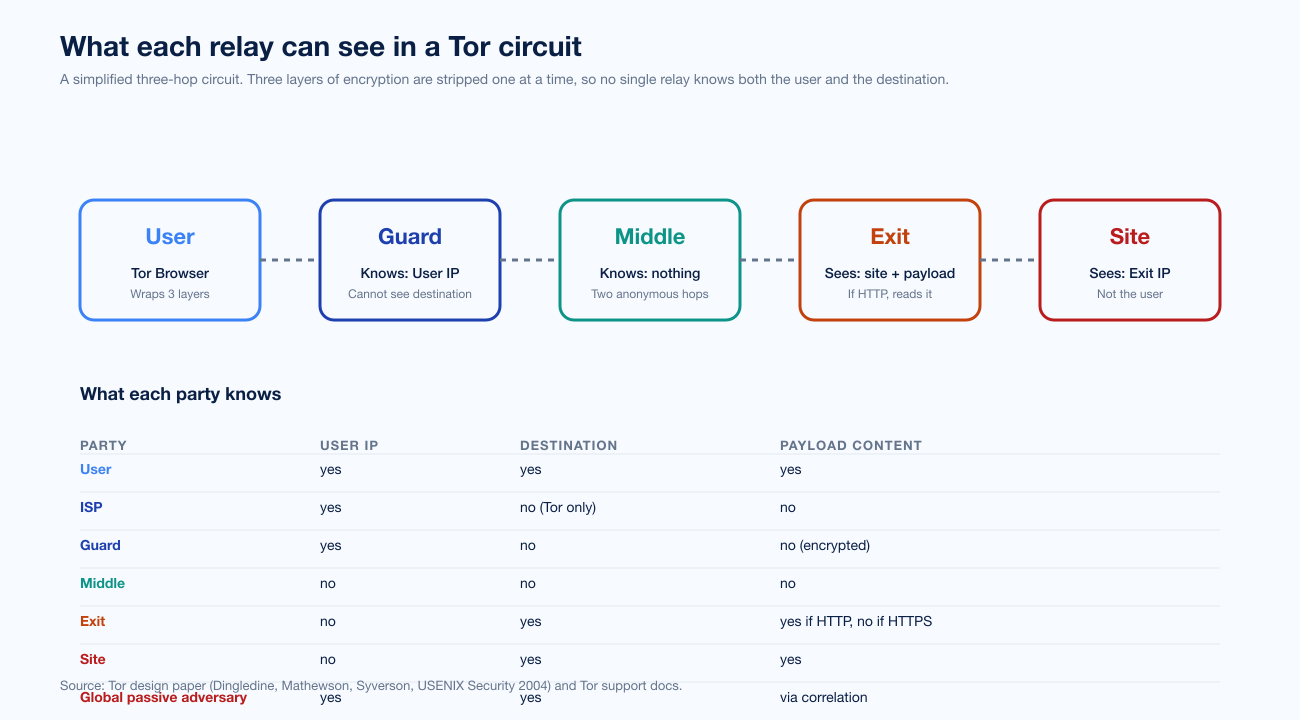

Tor wraps a user's traffic in three layers of encryption and routes it through three relays. The first relay is the guard: it sees the user's real IP address and strips the outermost layer, but it cannot read the payload underneath and does not know the destination. The second relay is the middle: it sees neither the user's IP nor the destination, and it strips one more layer. The third relay is the exit: it sees the destination, removes the innermost Tor encryption layer, and forwards the request to the destination using whatever protocol the user chose (HTTP, HTTPS, SMTP, etc.). For onion services, there is no exit; the client and the service each build a circuit to a rendezvous point inside the Tor network, traffic never leaves Tor, and the hidden service's network location is never revealed to the client.
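The layering can be illustrated with a toy model. The sketch below is not Tor's cryptography: it XORs the payload with a SHAKE-256 keystream per hop, and the three keys are hard-coded placeholders, whereas a real client negotiates a separate session key with each relay. It only shows the shape of the idea: the client wraps once per hop, and each relay can remove exactly one layer.

```python
import hashlib
from typing import Sequence


def keystream_xor(key: bytes, data: bytes) -> bytes:
    # Toy stream cipher: XOR with a SHAKE-256 keystream derived from the key.
    # Applying it twice with the same key restores the input.
    stream = hashlib.shake_256(key).digest(len(data))
    return bytes(a ^ b for a, b in zip(data, stream))


def wrap(payload: bytes, hop_keys: Sequence[bytes]) -> bytes:
    # The client applies the exit layer first and the guard layer last,
    # so the guard's layer ends up outermost.
    cell = payload
    for key in reversed(hop_keys):
        cell = keystream_xor(key, cell)
    return cell


def peel(cell: bytes, key: bytes) -> bytes:
    # A relay removes only the layer that matches its own session key.
    return keystream_xor(key, cell)


# Assumption: placeholder keys in path order (guard, middle, exit).
keys = [b"guard-session-key", b"middle-session-key", b"exit-session-key"]
request = b"GET / HTTP/1.1"

cell = wrap(request, keys)
after_guard = peel(cell, keys[0])           # still two layers deep
after_middle = peel(after_guard, keys[1])   # still one layer deep
after_exit = peel(after_middle, keys[2])    # what the exit forwards

print(after_exit)
```

Only `after_exit` equals the original request, which is why the exit (and nobody earlier in the path) sees whatever the user sent, in cleartext unless the user added HTTPS or a similar end-to-end layer.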

The Tor design paper (Dingledine, Mathewson, Syverson, USENIX Security 2004) lays out the threat model. Tor protects against a single-network observer: an ISP that watches your connection, an attacker on your local Wi-Fi, an AS that sees one side of your traffic. Tor explicitly does not protect against a global passive adversary, defined as an adversary that can observe both ends of every Tor circuit simultaneously. Tor also does not protect against attacks above the protocol: it does not stop a Firefox CVE from running, it does not stop you from logging in to a real-name account, and it does not stop your operating system from leaking DNS resolution.

Figure 2: A simplified three-hop Tor circuit. The visibility table shows what each party in the path observes. The guard relay knows the user IP but not the destination; the exit knows the destination but not the user; the middle knows neither. A global passive adversary, by definition, observes both ends and can correlate timing and packet sizes to infer the link. Sources: Tor design paper (Dingledine et al., USENIX Security 2004), Tor Project support documentation.

The three-hop default is a deliberate design tradeoff. One hop would be a trivial proxy with no anonymity property. Two hops would let an adversary that controlled both ends correlate trivially. Three hops give a structural argument that no single relay sees both ends, so an attacker who does not control both the guard and the exit cannot link traffic from inside the network. Guard persistence matters: a Tor client picks one or a small number of guards and reuses them for two to three months in the current protocol (up from 30 to 60 days in older versions, following research such as Dingledine et al.'s One Fast Guard for Life, which suggested rotation periods as long as 9 months). Reusing a guard reduces the chance that, over many sessions, sheer bad luck hands the client a malicious guard.
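The benefit of keeping a guard can be made concrete with a back-of-the-envelope calculation. This is a simplified model under stated assumptions: the adversary holds a fixed fraction `f` of guard selection probability, each guard pick is independent, and the client uses a single guard. The value of `f` is hypothetical.

```python
def p_malicious_guard(f: float, picks: int) -> float:
    """Probability that at least one of `picks` independent guard
    selections lands on an adversary-controlled guard."""
    return 1.0 - (1.0 - f) ** picks


f = 0.01  # assumption: adversary controls 1% of guard selection weight

# Roughly four guard picks per year if a guard is kept about three months.
one_year_long_lived = p_malicious_guard(f, 4)

# One fresh guard per day, for comparison.
one_year_daily = p_malicious_guard(f, 365)

print(f"long-lived guards: {one_year_long_lived:.3f}")
print(f"daily rotation:    {one_year_daily:.3f}")
```

Under these assumptions the long-lived strategy exposes the client to a malicious guard with probability of about 4% over a year, while daily rotation pushes it above 97%. The model ignores that an unlucky long-lived choice stays bad for longer, which is the other side of the tradeoff.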

What Tor does not protect against

Tor's design paper is unusually honest about what the protocol does not do. The Tor Project's "Am I totally anonymous if I use Tor?" FAQ is even more explicit. The five sections below organize that admission into the user-side threat-model categories every reader needs to internalize.

1. Application-layer leaks

Tor is a network protocol. It does not know what your browser, your operating system, your BitTorrent client, or your Skype connection is doing on top of it. Anything that bypasses the Tor circuit (a DNS query that resolves locally, a BitTorrent tracker announce that contains your real IP, a WebRTC connection that opens a STUN exchange against a public server) is an anonymity break that the Tor protocol cannot prevent.

The cleanest case study is Dan Egerstad's 2007 demonstration. A Swedish security researcher, Egerstad operated five Tor exit nodes for several months and monitored the cleartext traffic passing through them. He harvested credentials for more than 1,000 email accounts (POP3 and IMAP, unencrypted at the time) including embassy workers, human rights organizations, and Fortune 500 employees. The technical lesson is that Tor wraps the path, not the payload. The lesson today is mitigated by HTTPS adoption (Cloudflare's Radar shows roughly 95%+ of measured web traffic is HTTPS in 2025) but not eliminated. The exit still sees the destination domain even when the payload is encrypted, and it still sees the destination IP and port for non-web protocols (SMTP, IMAP, MySQL, SSH), along with the full payload wherever those protocols run unencrypted.

A second case study is the 2010 INRIA paper Compromising Tor Anonymity Exploiting P2P Information Leakage (Manils et al.), which showed that BitTorrent over Tor leaks the user's real IP through application-layer announcements made by the BitTorrent client to trackers. The same logic applies to any P2P or VoIP protocol that embeds endpoint information in the payload.

A third case study is DNS. Tor Browser bundles its own DNS resolution through the Tor exit. A user who configures a regular browser to use a SOCKS5 proxy pointed at Tor without also tunneling DNS through that proxy will leak the destination domain to the local resolver, which is typically the ISP. This is why the Tor Project ships an entire browser bundle rather than asking users to configure a regular browser as a Tor client.
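The difference between the leaking and non-leaking configurations is visible in the SOCKS5 request itself. The sketch below builds the two CONNECT request forms defined in RFC 1928: one carries the hostname (address type 0x03), so the proxy, here Tor, performs the resolution; the other carries an IPv4 address (address type 0x01), which means the application already resolved the name somewhere else, typically through the local resolver. The function names are illustrative, and the code only constructs bytes without opening a connection.

```python
import ipaddress
import struct

SOCKS_VERSION = 0x05
CMD_CONNECT = 0x01
RESERVED = 0x00
ATYP_IPV4 = 0x01
ATYP_DOMAIN = 0x03


def connect_request_by_name(hostname: str, port: int) -> bytes:
    """SOCKS5 CONNECT with the hostname inside the request.
    The proxy resolves the name, so no local DNS query is needed."""
    name = hostname.encode("idna")
    if not 1 <= len(name) <= 255:
        raise ValueError("hostname must be 1..255 bytes")
    header = bytes([SOCKS_VERSION, CMD_CONNECT, RESERVED, ATYP_DOMAIN, len(name)])
    return header + name + struct.pack("!H", port)


def connect_request_by_ip(ipv4: str, port: int) -> bytes:
    """SOCKS5 CONNECT with a pre-resolved IPv4 address.
    Whoever resolved the name before this call saw the destination."""
    packed = ipaddress.IPv4Address(ipv4).packed
    header = bytes([SOCKS_VERSION, CMD_CONNECT, RESERVED, ATYP_IPV4])
    return header + packed + struct.pack("!H", port)


safe = connect_request_by_name("example.com", 443)
leaky = connect_request_by_ip("192.0.2.10", 443)  # documentation-range address
print(safe.hex(), leaky.hex())
```

Many clients expose this choice as a flag or URL scheme, for example curl's `--socks5-hostname` versus `--socks5`, or a `socks5h://` versus `socks5://` proxy URL; the hostname-carrying variant is the one that keeps DNS inside the tunnel.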

2. Browser exploits delivered through Tor

Tor Browser is built on Firefox ESR with hardening. Hardening reduces but does not eliminate Firefox's attack surface. Any Firefox CVE that survives the hardening is also a Tor Browser CVE, and any browser exploit that runs to completion can read the user's real IP from the operating-system network stack and exfiltrate it through any non-Tor channel.

The canonical example is the NSA's EgotisticalGiraffe. In October 2013, The Guardian published a leaked NSA training deck describing a Firefox E4X (an XML extension to JavaScript) type-confusion vulnerability that worked against Firefox 11.0 through 16.0.2 and the Firefox 10.0 ESR base used in Tor Browser at the time. The exploit was delivered through QUANTUM (a man-on-the-side packet-injection program that relied on XKeyscore to identify Tor users) and FoxAcid (a payload-server complex that hosted the exploit and modular post-exploitation code). The vulnerability had been silently fixed in Firefox 17 (November 2012); the leaked deck noted that, as of January 2013, the NSA had not yet adapted the exploit to the patched versions. Gaps of that kind tend to last months, whichever side is ahead.

The FBI used the same technique class against Freedom Hosting in August 2013. Eric Eoin Marques, the operator, was arrested in Ireland on August 1, 2013 on a US extradition warrant. Within days, Freedom Hosting services began serving an embedded JavaScript exploit that read the Windows hostname and MAC address of visitors and reported them back to US law enforcement infrastructure. The FBI confirmed responsibility in a September 2013 Dublin court filing. Marques was sentenced to 27 years on September 6, 2021.

The FBI's Operation Pacifier is the most legally consequential example. After seizing the Playpen child-exploitation server in February 2015, the FBI hosted Playpen for 13 days from offices in Newington, VA. During those 13 days, every visitor received a Network Investigative Technique payload that exploited a Firefox vulnerability to copy the user's real IP and MAC information back to FBI infrastructure in Alexandria. Playpen had 215,000 registered users at takedown; the NIT identified more than 1,300 of them, with more than 350 charged in the United States and arrests reported in 120 countries. The Operation Pacifier litigation (multiple federal courts, Rule 41 challenges, the FBI's refusal to disclose the NIT source code) produced the most extensive judicial record of NIT-based investigations in US case law, and the 2016 Rule 41 amendments partially addressed the geographic-warrant gap.

The lesson for Tor users is simple. Tor Browser is a hardened browser, but a hardened browser is still a browser. Setting Tor Browser to Safest mode, which disables JavaScript, meaningfully reduces the attack surface; running an unpatched build throws that advantage away.

3. Traffic correlation

A global passive adversary that can observe both ends of every Tor circuit can correlate timing and packet sizes to infer which user is talking to which destination. The Tor design paper concedes this. The academic literature has progressively chipped away at the bet that no real adversary has that capability.
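The core of a correlation attack fits in a few lines. The sketch below uses synthetic data, not real Tor traffic: it bins packet counts into time slots at the entry side, generates one exit-side series that is the same flow delayed by one slot with small jitter, and one unrelated exit-side series. The seed, bin count, and traffic distribution are arbitrary assumptions chosen for illustration.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def best_lag_correlation(entry, exit_, max_lag=3):
    """Highest correlation over a few candidate entry-to-exit delays."""
    scores = []
    for lag in range(max_lag + 1):
        scores.append(pearson(entry[: len(entry) - lag], exit_[lag:]))
    return max(scores)


rng = random.Random(7)  # fixed seed so the synthetic data is reproducible
BINS = 200

# Packets per time slot seen entering the network from the target user.
entry = [rng.randint(0, 20) for _ in range(BINS)]

# The same flow at the exit: one slot later, with small per-slot jitter.
exit_same = [0] + [max(0, c + rng.randint(-1, 1)) for c in entry[:-1]]

# An unrelated flow leaving the network at the same time.
exit_other = [rng.randint(0, 20) for _ in range(BINS)]

matched = best_lag_correlation(entry, exit_same)
unrelated = best_lag_correlation(entry, exit_other)
print(f"matched={matched:.2f} unrelated={unrelated:.2f}")
```

Real attacks contend with cover traffic, congestion, and thousands of concurrent flows, so the separation is far less clean than in this toy, but the principle is the same: encryption hides content, not the timing and volume pattern.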

The seminal paper is Murdoch and Danezis, "Low-Cost Traffic Analysis of Tor" (IEEE S&P 2005). The authors showed that an adversary controlling traffic on one Tor relay could measure latency on others to infer which relays were on a target circuit, with modest operational capability and partial network access. Two decades later, this remains the foundational reference for low-cost traffic analysis on Tor.

Sun et al., "RAPTOR: Routing Attacks on Privacy in Tor" (Princeton, USENIX Security 2015) is the modern extension. AS-level adversaries can exploit asymmetric routing to observe at least one direction of user traffic at both ends of a Tor circuit; BGP churn naturally widens observation over time; strategic adversaries can deliberately hijack BGP paths to deanonymize users of specific guards. The attacks were demonstrated against a live Tor network.

Kwon et al., "Circuit Fingerprinting Attacks: Passive Deanonymization of Tor Hidden Services" (USENIX Security 2015) targeted hidden-service circuits specifically. Circuit-establishment patterns of hidden-service connections are visibly different from regular browsing, and a single malicious guard relay can identify hidden-service clients and operators with greater than 98% true positive rate. In an open-world setting (50 monitored hidden services), the approach achieved 88% identification with a 7.8% false positive rate.
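How worrying an 88% true positive rate with a 7.8% false positive rate is depends on the base rate: what fraction of the circuits the adversary sees actually belong to a monitored service. The sketch below applies Bayes' rule to the two rates quoted above. The base rates are hypothetical values for illustration and do not come from the paper.

```python
def precision(tpr: float, fpr: float, base_rate: float) -> float:
    """Probability that a flagged circuit really is a monitored one,
    given the classifier's rates and the prior share of monitored circuits."""
    true_positives = tpr * base_rate
    false_positives = fpr * (1.0 - base_rate)
    return true_positives / (true_positives + false_positives)


TPR, FPR = 0.88, 0.078  # rates quoted in the text above

# Assumption: hypothetical shares of observed circuits that are monitored.
rare = precision(TPR, FPR, base_rate=0.01)
common = precision(TPR, FPR, base_rate=0.50)

print(f"1% base rate:  {rare:.3f}")
print(f"50% base rate: {common:.3f}")
```

At a 1% base rate only about one flag in ten is correct, while at a 50% base rate more than nine in ten are. An adversary with a narrow suspect pool benefits far more from this class of attack than one screening the whole network.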

Johnson et al., "Users Get Routed: Traffic Correlation on Tor by Realistic Adversaries" (ACM CCS 2013) modeled realistic relay-level and AS-level adversaries and showed that, for users in some common locations, a single AS-level adversary could deanonymize them within 3 months with high probability. The threat model is closer to a real ISP than to a hypothetical global passive adversary.

Traffic correlation is real, well-studied, and increasingly practical for adversaries with control over Internet routing. It is not the dominant cause of named user-side anonymity failures (those come from application-layer or browser-exploit attacks), but it is the dominant cause of academic skepticism about Tor's resistance to nation-state adversaries.

4. Operator OPSEC failure

Most named takedowns of dark web operators trace back to a username collision, a forum cross-post, or a payment trail that predates the dark web service. Ross Ulbricht is the canonical case. He created a Stack Overflow account on March 3, 2012 under his real name and posted a question asking how to connect to a Tor hidden service using PHP and curl. One minute later, he renamed the account to "frosty" and changed the email to a frosty@frosty mailbox. The same code appeared on the Silk Road server when the FBI seized it in October 2013. The system user account on that server was named frosty. An SSH public key on the server carried the comment frosty@frosty, tying it to the same handle as the Stack Overflow account.

That chain took IRS Special Agent Gary Alford years to assemble. Earlier evidence pointed in the same direction: an "altoid" handle on Bitcoin Talk in 2011 promoting a then-new "anonymous market," matching posts on the Shroomery site listing the marketplace, and a Gmail address resolving to rossulbricht@gmail.com. By the time Ulbricht was arrested at the Glen Park Branch Library in San Francisco on October 1, 2013 with the Silk Road admin panel logged in as "Dread Pirate Roberts," the identification chain was overwhelming.

The lesson generalizes. Maintaining perfect operational discipline across thousands of small interactions over years is genuinely hard. An investigator only needs one mistake. Across most named dark web takedowns, the operator's anonymity broke through human-side discipline failures, not through cryptographic attacks on Tor.

5. Compromised relays

The Tor design paper assumes that not too many relays will be malicious at the same time, and that malicious relays will be detected and removed. That assumption has been tested. The clearest case is the CMU SEI Tor relay attack of 2014. Researchers at Carnegie Mellon University's Software Engineering Institute operated more than 115 malicious relays from January 30 through July 4, 2014. Those relays modified Tor protocol headers (specifically, the relay-early cell type used during circuit construction) to encode information that allowed a colluding guard to identify hidden-service circuits.
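The signalling idea is simple enough to model. The sketch below is a toy: it encodes a message as a sequence of cell types, treating an ordinary relay cell as a 0 bit and a relay-early cell as a 1 bit, and decodes it at the other end. The bit order and framing are assumptions made for illustration; the source does not describe the exact encoding the attacking relays used.

```python
RELAY = "RELAY"
RELAY_EARLY = "RELAY_EARLY"


def encode_signal(message: bytes) -> list:
    """Turn each bit of the message into a cell type (1 -> RELAY_EARLY)."""
    cells = []
    for byte in message:
        for bit in range(7, -1, -1):  # most significant bit first (assumption)
            cells.append(RELAY_EARLY if (byte >> bit) & 1 else RELAY)
    return cells


def decode_signal(cells) -> bytes:
    """Recover the message from the observed sequence of cell types."""
    bits = [1 if cell == RELAY_EARLY else 0 for cell in cells]
    out = bytearray()
    usable = len(bits) - len(bits) % 8  # ignore a trailing partial byte
    for i in range(0, usable, 8):
        byte = 0
        for bit in bits[i : i + 8]:
            byte = (byte << 1) | bit
        out.append(byte)
    return bytes(out)


# A colluding relay at one end injects the pattern; one at the other end
# reads it back without needing to decrypt any payload.
cells = encode_signal(b"example")
recovered = decode_signal(cells)
print(len(cells), recovered)
```

The payload stays encrypted throughout. The side channel lives entirely in which cell type is sent, which is why this counts as traffic confirmation rather than a break of Tor's encryption.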

The Tor Project's July 30, 2014 security advisory described the attack and removed the malicious relays. CMU SEI's Black Hat 2014 talk on the attack was withdrawn before the conference. The attack data fed Operation Onymous, the November 2014 17-country operation that produced 17 arrests and 400+ hidden-service seizures including Silk Road 2.0. CMU has stated that it received no payments from the FBI for the attack but acknowledged complying with subpoenas.

The case is significant. It demonstrates that running a meaningful fraction of malicious Tor relays is operationally feasible (a university research center pulled it off), that protocol-level traffic confirmation against onion services is viable when the adversary controls both the guard and the hidden-service directory side, and that the Tor Project's response (detection, advisory, protocol fix) closes specific holes but cannot prevent a sufficiently determined adversary from running malicious relays for some period before detection. The 2017 introduction of Tor onion services v3 addressed several known weaknesses in v2 (longer addresses, stronger cryptography); v2 was formally retired on July 15, 2021 and v3 is widely considered cryptographically intact in 2026.

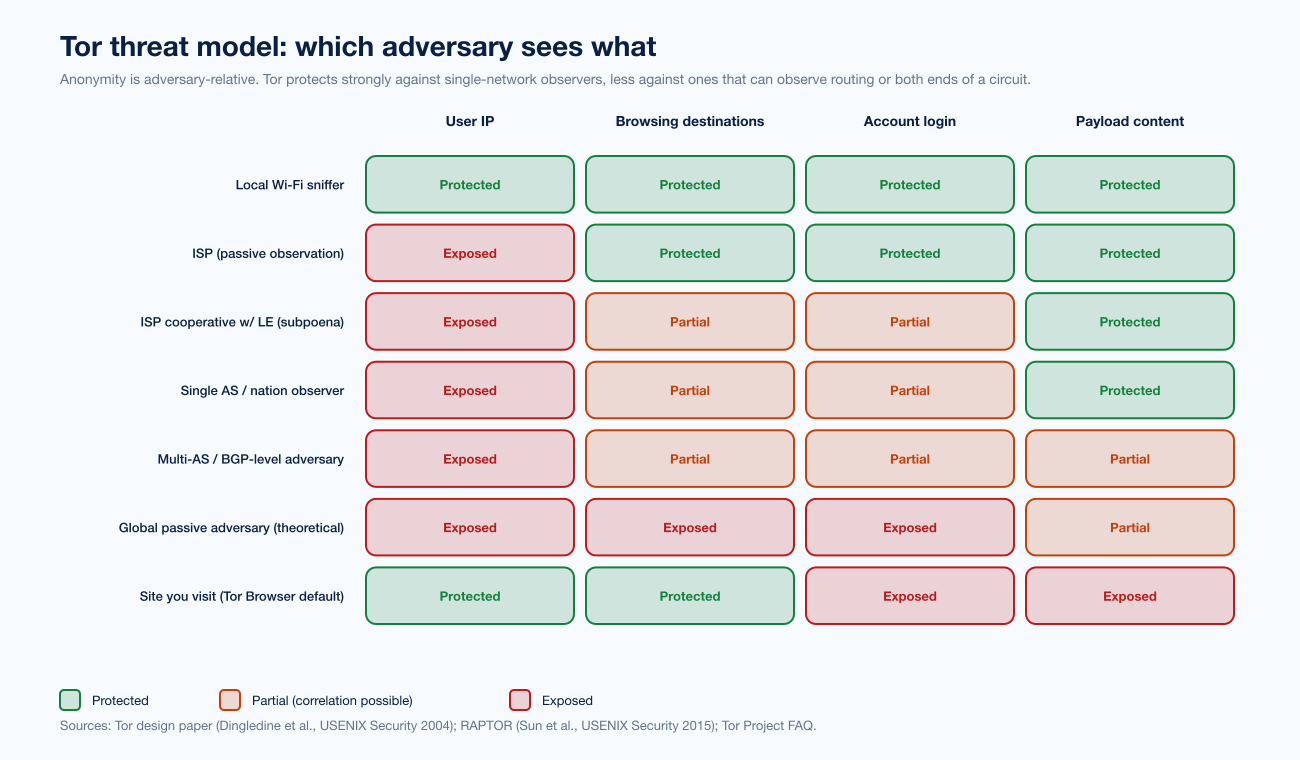

A threat model you can actually use

The single most useful skill for thinking about Tor anonymity is to drop the absolutist framing. "Is Tor anonymous?" is the wrong question. The right question is "anonymous against which adversary, for which session, with which OPSEC stack?" The matrix below organizes the seven adversary classes most readers will care about against the four assets that matter (user IP, browsing destinations, account login, payload content).

Figure 3: A practical threat-model matrix. Tor protects strongly against single-network observers (local Wi-Fi sniffer, passive ISP). It protects partially against ISPs that can subpoena routing data, single-AS observers, and BGP-level adversaries. It does not protect against a global passive adversary (theoretically) or against the destination site itself if the user logs in to a real-name account. Sources: Tor design paper (Dingledine et al., USENIX Security 2004), RAPTOR (Sun et al., USENIX Security 2015), Tor Project FAQ.

The matrix exposes three patterns. First, the user IP column is well-protected against most ordinary adversaries; that is the thing Tor is best at. Second, the payload-content column depends entirely on whether the user's traffic is end-to-end encrypted; HTTPS or onion-service termination protects content from the exit relay regardless of the adversary's network position. Third, the account-login column is exposed to the destination site by definition. If you log in to your Gmail through Tor, Google sees the Tor exit IP plus your real-name account; deanonymization to the destination is automatic. That is not a Tor failure; it is a misuse.

A different way to read the matrix is by adversary tier. Tier 1 (single-network observer): Tor is highly effective. Tier 2 (cooperative ISP, single AS, single nation-state observer): Tor still meaningfully raises the cost of deanonymization, especially for ordinary sessions, but the academic literature shows correlation attacks are practical given enough observation time. Tier 3 (multi-AS, BGP-level, global passive adversary): Tor's anonymity property degrades; this is the regime where journalists facing nation-state adversaries should layer Tails OS and disciplined OPSEC on top of Tor and assume any single channel can be broken.

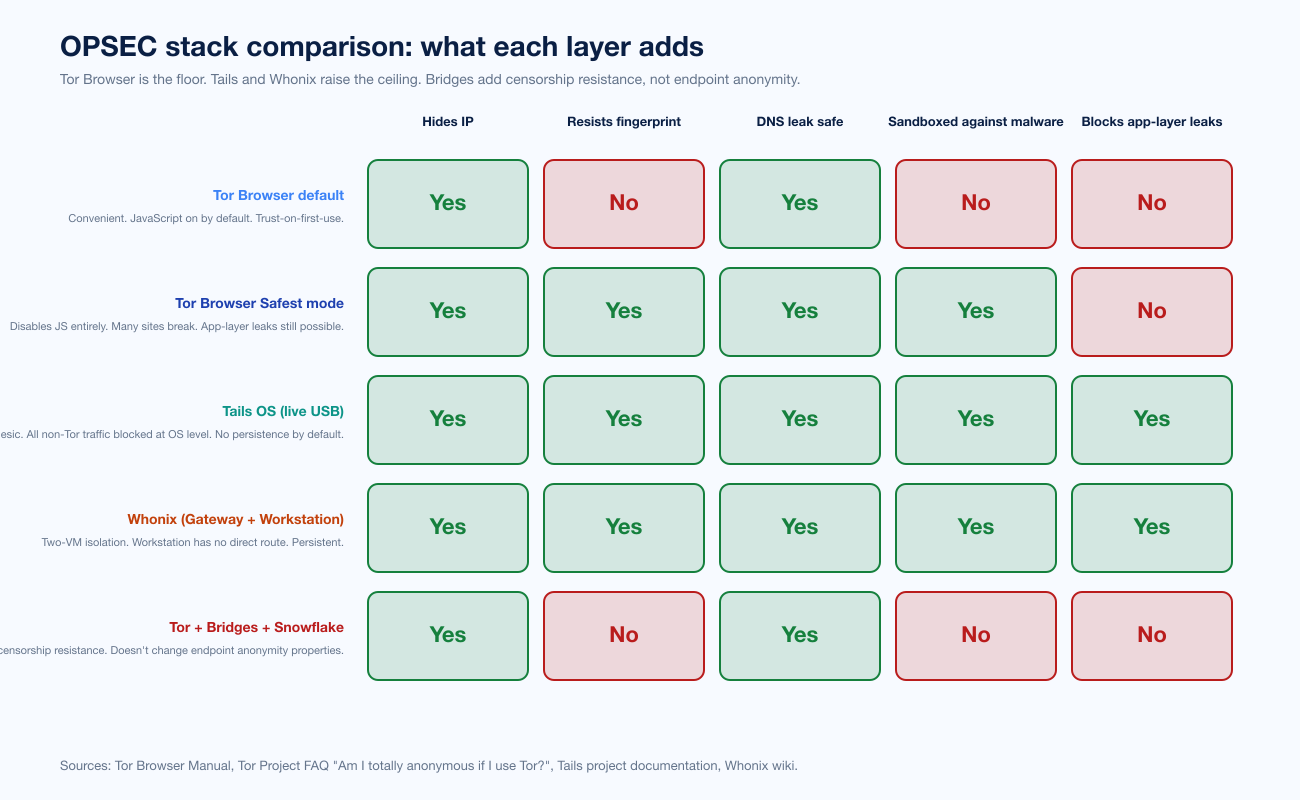

OPSEC stack: how to actually use Tor in 2026

Tor Browser is the floor of Tor anonymity. Most readers get to "good enough" by using Tor Browser at default settings, on a fully patched system, without logging in to anything tied to a real identity, and with HTTPS or onion-service termination on every session. The Tor Project's own FAQ is the canonical guidance.

For higher-stakes use cases (journalists in authoritarian states, activists, sensitive sources, security researchers operating in contested networks), there are three layers above the Tor Browser default that meaningfully raise the bar.

Figure 4: A comparative OPSEC stack table. Tor Browser default is the floor. Tor Browser Safest mode disables JavaScript and substantially reduces browser-exploit risk. Tails OS routes all non-Tor traffic to /dev/null at the OS level and forgets the session at shutdown. Whonix uses a two-VM architecture so that the workstation has no direct route to the Internet and Tor is enforced at the network layer. Tor + bridges + Snowflake adds censorship resistance but does not change endpoint anonymity. Sources: Tor Browser Manual, Tails project documentation, Whonix wiki, Tor Project FAQ.

Tor Browser Safest mode. Tor Browser ships with three security levels: Standard (JavaScript enabled, default), Safer (disables JavaScript on non-HTTPS sites and turns off JIT compilation and some font and media features), and Safest (disables JavaScript entirely). Most browser-exploit-class attacks against Tor users have required JavaScript execution to deliver the payload. EgotisticalGiraffe required E4X. The FBI's Playpen NIT required JavaScript. Disabling JavaScript breaks many sites; it also removes the dominant attack vector for the most consequential class of Tor-user deanonymization.

Tails OS (The Amnesic Incognito Live System) is a Debian-based live operating system designed to boot from a USB stick or DVD. By default, Tails routes all network traffic through Tor and blocks everything else at the OS level. It is amnesic: at shutdown, it forgets the session unless the user has enabled an encrypted persistent volume. Tails is the de facto choice for journalists, activists, and security researchers who need to leave no trace on the host.

Whonix is a two-VM architecture. The Whonix Gateway maintains the Tor connection. The Whonix Workstation has no direct Internet route at all; its only network adapter is connected to the Gateway. Even if an application inside the Workstation is compromised and tries to leak the user's real IP, the Workstation does not know what the real IP is. Whonix is the de facto choice for persistent pseudonymous workspaces.

Tor + bridges + Snowflake. Bridges (private, unlisted Tor relays) and pluggable transports (obfs4, Snowflake, Conjure, WebTunnel) let users in censored regions disguise their Tor connection. This adds censorship resistance, not endpoint anonymity. A user in Iran or Russia who needs both should layer bridges on top of one of the layers above.
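Bridges matter because ordinary relays are publicly listed, so detecting Tor use reduces to a set lookup. The sketch below shows how little an observer needs: a list of relay addresses and the destination addresses of a subscriber's flows. Both lists are made-up placeholders drawn from the documentation address ranges, and the function name is illustrative.

```python
import ipaddress


def flag_tor_connections(flow_destinations, relay_addresses):
    """Return the destinations that match a known public relay address."""
    relays = {ipaddress.ip_address(addr) for addr in relay_addresses}
    return [dst for dst in flow_destinations if ipaddress.ip_address(dst) in relays]


# Assumption: placeholder relay list; a real observer would take it from
# the public Tor directory data.
public_relays = ["192.0.2.10", "198.51.100.23", "203.0.113.5"]

# Destinations an ISP might see for one subscriber.
subscriber_flows = ["203.0.113.77", "198.51.100.23", "192.0.2.200"]

flagged = flag_tor_connections(subscriber_flows, public_relays)
print(flagged)
```

An unlisted bridge does not appear in the public list, so this lookup misses it. Pluggable transports then address the next step, where the observer tries to recognize Tor by what the traffic looks like instead of where it goes.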

Rule of thumb: if your threat model includes a determined nation-state adversary, you need at least Tails on top of the Tor Browser default. If your threat model is "a curious ISP" or "a corporate network admin," Tor Browser default is enough. If your threat model includes physical seizure of your laptop, Tails is the only layer that solves the problem because it does not write to disk by default.
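The rule of thumb can be written down as a small decision function. This is only a restatement of the guidance in this section in executable form; the class, field names, and layer labels are illustrative, and a real threat model has more dimensions than four booleans.

```python
from dataclasses import dataclass


@dataclass
class ThreatModel:
    nation_state: bool = False          # determined nation-state adversary
    physical_seizure: bool = False      # the laptop itself may be seized
    long_lived_pseudonym: bool = False  # persistent pseudonymous workspace
    censored_network: bool = False      # Tor is blocked or its use is risky


def recommend_stack(threat: ThreatModel) -> list:
    """Map a coarse threat model to the OPSEC layers discussed above."""
    layers = ["Tor Browser (patched, no real-name logins)"]
    if threat.nation_state or threat.physical_seizure:
        layers.append("Tails")
    if threat.long_lived_pseudonym:
        layers.append("Whonix")
    if threat.censored_network:
        layers.append("bridge + pluggable transport")
    return layers


curious_isp = recommend_stack(ThreatModel())
journalist = recommend_stack(ThreatModel(nation_state=True, censored_network=True))
print(curious_isp)
print(journalist)
```

Writing the rule this way makes one property explicit: the layers are additive, and the bridge layer is independent of the others because it addresses censorship, not endpoint anonymity.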

Common myths the literature actually addresses

"The dark web is mostly illegal." The most-cited measurement comes from a 2016 Terbium Labs study of 400 randomly selected .onion sites: more than half of measured domains were legal in content, and of the catalogued illegal domains, 75%+ were marketplaces. The PNAS paper cited above measures 6.7% of daily Tor client engagement going to onion services classified as predominantly illicit. Meaningful, but not "mostly." Many .onion services are personal blogs, mirrors of clearnet news sites, and academic projects.

"Tor was created by the US government to spy on people." The protocol was developed at the US Naval Research Laboratory in the late 1990s and early 2000s and remains funded in part by the US government. The Tor Project is a US 501(c)(3) non-profit. Government funding is not a backdoor; the protocol is open-source, peer-reviewed, and has survived two decades of academic adversarial scrutiny. The "Tor Stinks" NSA deck is itself the strongest contrary evidence: if the NSA had a backdoor, the deck would not concede that population-scale deanonymization was infeasible.

"VPN + Tor is more anonymous than Tor alone." The Tor Project's official position is more nuanced. A VPN before Tor hides the fact of Tor use from your ISP but adds the VPN as a single point of trust. A VPN after Tor lets the VPN see the unencrypted destination, which is bad unless you trust the VPN as much as the destination. The cleaner approach for hiding Tor use from an ISP is a Tor bridge with a pluggable transport.

"Onion services are more secure than clearnet through Tor." Onion services give end-to-end encryption between the user's Tor client and the hidden service without involving an exit relay. They do not protect against an adversary that has compromised the operator's infrastructure or against application-layer issues. A misconfigured onion service can leak its real IP through HTTP server-status pages, email-based password resets, CDN integrations, or TLS certificates that name the real domain.

"My ISP cannot tell I am using Tor." Without a bridge or pluggable transport, the answer is exactly the opposite. Tor's default guard relays are publicly listed. An ISP can trivially see a connection going to a Tor relay; what the ISP cannot see is the destination beyond the Tor exit. To hide the fact of Tor use itself, use a bridge.

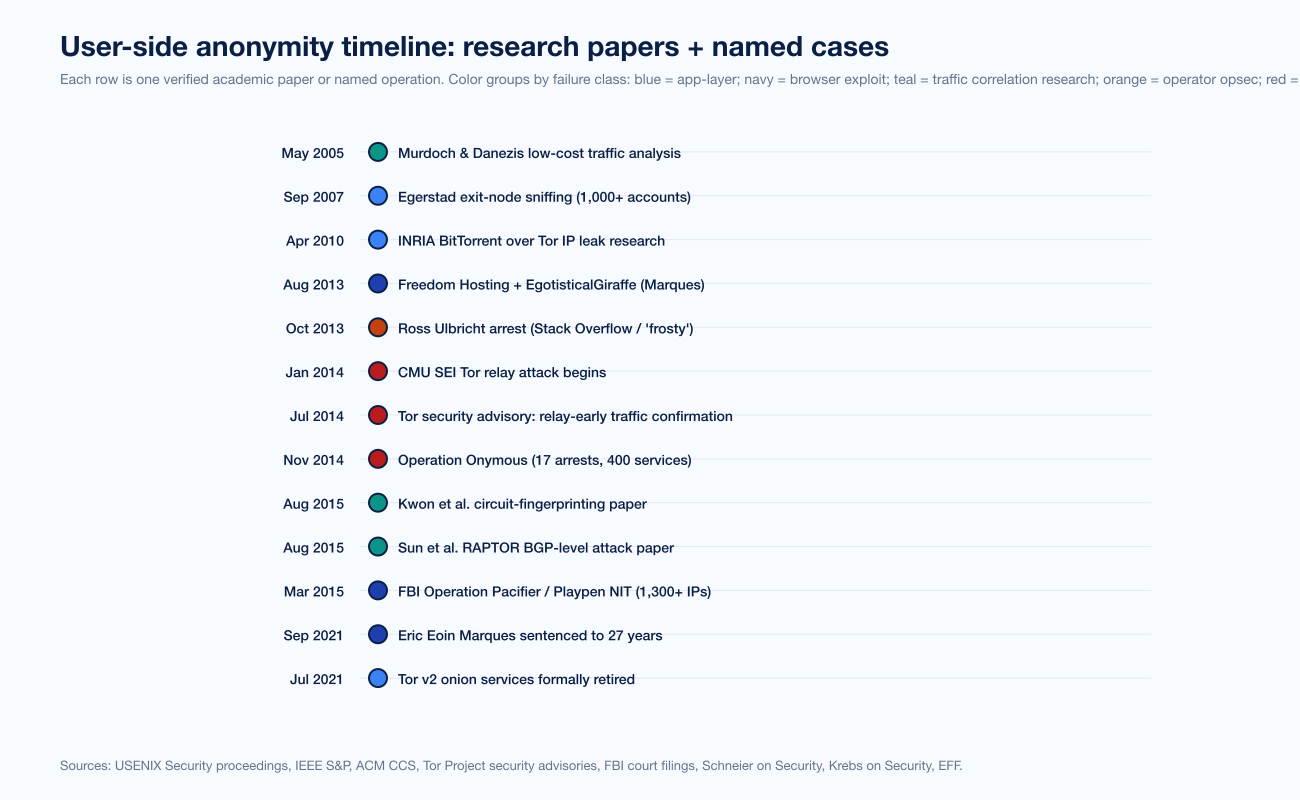

Named user-side cases over time

The user-side anonymity timeline below is one row per documented research paper or named operation that affected users (not operators).

Figure 5: A timeline of user-side anonymity research and named cases from Murdoch and Danezis (2005) through Tor v2 retirement (July 2021). Each row is one verified academic paper or named operation, color-grouped by failure class. Sources: USENIX Security and IEEE S&P proceedings, ACM CCS, Tor Project security advisories, FBI court filings.

The empirical pattern is consistent. Application-layer leaks and browser exploits dominate the consequential user-side deanonymizations. Traffic correlation is heavily studied in academia but rarely the public-facing cause of named user arrests. Compromised relays (CMU SEI 2014) are operationally feasible but produce detectable artifacts that the Tor Project closes within months. Operator OPSEC failures dominate operator-side cases and are covered in detail in our How Law Enforcement Tracks Dark Web Criminals post.

What this means for buyers, journalists, and security teams

Stingrai is a Toronto-headquartered penetration-testing firm. Tor anonymity is not a service we sell; we cite it because every CISO, journalist, activist, and security buyer eventually needs a working mental model.

CISOs and security leaders. Treat dark-web exposure as a downstream consequence of credential theft and initial-access-broker activity, not as a Tor protocol problem. Your customers' credentials end up on Tor markets after an infostealer hits a corporate or personal device. The leverage is upstream: continuous offensive testing, PTaaS, and rigorous identity hygiene reduce the volume of credentials that reach dark-web markets in the first place.

Journalists and activists. Tor Browser at default settings is the floor. Use Safest mode in any session touching sensitive sources. Use Tails on a clean USB when you cannot trust the host machine. If you maintain a long-running pseudonymous identity, learn Whonix. Never log in to anything tied to your real identity inside Tor Browser, ever, including Gmail.

Founders and small companies thinking about dark-web monitoring. The honest answer to "is our company on the dark web?" is "almost certainly yes, in the form of leaked credentials." Dark-web monitoring vendors will sell you ongoing surveillance with marginal value; reducing the number of leaked credentials is more valuable, and the controls that satisfy SOC 2 or PCI DSS 4.0 also reduce the operational fingerprint that initial-access brokers monetize.

Researchers and engineers. Read the Tor design paper, the Murdoch and Danezis 2005 paper, and the Tor Project FAQ on staying anonymous. The combined 80 pages give a more accurate model than any vendor blog. The academic literature is publicly indexed at Free Haven's anonbib.

Frequently Asked Questions

How anonymous is the dark web really?

Tor (the protocol underlying most of the dark web) provides real anonymity against single-network observers like an ISP or a local Wi-Fi sniffer, and against the destination site itself if the user does not log in to a real-name account. It does not provide anonymity against application-layer leaks, browser exploits delivered through Tor (EgotisticalGiraffe, Operation Pacifier), traffic correlation by adversaries observing both ends of a circuit, operator OPSEC failures, or compromised relays at scale (CMU SEI 2014). Practical anonymity for ordinary users is high if they use Tor Browser at default settings, do not log in to real-name accounts, and use HTTPS or onion services for end-to-end encryption. Anonymity for high-stakes use cases (journalists, activists, security researchers) is meaningfully better when Tor Browser runs inside Tails OS or Whonix, with JavaScript disabled through Tor Browser's Safest mode for any session touching sensitive sources.
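To make "traffic correlation by adversaries observing both ends of a circuit" concrete, here is a minimal Python sketch. It assumes a hypothetical adversary who has packet counts per one-second window for one user on the entry side and for several candidate flows on the exit side. All numbers, the flow names, and the helpers `pearson` and `best_match` are invented for illustration; the attacks in the academic literature are considerably more sophisticated than a plain correlation coefficient.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences of at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat series carries no timing signal
    return cov / sqrt(var_x * var_y)


def best_match(entry_series, exit_flows):
    """Return (flow_name, score) for the exit flow most correlated with entry_series."""
    scores = {name: pearson(entry_series, series) for name, series in exit_flows.items()}
    name = max(scores, key=scores.get)
    return name, scores[name]


# Hypothetical packet counts per one-second window (invented data).
entry_side = [12, 0, 3, 40, 41, 2, 0, 18, 22, 1]
exit_flows = {
    "flow_a": [5, 6, 5, 7, 6, 5, 6, 5, 7, 6],       # steady background traffic
    "flow_b": [11, 1, 2, 38, 43, 3, 0, 17, 20, 2],  # same bursts as the entry side
    "flow_c": [30, 28, 2, 1, 0, 25, 31, 0, 2, 27],  # bursts at different times
}

name, score = best_match(entry_side, exit_flows)
print(name, round(score, 3))
```

With this invented data the script picks `flow_b`, whose bursts line up with the entry-side bursts, with a score close to 1. The point of the toy is that no encryption is broken: the adversary matches timing and volume, which is why the attack requires visibility at both ends of the circuit.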

Can the NSA or FBI break Tor?

There is no public evidence that any intelligence agency has broken Tor's protocol cryptography. The 2013 NSA "Tor Stinks" deck leaked by Edward Snowden conceded that population-scale Tor deanonymization was infeasible. Documented FBI and NSA techniques against Tor users (EgotisticalGiraffe, Operation Pacifier NIT, FoxAcid) attack the browser, not the protocol. Tor onion services v3 (introduced 2017, v2 retired July 2021) use ed25519 and curve25519 cryptography that is widely considered intact in 2026.

Is using Tor illegal?

Using Tor itself is legal in most jurisdictions, including the United States, Canada, the United Kingdom, and most of the European Union. A handful of authoritarian states (China, Iran, Belarus) restrict Tor by blocking its relays or criminalizing its use. The activity conducted over Tor may be illegal regardless of jurisdiction. Tor is widely used legally by journalists, dissidents, activists, ordinary privacy-conscious users, and security researchers.

What is the best operating system for Tor anonymity?

For most users, Tor Browser on a fully patched Windows / macOS / Linux laptop at default settings is sufficient. For high-stakes use cases, Tails OS is the de facto choice for ephemeral sessions and Whonix is the de facto choice for persistent pseudonymous identities. Tails routes everything through Tor at the OS level and forgets the session at shutdown. Whonix uses a two-VM architecture so that even a compromised application inside the Workstation cannot leak the user's real IP.

Does a VPN make Tor more anonymous?

The Tor Project's official guidance is that combining a VPN with Tor adds complexity and a single point of trust without reliably improving anonymity. A VPN before Tor hides the fact of Tor use from your ISP but adds the VPN as a party that knows you connected to Tor. A VPN after Tor lets the VPN see the destination, which is bad unless you trust the VPN as much as the destination. The cleaner approach to hiding Tor use from an ISP is a Tor bridge with a pluggable transport (obfs4, Snowflake, Conjure, WebTunnel).

Can my ISP see that I am using Tor?

By default, yes. Tor's guard relays are publicly listed in the Tor consensus. An ISP can see traffic going to a known Tor relay; it cannot see the destination beyond the Tor exit. To hide the fact of Tor use itself, use a Tor bridge with a pluggable transport: Snowflake disguises Tor as WebRTC, obfs4 disguises Tor as a random byte stream, WebTunnel disguises Tor as standard HTTPS to a regular-looking website.
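Why an ISP can label a connection as Tor follows directly from the relay list being public. The Python sketch below is a minimal illustration, assuming a consensus-style text whose `r` lines follow the layout `r nickname identity digest date time IP ORPort DirPort`. The sample lines, nicknames, digests, and the helper names `relay_addresses` and `flag_tor_connections` are hypothetical, and the addresses come from reserved documentation ranges.

```python
import ipaddress

# Hypothetical excerpt in the style of consensus "r" (router status) lines.
# Assumed field layout: r nickname identity digest date time IP ORPort DirPort.
# Every value below is invented for illustration.
SAMPLE_CONSENSUS = """\
r ExampleGuard AAAAAAAAAAAAAAAAAAAAAAAAAAA BBBBBBBBBBBBBBBBBBBBBBBBBBB 2026-01-01 00:00:00 192.0.2.10 9001 0
s Fast Guard Running Stable Valid
r ExampleMiddle CCCCCCCCCCCCCCCCCCCCCCCCCCC DDDDDDDDDDDDDDDDDDDDDDDDDDD 2026-01-01 00:00:00 198.51.100.7 443 0
s Fast Running Valid
"""


def relay_addresses(consensus_text):
    """Collect the IPv4 address from every 'r' line of a consensus-style document."""
    addresses = set()
    for line in consensus_text.splitlines():
        fields = line.split()
        if len(fields) >= 9 and fields[0] == "r":
            addresses.add(ipaddress.ip_address(fields[6]))
    return addresses


def flag_tor_connections(flow_destinations, relays):
    """Return the destinations an on-path observer could label as known Tor relays."""
    return [dst for dst in flow_destinations if ipaddress.ip_address(dst) in relays]


relays = relay_addresses(SAMPLE_CONSENSUS)
observed = ["203.0.113.5", "192.0.2.10", "198.51.100.99"]  # hypothetical flow log
print(flag_tor_connections(observed, relays))
```

The observer learns only that the user connected to a listed relay, not where the traffic ends up. A bridge address is not published in the public consensus, so it would not appear in `relays`; that is the property the bridge and pluggable-transport advice relies on.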

What is the safest way to access the dark web?

For most ordinary users: download Tor Browser from torproject.org (verify the GPG signature), keep it patched, run it at default settings, do not log in to anything tied to your real identity, and use HTTPS or onion-service termination on every session. For high-stakes users: use Tails OS booted from a clean USB, with Tor Browser in Safest mode (which disables JavaScript). Never run BitTorrent over Tor. Be cautious opening documents downloaded through Tor Browser; some document formats (PDF, Word) can fetch remote resources when opened in an external application, which bypasses Tor at the application layer.

What is the difference between a guard relay and an exit relay?

A guard relay is the first relay in a Tor circuit. It sees the user's real IP and the encrypted Tor cells, and forwards them onward without seeing the destination. The user picks one or a small number of guards and reuses them for two to three months in the current Tor protocol. An exit relay is the last relay in a Tor circuit. It sees the destination IP and port and the unwrapped payload (encrypted further only if the application layer uses HTTPS or another end-to-end protocol). Exit relays are the most legally exposed Tor operators because destination logs show the exit IP, not the user's.
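The split in knowledge between guard and exit can be sketched as a toy model in Python. This is not Tor's actual cell format or cryptography: the `Layer` class and the `build_onion` and `relay_views` helpers are hypothetical, and "encryption" is modeled only as a rule that a relay can read the one layer addressed to it. The sketch shows only who learns what along a three-hop circuit.

```python
from dataclasses import dataclass


@dataclass
class Layer:
    """One onion layer: readable only by `for_relay`, revealing `next_hop` and `inner`."""
    for_relay: str
    next_hop: str
    inner: object  # another Layer, or the application payload at the innermost level


def build_onion(path, destination, payload):
    """Wrap payload so each relay in `path` learns only the hop that follows it."""
    hops = path[1:] + [destination]
    onion = payload
    for relay, next_hop in reversed(list(zip(path, hops))):
        onion = Layer(for_relay=relay, next_hop=next_hop, inner=onion)
    return onion


def relay_views(client_ip, path, destination, payload):
    """Walk the circuit and record what each relay can observe."""
    views = {}
    onion = build_onion(path, destination, payload)
    previous = client_ip
    for relay in path:
        assert onion.for_relay == relay  # only the intended relay peels this layer
        views[relay] = {
            "sees_from": previous,
            "sends_to": onion.next_hop,
            "sees_payload": not isinstance(onion.inner, Layer),
        }
        previous, onion = relay, onion.inner
    return views


views = relay_views(
    client_ip="203.0.113.5",  # hypothetical user address (documentation range)
    path=["guard", "middle", "exit"],
    destination="example.com:443",
    payload="TLS bytes or cleartext",
)
for relay, view in views.items():
    print(relay, view)
```

In the printed views, only `guard` has the client address in `sees_from`, and only `exit` has the destination in `sends_to` and `sees_payload` set to true. No single relay holds both the user's address and the destination, which is the property the three-hop design is meant to provide.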

Are dark web markets really anonymous?

It depends on whether you are a buyer, a vendor, or an operator. A careful buyer using Tor Browser at default settings, paying in a privacy-respecting cryptocurrency, and never reusing identifiers gets a reasonable but not absolute anonymity property. A vendor who uses the same handle across multiple markets is much more exposed. An operator running infrastructure for years almost always gets caught eventually through some combination of operator OPSEC failure, blockchain tracing, infiltration, and server seizure (methods drawn from the five-method framework in our How Law Enforcement Tracks Dark Web Criminals explainer). The Stingrai blog also covers dark web data pricing and top dark web marketplaces for buyer-side context.

How does Stingrai think about dark-web exposure for a normal company?

Stingrai's working model is that "dark-web exposure" for a normal corporate environment is a downstream symptom of credential-management and identity-hygiene problems, not a Tor protocol problem. Initial-access brokers buy and sell credentials harvested from infostealers running on personal and corporate devices. The leverage is upstream: continuous offensive testing through PTaaS, patched endpoints, MFA-everywhere identity, and rigorous incident-response drills. Stingrai was founded in 2021 and is headquartered in Toronto with a London office; team certifications include OSCE3, OSCP, OSWE, OSED, OSEP, CREST CRT, and CISSP.

Methodology note

Every numeric claim in this post links to a primary publisher or peer-reviewed paper. Tor metrics come from metrics.torproject.org. The academic deanonymization papers (Murdoch and Danezis 2005, Sun et al. RAPTOR 2015, Kwon et al. 2015, Johnson et al. 2013) link to their conference full text. Named cases (EgotisticalGiraffe, Operation Pacifier, Ross Ulbricht, Eric Eoin Marques, CMU SEI relay attack, Operation Onymous) are sourced to FBI court filings, Tor Project security advisories, EFF case-tracking pages, and named investigative reporting from Krebs on Security, The Guardian, Wired, and Schneier on Security.

References

Roger Dingledine, Nick Mathewson, Paul Syverson, "Tor: The Second-Generation Onion Router," USENIX Security 2004.

Steven J. Murdoch, George Danezis, "Low-Cost Traffic Analysis of Tor," IEEE Symposium on Security and Privacy 2005.

Yixin Sun, Anne Edmundson, Laurent Vanbever, Oscar Li, Jennifer Rexford, Mung Chiang, Prateek Mittal, "RAPTOR: Routing Attacks on Privacy in Tor," USENIX Security 2015.

Albert Kwon, Mashael AlSabah, David Lazar, Marc Dacier, Srinivas Devadas, "Circuit Fingerprinting Attacks: Passive Deanonymization of Tor Hidden Services," USENIX Security 2015.

Aaron Johnson, Chris Wacek, Rob Jansen, Micah Sherr, Paul Syverson, "Users Get Routed: Traffic Correlation on Tor by Realistic Adversaries," ACM CCS 2013.

Pere Manils et al., "Compromising Tor Anonymity Exploiting P2P Information Leakage," INRIA / arXiv 1004.1461, 2010.

Tor Project, "Tor security advisory: 'relay early' traffic confirmation attack," July 30, 2014.

Tor Project, "Thoughts and Concerns about Operation Onymous," November 9, 2014.

Tor Project, "V2 Onion Services Deprecation Timeline," 2020-2021.

Tor Project, "Am I totally anonymous if I use Tor?" Tor Project FAQ.

Tor Project, Tor Metrics dashboard, accessed 2025.

Tor Project, "Staying ahead of censors in 2025," Tor Project blog.

Bruce Schneier, "How the NSA Attacks Tor/Firefox Users With QUANTUM and FOXACID," Schneier on Security, October 7, 2013.

James Ball, Bruce Schneier, Glenn Greenwald, "Attacking Tor: how the NSA targets users' online anonymity," The Guardian, October 4, 2013.

Electronic Frontier Foundation, "The Playpen Cases: Frequently Asked Questions," EFF.

Wikipedia, "Operation Pacifier," maintained article with primary-source citations.

Wikipedia, "Freedom Hosting," maintained article with primary-source citations.

The Record (Recorded Future News), "Freedom Hosting admin gets 27 years in prison for hosting child pornography," September 2021.

Wikipedia, "Ross Ulbricht," maintained article.

Federal Bureau of Investigation, "Ross William Ulbricht's Laptop," FBI history archive.

Eric Jardine, Andrew M. Lindner, Gareth Owenson, "The potential harms of the Tor anonymity network cluster disproportionately in free countries," PNAS, 2020.

Daily Dot, "Dark Web Content Mostly Legal, Terbium Labs Study Finds," 2016.

Wired, "Rogue Nodes Turn Tor Anonymizer Into Eavesdropper's Paradise," September 2007.

Tails Project, Tails (The Amnesic Incognito Live System), official documentation.

Whonix Project, "Whonix overview," Whonix wiki.

Tor Browser Manual, "Security Settings," Tor Project.

Krebs on Security, "Eric Eoin Marques tag," tagged investigations archive.

Stingrai, "How Law Enforcement Tracks Dark Web Criminals," operator-side companion to this post.

Stingrai, "Top Dark Web Marketplaces 2026," market-by-market reference.

Stingrai, "Dark Web Data Pricing 2026," buyer-side pricing context.

Stingrai, "Compromised Credential Statistics 2026," credential-theft data driving dark-web exposure for normal companies.